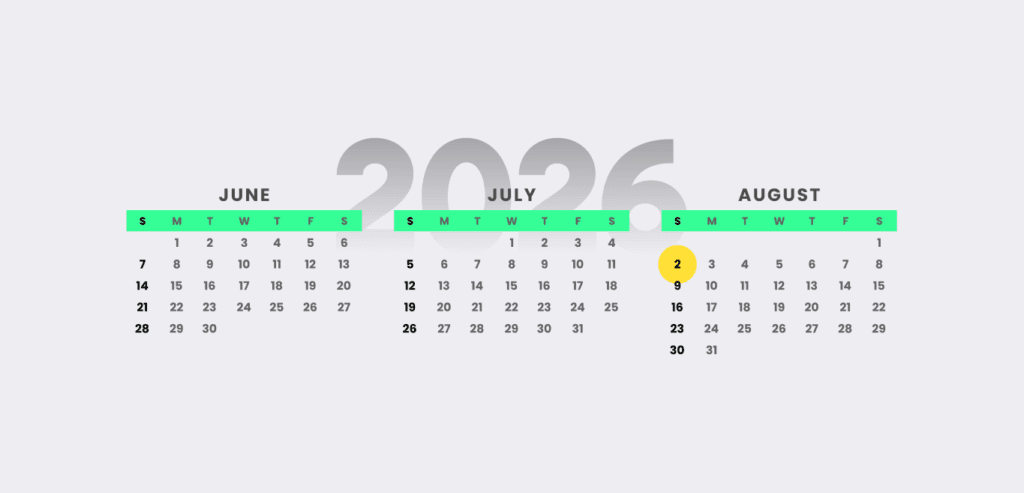

The EU’s AI Act is reaching the final stage of its tortuous journey from legislative proposal to enforceable law. The act came into force on August 1, 2024, with a staggered implementation that set a series of deadlines over the following months and years. Unless the much-publicised Digital Omnibus proposal is approved soon by the European Parliament, a key deadline will arrive on August 2, 2026.

UK businesses developing or using high-risk systems in the EU have until then to get their AI governance in order. Regulators will want to see evidence of control, not just assertions of compliance. For some organisations, this may require a significant cultural shift. But pragmatic best-practice standards like ISO 42001 can get them there.

The Story So Far

Since it passed into law, various aspects of the act have come into force. Most notably, February 2025 saw new AI literacy requirements for staff take effect, governance structures such as the AI Office and AI Board launch, and a ban on AI systems that pose “unacceptable risks” begin to apply. However, under the act’s risk-based approach, this is the easy bit. With systems posing unacceptable risks banned, and no new rules for those judged to pose minimal or no risk (e.g. spam filters), the focus turns to “limited” and “high-risk” systems.

Those judged limited risk (like chatbots and some deepfake generators) will need to “ensure that humans are informed when necessary to preserve trust”, according to the European Commission. But it is high-risk systems where the biggest compliance challenges lie.

Under the Compliance Microscope

As we’ve previously explained, high-risk systems are those used in areas such as biometric identification, critical sectors like healthcare, education and employment (where AI decisions can impact people’s lives), and essential infrastructure (e.g. energy grids and transportation systems). Among the use cases the Commission cites are exam scoring, robot-assisted surgery, CV sorting, credit scoring, and software used to make visa decisions and prepare court rulings.

These will be subject to strict obligations before they can be put on the market, namely:

- Continuous risk assessment and mitigation

- High quality datasets to train and test the AI

- Detailed logging of activity and documentation

- Clear information to be provided to deployers (organisations using the models)

- Appropriate human oversight

- High levels of “robustness, security and accuracy”

These obligations are complicated somewhat by the different requirements asked of providers (model developers), deployers (users), importers and distributors. The roles sound clear on paper. But a deployer will be classed as a provider if it substantially modifies a system or changes its intended purpose. Complicating matters further, organisations might occupy more than one role simultaneously. Think of a SaaS business that takes a third-party foundation model, fine-tunes it, and then deploys it to customers across multiple jurisdictions.

Starting with Visibility

All of which makes AI governance non-negotiable. But what should a best-practice strategy include in this fast-evolving market? For PSE Consulting managing director Chris Jones, visibility should be the first priority.

“Many businesses are already using AI in small, distributed ways across functions, but very few have a clear inventory of where it sits, what decisions it influences and whether it falls into a high-risk category,” he tells IO (formerly ISMS.online). “The starting point should be a structured review of AI use cases, ownership of the associated risks, and how those risks link back to existing obligations under frameworks like GDPR and NIS2.”

This is where compliance can get complicated, because the obligations under each framework overlap, says Bizzdesign chief strategy officer Nick Reed.

“For example, an AI system processing personal data in a critical infrastructure context can trigger GDPR, NIS2, and AI Act obligations simultaneously. When organisations treat these as separate compliance tracks, they duplicate effort and may miss the commonalities that enable much greater efficiency in managing compliance,” he tells IO.

“Addressing this requires structural visibility across the enterprise. Organisations need a coherent view of how AI, business activities, data and critical systems intersect; not just within individual regulatory contexts but across all of them. Enterprise architecture provides that shared enterprise-wide semantic model, connecting AI systems to the capabilities, processes, data, third parties and obligations they touch in a way that makes coordinated governance possible, rather than fragmented initiatives that duplicate effort.”

The next step for complying organisations is to understand their position in the AI supply chain.

“Many organisations assume they are just users of AI, but in practice they may be acting as integrators or distributors once they customise or embed models into their own services,” says PSE Consulting’s Jones. “That changes their obligations and their risk exposure, so mapping those roles early is essential.”

Bizzdesign’s Reed agrees, arguing that visibility once again becomes vital.

“To determine role and assess risk exposure, organisations need to see how AI systems connect to the broader enterprise: which business capabilities they support, which processes they enable, which data they access, and which vendors supply them,” he says.

“Without that connected view, role classification is at risk of being based on opinion rather than fact. The test comes when something changes. If a vendor tool is reclassified as high-risk or withdrawn from the market, can you answer immediately which processes depend on it, what data it touches, whether alternatives exist, and how quickly you could move?”
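To make the idea of a connected view concrete, here is a minimal sketch in Python of an AI inventory that can answer Reed's "what depends on this vendor?" question. It is illustrative only: the `AISystem` fields, the `impact_of_vendor_change` helper, and the example systems and vendor names are all hypothetical, and the risk tiers and roles are simply recorded as labels rather than derived from the act's legal tests.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AISystem:
    """One entry in a hypothetical AI inventory (all fields are assumptions)."""
    name: str
    vendor: str
    owner: str                       # team accountable for the associated risk
    risk_tier: str                   # e.g. "minimal", "limited", "high"
    role: str                        # e.g. "provider", "deployer"
    processes: tuple[str, ...] = ()  # business processes that depend on it
    data_categories: tuple[str, ...] = ()
    regimes: tuple[str, ...] = ()    # overlapping obligations, e.g. GDPR, NIS2


def impact_of_vendor_change(inventory, vendor):
    """Return owner, processes, data and regimes for each system from a vendor."""
    return {
        system.name: {
            "owner": system.owner,
            "processes": list(system.processes),
            "data": list(system.data_categories),
            "regimes": list(system.regimes),
        }
        for system in inventory
        if system.vendor == vendor
    }


# Made-up example entries, not real products or vendors.
INVENTORY = [
    AISystem(
        name="cv-screening",
        vendor="Example Vendor A",
        owner="HR",
        risk_tier="high",
        role="deployer",
        processes=("recruitment",),
        data_categories=("applicant personal data",),
        regimes=("EU AI Act", "GDPR"),
    ),
    AISystem(
        name="support-chatbot",
        vendor="Example Vendor B",
        owner="Customer Service",
        risk_tier="limited",
        role="deployer",
        processes=("customer support",),
        data_categories=("customer contact details",),
        regimes=("EU AI Act", "GDPR"),
    ),
]

if __name__ == "__main__":
    # If Example Vendor A's tool were reclassified or withdrawn, what is affected?
    print(impact_of_vendor_change(INVENTORY, "Example Vendor A"))
```

In practice this information would more likely live in an enterprise architecture or GRC tool than in a script, but the shape of the question is the same: one record per system, linked to its owner, processes, data and obligations.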

A Mature Approach with ISO 42001

To build the agility they need to stay compliant amid regulatory change, vendor reclassification and strategic pivots, organisations must adopt a structured and consistent approach to governance, Reed continues. This is where ISO 42001 can help.

“It formalises expectations around risk management, documentation, oversight, and continuous improvement across the AI lifecycle,” he says. “For organisations navigating the EU AI Act alongside GDPR and NIS2, frameworks like ISO 42001 help translate regulatory requirements into repeatable processes that compliance and risk teams can operationalise with confidence.”

Nik Kairinos, CEO and co-founder at RAIDS AI, shares more pragmatic reasons why ISO 42001 can help.

“The EU has developed a draft European standard specifically addressing the requirements of Article 17 of the act, which mandates quality management systems (QMS) for high-risk AI providers: prEN 18286 (Artificial Intelligence – Quality Management System for EU AI Act Regulatory Purposes),” he tells IO.

“Organisations with existing ISO 42001 certification have a significant head start with EU AI Act compliance, as it provides the operational foundation for prEN 18286. With the presumption of conformity, organisations implementing prEN 18286 can assume they meet Article 17 obligations – so it’s this that firms need to focus on.”

The goal shouldn’t be to start from scratch but rather extend existing relevant controls and assurance models into the AI space, says PSE Consulting’s Jones.

“The organisations that will adapt most successfully are those that treat trustworthy AI as an operational discipline rather than a compliance exercise,” he concludes. “If you know where your AI is, who owns it, and how it behaves when things go wrong, you are already most of the way towards meeting the intent of the regulation.”

With potential non-compliance fines reaching €15m (£13m) or 3% of global annual turnover, there’s no time to wait around.
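As a rough illustration of what that exposure means, the Python sketch below computes the ceiling for a given turnover. It assumes the "whichever is higher" reading for larger undertakings and the "whichever is lower" reading for SMEs; the function name and the example turnover figures are hypothetical, and the result is an upper bound, not a prediction of any actual penalty.

```python
from decimal import Decimal

FIXED_CAP_EUR = Decimal("15000000")  # the €15m figure cited above
TURNOVER_RATE = Decimal("0.03")      # 3% of global annual turnover


def max_fine_eur(global_annual_turnover_eur, is_sme=False):
    """Upper bound on the fine, in euros, under the assumptions stated above."""
    turnover_based = Decimal(global_annual_turnover_eur) * TURNOVER_RATE
    # Assumption: larger undertakings face the higher of the two amounts,
    # while SMEs face the lower of the two.
    pick = min if is_sme else max
    return pick(FIXED_CAP_EUR, turnover_based)


if __name__ == "__main__":
    print(max_fine_eur(2_000_000_000))            # 3% exceeds the fixed cap
    print(max_fine_eur(100_000_000))              # fixed cap exceeds 3%
    print(max_fine_eur(100_000_000, is_sme=True)) # SME: lower amount applies
```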