What Is AI Vendor Risk?

AI vendor risk is the exposure your organisation carries when a third party supplies, hosts, trains, or embeds AI capability inside your products, processes, or decisions. It is broader than classic IT supplier risk because an AI vendor does more than process your data: it can shape the outputs your employees and customers rely on, and its behaviour changes over time as models are retrained and updated.

In most organisations today, AI vendors fall into four overlapping categories:

- Foundation model providers such as OpenAI, Anthropic, Google, Mistral, and Cohere, accessed via API and embedded into internal tooling, agents, or customer facing features.

- AI native SaaS tools such as note takers, coding assistants, customer support copilots, sales enablement tools, and analytics platforms where AI is the product.

- Embedded AI in enterprise software such as AI summarisation in CRM, AI scoring in HR systems, AI review in contract management, or AI triage in service management, where the AI is a new feature in an existing vendor relationship.

- Data labelling and training data suppliers that sit upstream of any model you build, with significant influence over bias, quality, and lawful basis for training.

Each category carries different risks, but they share a common problem: the vendor controls behaviour you are accountable for. Regulators, customers, and insurers increasingly expect you to prove you have actively assessed and overseen that behaviour — not just signed a contract and moved on.

Why Has AI Vendor Risk Become a Boardroom Issue?

Three forces have pushed AI vendor oversight from a procurement task to a board-level concern.

Concentrated dependency. A handful of foundation model providers now sit underneath thousands of AI features across almost every enterprise stack. Model outages, policy changes, pricing changes, and geographic restrictions propagate immediately into your products and customer journeys. That is a concentration risk the board has to own.

Non-deterministic behaviour. Unlike a traditional SaaS vendor whose software does the same thing today as yesterday, an AI vendor can change model weights, system prompts, safety filters, and training data without notice. The output you validated in testing may not be the output your customer sees in production six months later.

Regulatory accountability. ISO 42001, the EU AI Act, sector regulators (FCA, PRA, NHS Digital, Ofcom), and data protection authorities all now place explicit obligations on the deployer of an AI system, not just the builder. Pointing at your vendor is no longer a defence. You have to demonstrate you picked a suitable vendor and kept watching them.

The practical consequence: AI vendor risk belongs on the enterprise risk register, in the audit committee pack, and in the AI policy, not buried in procurement.

What Does ISO 42001 Say About AI Supplier Oversight?

ISO 42001 addresses third party AI relationships directly in Annex A.10, one of nine Annex A control areas. The control area covers suppliers, the allocation of responsibilities between organisations, and customers, because in an AI supply chain you may be all three at once — provider to one party, deployer of another, and customer of a third.

Annex A.10 requires you to:

- Establish a process for identifying AI suppliers and the AI systems they provide to you

- Allocate responsibilities between you and your suppliers clearly and in writing, so there are no gaps in accountability for AI risk, impact, and performance

- Address AI specific considerations in supplier selection, contracting, and ongoing management, not just generic information security terms

- Ensure that customers of your AI systems have the information they need to use them responsibly

Annex B (which is normative, not informative) provides implementation guidance for each Annex A control, including A.10. Alongside Annex A.10, AI vendor oversight also touches A.2 (policies related to AI), A.3 (internal organisation and responsibilities), A.5 (assessing impacts of AI systems), A.7 (data for AI systems), and A.8 (information for interested parties). For the full set, see our Annex A controls reference and the dedicated Annex A.10 Third Party and Customer Relationships page.

Your Statement of Applicability should document how you apply each of these controls to your AI vendor estate, including any exclusions and the justification for them.

How Do You Assess an AI Vendor?

AI vendor due diligence needs to go beyond a security questionnaire. You are assessing not only how the vendor protects your data, but how they build, operate, change, and retire the AI system you are depending on. The framework below covers the nine areas every AI vendor assessment should touch, with a sample of questions to ask and the red flags that should stop a contract.

| Area | Questions to ask | Red flag |
|---|---|---|
| Data training | What data was the model trained on? Was any of our data used for training by default? Can we opt out in writing? What is the lawful basis for training data (consent, legitimate interest, licensing)? | Vendor cannot tell you the training data sources, uses customer data for training by default with no opt out, or relies on scraped data with no lawful basis defence |
| Model transparency | Can you provide a model card or system card? What are the known limitations and failure modes? How is the model versioned? Will we be notified of model swaps or major retraining? | No model documentation, silent model swaps, no versioning, or refusal to describe the model at all (black box with no assurance) |
| Security posture | Is the vendor certified to ISO 27001 and SOC 2? How is customer data segregated? What are the encryption, key management, and access controls around inference traffic and logs? | No recognised certification, shared tenancy with no segregation, prompts and outputs logged in plaintext indefinitely, or no SSO and MFA |
| Privacy & GDPR | Where is data processed and stored? Is a Data Processing Agreement in place? Are there adequate transfer mechanisms for international flows? How are data subject rights handled for inputs and outputs? | No DPA, transfers outside the UK and EEA with no safeguards, no mechanism to support data subject access or erasure requests, or unclear retention of prompts |
| Certifications | Are you certified to ISO 42001? Do you hold ISO 27001 and SOC 2 Type II? When were the last surveillance audits? Can we see the certificates and scope statements? | No ISO 42001 (or no credible roadmap for it), expired certificates, scope statements that exclude the service you are buying, or self attestation only |
| Incident response | How do you define an AI incident? What is your notification SLA to customers? How do you handle model hallucinations, harmful outputs, bias incidents, and security breaches? Can we see a redacted post incident report? | No AI specific incident definition, notification windows longer than 72 hours, no post incident reporting, or inability to show any prior incident handled end to end |
| Change management | How do you manage changes to model weights, system prompts, safety filters, and guardrails? Do we get notice? Can we stay on a pinned version? How are changes tested before rollout? | Rolling updates with no notice, no version pinning option, no pre release testing on enterprise traffic, or no rollback mechanism |
| Subprocessors | Who are your subprocessors, including hosting, model hosting, evaluation, and data labelling? How are they approved and audited? Do we get notice of changes? | Incomplete subprocessor list, opaque use of sub sub processors, no right to object to new subprocessors, or use of subprocessors in adverse jurisdictions |
| Exit plan | How do we get our data back? How long is it retained after termination? Are prompts, outputs, and fine tuning data fully deleted? Is there a portability format for any custom configuration or evaluation data? | No defined exit, retention measured in years rather than days, no export format, or lock in via proprietary evaluation data |

Score each area, weight according to the AI use case risk, and link the result to the vendor record in your supplier register. The goal is a defensible decision, not a perfect score.
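The scoring step can be sketched in a few lines of Python. This is an illustration under stated assumptions rather than a prescribed method: the 0 to 5 scale, the example weights, the 70-point threshold, and the names `AreaResult` and `assess_vendor` are all hypothetical. The one design choice it tries to capture is that any red flag forces an escalation, so a high numeric score cannot mask a showstopper.

```python
from dataclasses import dataclass

# The nine assessment areas from the table above.
AREAS = [
    "training_data", "model_transparency", "security_posture", "privacy_gdpr",
    "certifications", "incident_response", "change_management",
    "subprocessors", "exit_plan",
]


@dataclass
class AreaResult:
    score: int              # hypothetical scale: 0 (no evidence) to 5 (strong evidence)
    red_flag: bool = False  # True if any red flag from the table was observed


def assess_vendor(results: dict[str, AreaResult], weights: dict[str, float]) -> dict:
    """Return a weighted score out of 100, the red-flagged areas, and a decision."""
    missing = [a for a in AREAS if a not in results]
    if missing:
        raise ValueError(f"unscored areas: {missing}")

    # Areas without an explicit weight default to 1.0.
    total_weight = sum(weights.get(a, 1.0) for a in AREAS)
    weighted = sum(results[a].score * weights.get(a, 1.0) for a in AREAS)
    score = round(100 * weighted / (5 * total_weight), 1)

    red_flags = [a for a in AREAS if results[a].red_flag]
    # Assumed policy: a red flag escalates regardless of the numeric score.
    if red_flags:
        decision = "escalate"
    elif score >= 70:  # hypothetical threshold
        decision = "proceed"
    else:
        decision = "review"
    return {"score": score, "red_flags": red_flags, "decision": decision}


# Example: a use case where training data and change management matter most.
results = {area: AreaResult(score=4) for area in AREAS}
results["change_management"] = AreaResult(score=2, red_flag=True)
outcome = assess_vendor(results, {"training_data": 2.0, "change_management": 2.0})
print(outcome)
```

The returned dictionary is the kind of record that could be attached to the vendor entry in the supplier register alongside the evidence behind each score.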

What Contract Terms Should You Require from AI Vendors?

AI vendor contracts need to go beyond standard SaaS terms. Security schedules and Data Processing Agreements handle the data layer, but AI behaviour, change, and accountability need their own clauses. The checklist below is the minimum we would expect in any material AI vendor contract:

- Right to audit. A contractual right to audit the vendor, or rely on independent assurance reports (ISO 42001 certificate, SOC 2 Type II, penetration test summaries) on a defined cadence, covering the specific AI system you are buying.

- Model change notice. Written notice of material changes to model weights, system prompts, safety filters, or guardrails, with a defined notice period (typically 30 to 90 days for enterprise use cases) and a version pinning option for regulated workloads.

- Data usage restrictions. Explicit prohibition on using customer inputs, outputs, fine tuning data, or metadata to train the vendor’s general models without written consent, with data segregation commitments.

- Output accuracy commitments. Representations about intended use, known limitations, and any accuracy or safety benchmarks the vendor publishes, with remedies if the vendor removes documented capabilities.

- Incident notification. A defined AI incident notification SLA (targeting 24 to 72 hours depending on severity) covering security breaches, bias incidents, harmful output events, and prolonged service degradation.

- Intellectual property. Clear allocation of IP in inputs, outputs, and any derived works, with indemnity against third party IP claims arising from model outputs.

- Indemnity and liability. Tailored indemnities for data protection breaches, IP infringement in model outputs, and regulatory fines where the vendor’s conduct is the proximate cause, with liability caps proportionate to the risk of the AI use case.

- Exit and data return. Defined exit process with guaranteed export formats, deletion certificates, and a maximum retention period for residual data post termination (typically 30 to 90 days).

- Subprocessor notice. A list of current subprocessors in the contract, prior written notice of changes, and a right to object on documented risk grounds.

- Certification maintenance. A commitment to maintain stated certifications (ISO 42001, ISO 27001, SOC 2) for the term of the contract, with notice if any certification lapses or scope changes.

These clauses should be risk-tiered. A foundation model provider in a customer-facing decision-making pipeline warrants every clause; a low-risk internal productivity tool can run on a lighter set with monitored renewals.
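One way to make the tiering operational is a simple gap check between the clauses a tier requires and the clauses a contract actually contains. The sketch below is illustrative only: the clause identifiers mirror the checklist above, but the medium and low tier sets and the function name `missing_clauses` are assumptions, and the right mapping depends on your own risk appetite.

```python
# Clause identifiers mirror the ten-item checklist above.
ALL_CLAUSES = {
    "right_to_audit", "model_change_notice", "data_usage_restrictions",
    "output_accuracy_commitments", "incident_notification",
    "intellectual_property", "indemnity_and_liability",
    "exit_and_data_return", "subprocessor_notice", "certification_maintenance",
}

# Assumed mapping: only "high requires every clause" comes from the text;
# the medium and low sets are placeholders to be replaced by your own policy.
REQUIRED_BY_TIER = {
    "high": ALL_CLAUSES,
    "medium": ALL_CLAUSES - {"output_accuracy_commitments", "indemnity_and_liability"},
    "low": {"data_usage_restrictions", "incident_notification", "exit_and_data_return"},
}


def missing_clauses(tier: str, contract_clauses: set[str]) -> list[str]:
    """Return the required clauses that the contract does not contain, sorted by name."""
    if tier not in REQUIRED_BY_TIER:
        raise ValueError(f"unknown risk tier: {tier}")
    return sorted(REQUIRED_BY_TIER[tier] - contract_clauses)


# Example: a low-risk tool whose contract only restricts data usage.
gaps = missing_clauses("low", {"data_usage_restrictions"})
print(gaps)
```

An empty list means the contract meets the tier's minimum; anything else is a negotiation item or a documented risk acceptance.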

How Do You Monitor AI Vendors Post Contract?

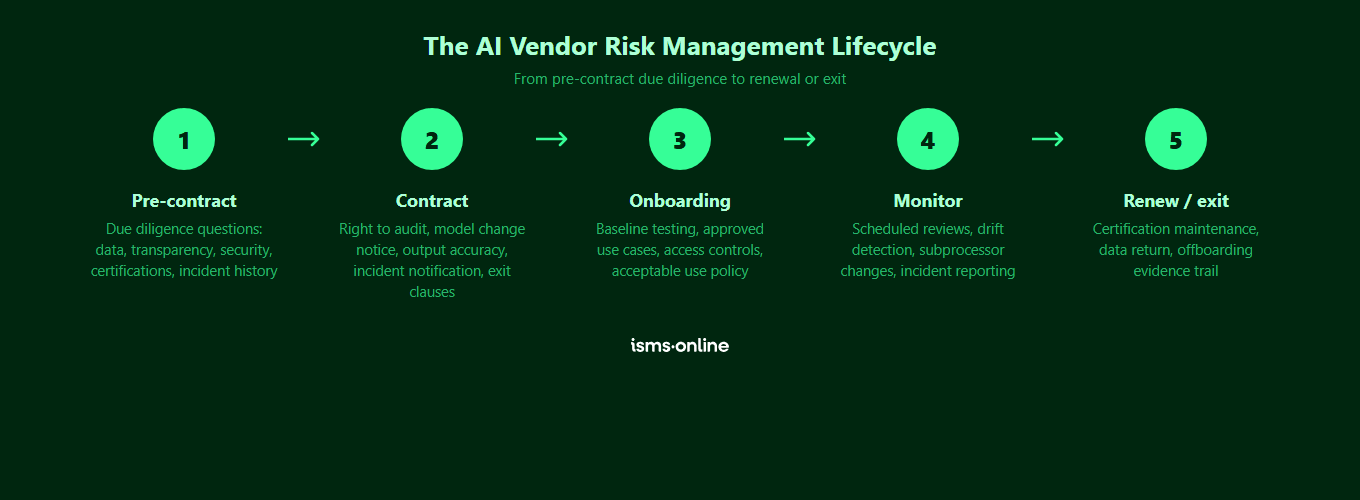

Due diligence at onboarding is necessary but not sufficient. AI vendors change, and so does your use of them. Ongoing monitoring needs to combine scheduled reassessments with event driven triggers.

Scheduled activities that should sit in your AI vendor oversight calendar:

- Annual repeat due diligence for high-risk vendors, biennial for medium-risk vendors, covering the same nine areas as onboarding with a delta review

- Quarterly review of vendor model, subprocessor, and policy change logs against your pinned baseline

- Annual review of the vendor’s ISO 42001, ISO 27001, and SOC 2 certificates and scope statements

- Review of vendor published incident and transparency reports (where available) at each management review cycle

- Re-review of the supplier section of your Statement of Applicability whenever supplier scope materially changes

Event driven triggers that should force an immediate reassessment:

- A vendor notifies you of a material model change, a change of subprocessor, or a policy change

- The vendor suffers a publicly disclosed security, safety, or bias incident

- The vendor loses, changes the scope of, or fails to renew a relied on certification

- You change the AI use case (for example, moving an internal tool into a customer facing workflow or a high risk context under the EU AI Act)

- A new regulation, sector guidance, or enforcement action materially changes your obligations as a deployer

Each monitoring activity should produce evidence linked to the vendor record, the relevant Annex A controls, and the AI risk register entries the vendor contributes to. This is how you turn vendor oversight from a procurement ritual into auditable governance.
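The combination of a calendar and event-driven triggers can be expressed as a single check. The following Python sketch is a simplified illustration, not a description of any particular tool: the trigger names, the three-year interval for low-risk vendors, and the function `reassessment_due` are assumptions, while the annual and biennial intervals follow the schedule above.

```python
from datetime import date, timedelta

# Annual (high) and biennial (medium) follow the schedule above;
# the low-risk interval is an assumption for illustration.
REVIEW_INTERVAL_DAYS = {"high": 365, "medium": 730, "low": 1095}

# Hypothetical identifiers for the event-driven triggers listed above.
TRIGGERS = {
    "material_model_change", "subprocessor_change", "policy_change",
    "public_incident", "certification_change", "use_case_change",
    "new_regulation",
}


def reassessment_due(tier: str, last_assessed: date, today: date,
                     events: tuple[str, ...] = ()) -> tuple[bool, str]:
    """Return (due, reason). Any recognised trigger overrides the calendar."""
    unknown = set(events) - TRIGGERS
    if unknown:
        raise ValueError(f"unknown trigger(s): {sorted(unknown)}")
    if events:
        return True, "event-driven: " + ", ".join(sorted(set(events)))

    next_due = last_assessed + timedelta(days=REVIEW_INTERVAL_DAYS[tier])
    if today >= next_due:
        return True, f"scheduled: due {next_due.isoformat()}"
    return False, f"next scheduled review {next_due.isoformat()}"


# Example: a high-risk vendor last assessed just over a year ago.
due, reason = reassessment_due("high", date(2023, 1, 1), date(2024, 2, 1))
print(due, reason)
```

Storing the returned reason with the vendor record gives each reassessment a traceable cause, whether it came from the calendar or from an event.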

How Does the EU AI Act Change AI Vendor Obligations?

The EU AI Act creates a structured set of obligations that flow down the AI supply chain. If you are a deployer of a high risk AI system, or a provider that embeds a third party model, your vendor choices become compliance choices.

Key downstream implications for AI vendor oversight:

- Provider obligations reach your vendors. General Purpose AI (GPAI) model providers have their own obligations around technical documentation, training data summaries, copyright policy, and (for systemic risk models) adversarial testing, incident reporting, and cybersecurity. You should be asking foundation model providers to evidence how they meet them.

- Deployer obligations reach you. If you use a high risk AI system, you have obligations for human oversight, log retention, monitoring, incident reporting, and (in many cases) a fundamental rights impact assessment. Meeting them requires information from the vendor, which has to be contracted for.

- Information flow down the chain. Providers must give deployers the technical documentation and instructions for use needed to meet their obligations. Your due diligence should verify this material actually exists for any high risk AI component you procure.

- Substantial modification risk. If you fine-tune, wrap, or repurpose a general purpose model to the point of substantial modification, you may become a provider in your own right, with the attendant conformity assessment burden. Vendor contracts need to make clear what modifications are and are not permitted, and what assurances travel with them.

- Prohibited practices. Certain AI uses (including social scoring, untargeted scraping of facial images, and emotion inference in the workplace and in education) are prohibited. Your AI policy and supplier assessment must screen use cases against these prohibitions before contracting, not after.

ISO 42001 and the EU AI Act are complementary. The standard gives you the management system scaffolding; the Act defines the legal obligations that scaffolding has to carry. A well run vendor oversight process serves both.

How ISMS.online Runs AI Vendor Risk Management

ISMS.online gives AI vendor oversight a structured home inside your wider AI management system. Rather than scatter vendor data across procurement sheets, security questionnaires, legal folders, and compliance trackers, the platform consolidates the lifecycle in one connected workspace:

- Supplier register with AI specific fields. Each AI vendor has a record with vendor type (foundation model, AI SaaS, embedded AI, data labelling), use cases, risk tier, certifications, subprocessors, and relevant Annex A control links.

- AI due diligence questionnaires. Pre built templates covering the nine assessment areas, with scoring, sign off, and stored evidence attached to the vendor record.

- Contract and clause tracking. Key AI clauses (audit right, model change notice, data use, incident SLA, exit) tracked as fields on the vendor record, so you can report on your AI estate at a glance.

- Ongoing monitoring workflows. Scheduled reassessments, certificate expiry alerts, change log reviews, and trigger based reassessments, all linked to the vendor record and to the AI risk register.

- Linked AI risk and impact assessments. Vendor entries connect directly to AI risk register entries (Clause 6.1.2) and AI system impact assessments (Clause 6.1.4), so vendor risk is treated as part of the overall AI risk picture, not a parallel universe.

- Audit evidence at control level. Every assessment, review, and reassessment produces evidence linked to the specific Annex A control it supports, ready for surveillance audits.

The practical outcome: when an auditor asks how you meet Annex A.10 for your AI supply chain, the answer is a single drill down — vendor, assessment, contract, monitoring history, evidence — not a week of screenshots and spreadsheets.

Why Choose ISMS.online for AI Vendor Risk?

ISMS.online is built for AI governance end to end, so vendor oversight is a first class part of the platform rather than a bolt on. Here is what you get:

- AI specific supplier register. Purpose built fields for AI vendor type, use cases, risk tier, certifications, subprocessors, and model versions, not a generic supplier list repurposed from an IT asset tool.

- Pre built AI due diligence templates. Questionnaires aligned to ISO 42001 Annex A.10, Annex B guidance, and EU AI Act downstream obligations, so teams start from a standards aligned baseline rather than writing questions from scratch.

- Integrated with the AI risk register. Vendor risk links directly to AI risks (Clause 6.1.2) and AI system impact assessments (Clause 6.1.4), with scoring, treatment, and review cycles in one place.

- Live Statement of Applicability. The supplier section of your SoA stays current as vendors, controls, and justifications change, not frozen in a Word document.

- Ongoing monitoring built in. Scheduled reassessments, certificate expiry alerts, and event triggered reviews keep vendor oversight active across the full contract lifecycle.

- Multi-standard reuse. Vendor records shared across ISO 42001 and the supplier controls of your existing ISO management systems, so you run one supplier programme, not several. For the overlap see ISO 42001 vs ISO 27001.

- Assured Results Method. Proven implementation approach, adoption support, and live help so AI vendor oversight is up and running in weeks, not quarters.

Whether you are starting from zero or bringing an existing third party risk programme up to AI specific standards, ISMS.online gives you the tooling to run AI vendor risk management in line with ISO 42001 and the EU AI Act. For full implementation context, see our implementation guide, or head back to the ISO 42001 hub.

Ready to see the platform in action? Book a demo.

FAQs

What is AI vendor risk management?

AI vendor risk management is the process of identifying, assessing, contracting, and monitoring third parties that supply AI capability to your organisation. It covers foundation model providers, AI native SaaS tools, embedded AI features in enterprise software, and data labelling or training data suppliers. It extends classic third party risk with AI specific considerations such as training data, model transparency, non deterministic behaviour, and change management of model weights and system prompts.

Does ISO 42001 require AI vendor assessments?

Yes. Annex A.10 of ISO 42001 addresses third party and customer relationships directly, requiring organisations to identify AI suppliers, allocate responsibilities, and manage AI specific considerations across the supplier lifecycle. Annex B (normative) provides implementation guidance. Your Statement of Applicability should document how you apply these controls to your AI vendor estate, with any exclusions justified.

What are the biggest red flags in an AI vendor assessment?

The most common showstoppers are: training on customer data by default with no opt out, no model documentation or version control, no recognised certifications (ISO 42001, ISO 27001, SOC 2) or expired ones, no AI specific incident response or notification SLA, silent model changes with no notice, opaque subprocessor arrangements, and no defined exit process. Any one of these should trigger a risk decision at senior level before contract signature.

How often should we reassess AI vendors?

Annual reassessment is a reasonable baseline for high risk AI vendors, with biennial review for medium risk vendors and lighter touch monitoring for low risk ones. On top of the schedule, event driven triggers — material model or subprocessor change, publicly disclosed incident, certification loss, change of use case, new regulation — should force an immediate reassessment regardless of the calendar.

Does the EU AI Act affect our AI vendor contracts?

Yes. The EU AI Act places obligations on providers, deployers, and importers of AI systems, and information has to flow down the chain for those obligations to be met. Contracts with foundation model providers and downstream AI vendors should require the technical documentation, instructions for use, transparency information, and incident notification needed for you to meet your deployer or provider obligations. Fine tuning or substantially modifying a general purpose model can also turn a deployer into a provider, which has to be addressed contractually.

Is an ISO 27001 certified vendor enough for AI use cases?

No. ISO 27001 covers information security management and is a strong baseline, but it does not address AI specific risks such as training data provenance, model transparency, impact on affected individuals, bias, or model change management. For material AI use cases you should also look for ISO 42001 (or a credible roadmap toward it), SOC 2 Type II covering the AI service in scope, and explicit AI clauses in the contract that go beyond information security terms.

Can ISMS.online manage AI vendor risk alongside general supplier risk?

Yes. ISMS.online is a multi-standard platform, so AI vendors sit in the same supplier register as your wider third parties, with AI specific fields, due diligence templates, and monitoring workflows layered on top. That means one supplier programme, one set of evidence, and one audit trail — covering ISO 42001 Annex A.10 and the supplier controls of your existing management systems in a single view.