What Is an AI Audit?

An AI audit is a systematic, independent, and documented review of how an organisation governs, develops, deploys, and uses AI systems. In the context of ISO 42001, it is the mechanism by which you verify that your AI Management System (AIMS) conforms to the standard and to your own documented requirements, and that it is effectively implemented and maintained.

AI audits differ from traditional information security audits in three important ways. First, they cover AI-specific artefacts that do not exist in an ISO 27001 programme, such as AI system impact assessments, model cards, training data provenance records, and life cycle validation reports. Second, they assess ethical and societal considerations, including fairness, transparency, and accountability, not just confidentiality, integrity, and availability. Third, they follow the life cycle of individual AI systems from objective setting through to decommissioning, rather than auditing static controls in isolation.

An AI audit can be internal (first party), supplier facing (second party), or certification or surveillance (third party, carried out by an accredited body). This page focuses on the internal audit, because that is where most teams operate day to day and where a structured checklist adds the most value. For broader context on the audit cycle, see our guide to the ISO 42001 audit process.

Why Use an AI Audit Checklist?

Auditing an AI Management System without a checklist is how findings get missed, evidence gets forgotten, and management reviews turn into arguments over scope. A practical checklist gives you four things:

- Consistent scope. Every audit covers the same areas in the same order, so year on year comparison is meaningful and surveillance auditors see a mature programme.

- Defensible evidence. For each control area you know in advance what evidence to request, which means auditees can prepare and you spend time assessing rather than chasing.

- Reproducible test procedures. A walkthrough, an evidence inspection, and a sample test produce results another auditor can reproduce. That is what an external auditor expects to see.

- Clear pass or fail criteria. Without criteria, findings become opinions. A checklist turns each item into a yes or no question backed by evidence.

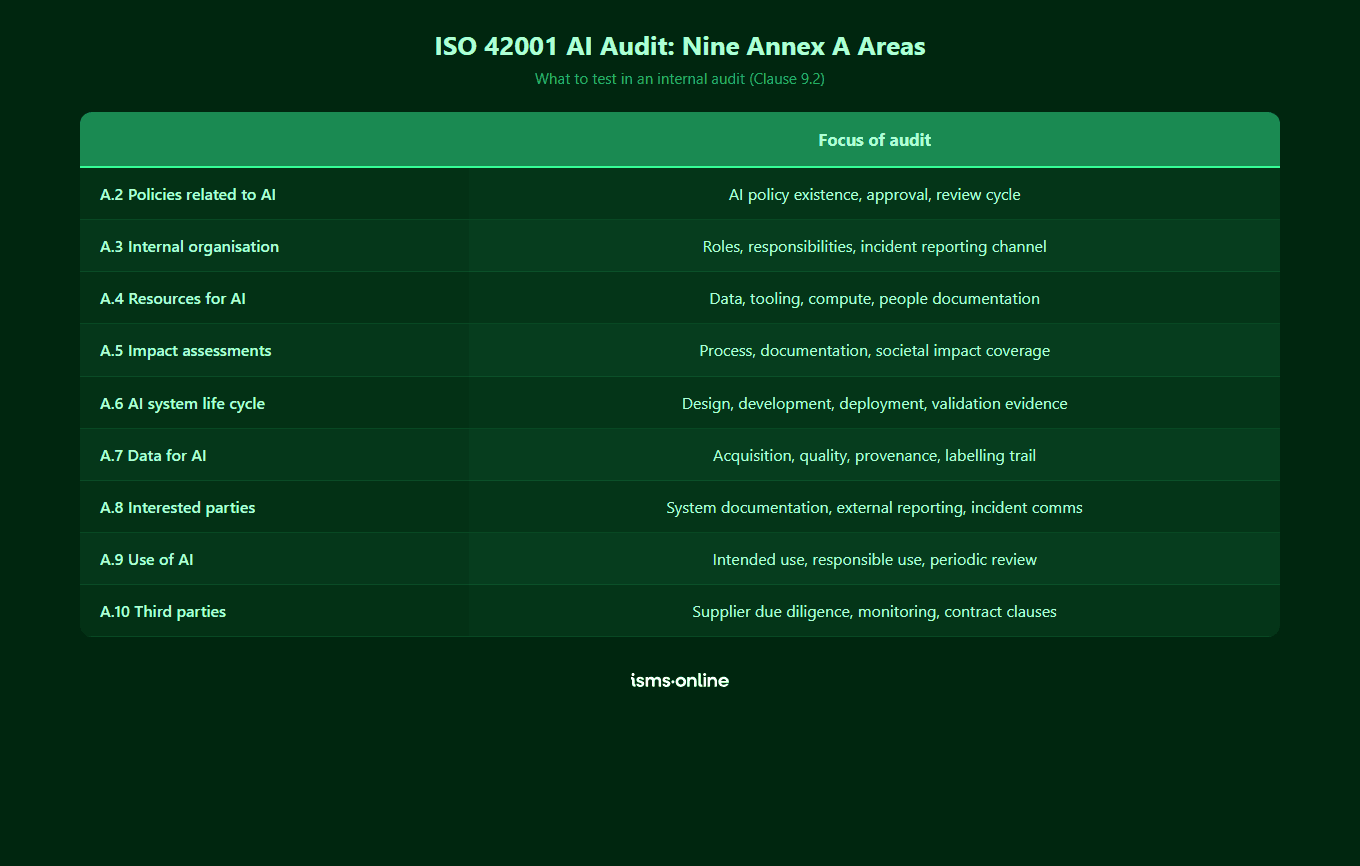

The checklist in this guide is scoped to the internal audit requirements in Clause 9.2 and walks through the nine Annex A control areas (A.2 through A.10). It is designed to be printed, worked through, and attached to the audit report as evidence that the audit was planned and executed to a defined scope.

How Does ISO 42001 Define Internal Audit Requirements?

Clause 9.2 of ISO 42001 requires organisations to conduct internal audits at planned intervals to provide information on whether the AIMS conforms to both the organisation’s own requirements and the requirements of the standard, and whether it is effectively implemented and maintained. That wording matters. Auditors are not just checking that policies exist. They are checking that the management system works in practice.

The clause also requires you to:

- Plan, establish, implement, and maintain an audit programme, including frequency, methods, responsibilities, planning requirements, and reporting

- Define audit criteria and scope for each audit

- Select auditors and conduct audits to ensure objectivity and impartiality

- Report results of audits to relevant management

- Retain documented information as evidence of the audit programme and its results

Annex B, the normative implementation guidance for the Annex A controls, adds further detail on what each control should look like in practice. When you combine Clause 9.2 with the Annex A control areas, you get the structure of the checklist below.

AI Audit Checklist: The 9 Areas to Cover

Annex A of ISO 42001 is organised into nine control areas. The table below summarises the checklist items, typical evidence, and test procedures for each. Use it as the backbone of your internal audit. For each row, the pass criterion is that the evidence is available, current, approved, and consistent with the intended control.

| Annex A area | Checklist items | Typical evidence | Test procedure |
|---|---|---|---|
| A.2 Policies related to AI | AI policy exists and is approved; aligned with organisational policies; reviewed at defined intervals; communicated to staff; covers objectives for responsible AI | Approved AI policy, review log, communication records, acknowledgement or attestation register | Walkthrough with policy owner, inspect approval and review history, sample attestations from a cross section of roles |
| A.3 Internal organisation | AI roles and responsibilities documented and assigned; reporting lines for AI concerns defined; governance forum meets and is minuted | Organisation chart, RACI for AI decisions, terms of reference for AI governance forum, meeting minutes, reporting of concerns log | Interview AI governance lead, sample meeting minutes over the last 12 months, trace a reported concern end to end |
| A.4 Resources for AI systems | Resources for AI systems are identified and documented (data, tooling, computing, human expertise); adequacy reviewed; documentation kept current | Resource register, competence records, tooling and compute inventory, resource adequacy review output | Sample one AI system, trace its resource documentation, verify competence records exist for key roles, confirm review evidence |
| A.5 Assessing impacts of AI systems | Impact assessment process defined; applied to every AI system in scope; covers individuals, groups, and society; documented and reviewed | AI system impact assessment register, completed assessments, review and approval records, evidence of action on material impacts | Sample two AI systems, inspect their completed impact assessments, confirm scope covers societal impact, trace any actions to closure |
| A.6 AI system life cycle | Objectives, design, development, verification, validation, deployment, operation, monitoring, and retirement controls in place; evidence captured at each stage | Life cycle documentation, design records, test plans, validation reports, deployment approvals, monitoring logs, retirement records | Pick one AI system and walk through the full life cycle, inspecting evidence at each stage; confirm validation was independent of development |
| A.7 Data for AI systems | Data acquisition, quality, preparation, and provenance controls defined and applied; data sources documented; quality measured; provenance retained | Data source inventory, quality criteria, data preparation records, provenance documentation, lawful basis records where relevant | Sample a training dataset for one in scope system, inspect provenance, verify quality checks were performed and documented |
| A.8 Information for interested parties | System documentation provided to users; external reporting mechanisms defined; incident communication plans in place | User facing documentation, release notes, external reporting records, incident communication templates and logs | Inspect user documentation for one deployed system, sample any incident communications, verify external reporting obligations are met |
| A.9 Use of AI systems | Processes for responsible use defined; intended use documented; objectives for use reviewed; out of scope use prevented or detected | Use case register, intended use statements, acceptable use policy, monitoring records, review outputs | Sample two use cases, compare actual usage against intended use, inspect monitoring and review evidence |
| A.10 Third party and customer relationships | Suppliers of AI systems and components assessed; responsibilities between parties allocated; customer obligations documented | Supplier register with AI specific due diligence, contracts, allocation of responsibilities matrix, customer facing obligations documentation | Sample two AI suppliers, inspect due diligence records and contracts, verify responsibilities are clearly allocated in writing |
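
The pass criterion for each row (evidence that is available, current, approved, and consistent with the intended control) can be written down as an explicit check, which helps two auditors reach the same answer. The following is a minimal sketch rather than a prescribed method: the `EvidenceItem` structure, the `row_passes` function, and the 365 day currency window are all assumptions made for illustration, and the evidence entries are invented.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class EvidenceItem:
    """One piece of evidence offered against a checklist row (hypothetical structure)."""
    name: str
    available: bool      # the auditor could actually obtain it
    last_reviewed: date  # used to judge whether it is current
    approved: bool       # signed off at the appropriate level
    consistent: bool     # auditor's judgement: matches the intended control


def row_passes(evidence: list[EvidenceItem], as_of: date,
               max_age_days: int = 365) -> bool:
    """Apply the pass criterion: available, current, approved, and consistent.

    max_age_days is an assumed review cycle; substitute the interval
    defined in your own AIMS documentation.
    """
    if not evidence:
        return False  # a row with no evidence at all cannot pass
    cutoff = as_of - timedelta(days=max_age_days)
    return all(
        e.available and e.approved and e.consistent and e.last_reviewed >= cutoff
        for e in evidence
    )


# Invented example for the A.2 row.
audit_date = date(2025, 6, 30)
a2_evidence = [
    EvidenceItem("Approved AI policy", True, date(2025, 1, 15), True, True),
    EvidenceItem("Policy review log", True, date(2023, 11, 1), True, True),  # stale
]
print(row_passes(a2_evidence, audit_date))  # False: the review log is out of date
```

The `consistent` flag remains an auditor judgement; the sketch only makes sure that judgement is recorded alongside the mechanical checks.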

A.2 Policies Related to AI

Start at the top of the house. Confirm that an AI policy exists, is approved at the right level, is reviewed on a defined cycle, and is communicated and acknowledged by staff in relevant roles. The policy should set direction for responsible AI and should be consistent with other organisational policies, particularly information security and data protection. Findings here often relate to reviews being overdue, acknowledgement records being incomplete, or the policy being silent on a key area such as third party AI tools.

A.3 Internal Organisation

Audit the governance structure. Who owns AI decisions? Where are AI concerns reported and how are they triaged? Is there a governance forum with clear terms of reference and a quorum? Sample meeting minutes for substance, not just attendance. A common finding is a well populated RACI that is not reflected in actual decision making, which is straightforward to detect by tracing a real decision end to end.

A.4 Resources for AI Systems

Confirm that the resources needed to develop, operate, and govern AI systems are identified, documented, and adequate. Resources include data, tooling, computing infrastructure, and human expertise. The competence element is often weakest. Look for role specific competence criteria and evidence that people in those roles meet them, rather than generic training records.

A.5 Assessing Impacts of AI Systems

AI system impact assessments are one of the defining features of ISO 42001 and a regular source of findings. The assessment should cover impacts on individuals, groups, and society, and should be completed before deployment and reviewed on change. See our deeper guide on AI impact assessments for practical examples. Sample two in scope AI systems and trace the full impact assessment to approval and action closure.

A.6 AI System Life Cycle

This is the largest control area and rewards a life cycle walkthrough. Pick one production AI system and follow it from objective setting through design, development, verification, validation, deployment, operation, monitoring, and retirement. The critical test is whether validation was independent of development and whether monitoring has detected any drift, incidents, or out of scope use. The AI system life cycle guide sets out the full control list.

A.7 Data for AI Systems

Data is where AI systems succeed or fail. Audit acquisition, quality, preparation, and provenance controls. Sample a training dataset and verify that its sources, licences, quality checks, and lawful basis (where personal data is involved) are documented. Expect to find issues around provenance for older systems and around data quality measurement being informal rather than recorded.

A.8 Information for Interested Parties

Confirm that users, customers, regulators, and other interested parties receive the information they need. This includes system documentation at deployment, incident communications, and any external reporting obligations. Sample a recent incident or material change and inspect the communications that followed.

A.9 Use of AI Systems

The intended use of every AI system should be documented, and actual use should be monitored against it. Organisations that only develop AI, and organisations that only deploy third party AI, both need this control area. Sample two use cases, compare actual to intended use, and inspect the monitoring that detects divergence.

A.10 Third Party and Customer Relationships

Audit how AI suppliers are assessed, how responsibilities are allocated between you and them, and how customer obligations are documented. For foundation model providers and AI API vendors specifically, verify that due diligence captured model provenance, evaluation data, and incident notification commitments. Findings here often relate to contracts that predate the AI programme and have not been updated to reflect allocated responsibilities.

What Evidence Should You Collect During an AI Audit?

Evidence is what turns an opinion into a finding. For every checklist item, collect at least one of the following and attach it to the audit working papers:

- Documentary evidence. Policies, procedures, registers, assessment records, meeting minutes, approval records, and training records.

- System generated evidence. Access logs, monitoring outputs, model performance dashboards, drift detection alerts, and incident tickets.

- Interview notes. Walkthroughs with control owners, governance forum members, data scientists, and product managers, with the date, attendees, and key points recorded.

- Observation evidence. Screenshots, screen recordings, or annotated extracts showing the control in operation, such as an approval workflow rejecting an unapproved change.

- Sample test results. Where you have tested a sample (for example, ten AI system impact assessments out of fifteen), record the sample size, selection method, and pass or fail outcome per item.

Link every piece of evidence back to the control it supports and to the audit finding (or lack of finding) it drives. This traceability is exactly what an external auditor will want to see in your records and is one of the most common things to get wrong on paper based audits. For the full picture of what the standard requires in writing, see our guide to documentation required under ISO 42001.
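
For sample test results, recording the selection method is easier when the selection itself is reproducible. The sketch below assumes a seeded random draw is an acceptable selection method for your audit programme; `SampleTest`, `draw_sample`, the `AIIA-` identifiers, and the seed value are hypothetical names and values chosen for illustration.

```python
import random
from dataclasses import dataclass


@dataclass
class SampleTest:
    """Working-paper record for one sample test (hypothetical structure)."""
    control: str
    population_size: int
    selection_method: str
    results: dict[str, bool]  # item ID -> True for pass, False for fail

    @property
    def sample_size(self) -> int:
        return len(self.results)

    @property
    def failures(self) -> list[str]:
        return sorted(item for item, ok in self.results.items() if not ok)


def draw_sample(population: list[str], size: int, seed: int) -> list[str]:
    """Seeded random selection, so another auditor can reproduce the same sample."""
    return sorted(random.Random(seed).sample(population, size))


# Fifteen invented impact assessment IDs, of which ten are sampled.
population = [f"AIIA-{n:02d}" for n in range(1, 16)]
sample = draw_sample(population, size=10, seed=2025)

record = SampleTest(
    control="A.5 Assessing impacts of AI systems",
    population_size=len(population),
    selection_method="seeded random sample (seed=2025)",
    results={item: True for item in sample},
)
record.results[sample[0]] = False  # illustrative only: pretend one sampled item failed
print(record.sample_size, "of", record.population_size,
      "tested; failures:", record.failures)
```

Because the seed is written into the selection method, anyone re-running `draw_sample` with the same population and seed gets the same ten items.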

How Do You Document Findings and Corrective Actions?

A finding is a documented statement of fact, supported by evidence, evaluated against a criterion. Good findings have four parts: condition (what was observed), criterion (what was required, for example the clause or control), cause (why it happened), and consequence (what it means for the AIMS). Findings are then classified, typically as:

- Major nonconformity. A total breakdown of a required control, or multiple related minor nonconformities pointing to a systemic issue. If raised by an external auditor, these will block certification until they are resolved.

- Minor nonconformity. A single lapse in an otherwise working control. Must be addressed but rarely blocks certification on its own.

- Observation or opportunity for improvement. Not a breach of requirements, but something worth addressing before it becomes a finding.

Every nonconformity should trigger a corrective action that follows Clause 10. That means correcting the nonconformity, determining the cause, determining whether similar issues exist elsewhere, implementing the action, verifying effectiveness, and retaining documented information. Corrective action records should link back to the audit finding and forward to any updates to risks, controls, policies, or the Statement of Applicability.

A useful findings template has columns for: finding ID, audit reference, Annex A control or clause, condition, criterion, cause, consequence, classification, owner, due date, corrective action, verification of effectiveness, and closure date. Drop this into your working papers or, better, into a platform that does the tracking for you.
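
If you keep findings in a script or spreadsheet export rather than a platform, the template columns above translate directly into a record type. This is a sketch under stated assumptions: the `Finding` and `Classification` names are hypothetical, the example finding is invented, and the rule that observations can be closed without a corrective action is an assumption about local practice rather than something the standard dictates.

```python
from dataclasses import dataclass, fields
from datetime import date
from enum import Enum
from typing import Optional


class Classification(Enum):
    MAJOR = "Major nonconformity"
    MINOR = "Minor nonconformity"
    OBSERVATION = "Observation or opportunity for improvement"


@dataclass
class Finding:
    """One row of the findings template (hypothetical structure)."""
    finding_id: str
    audit_reference: str
    control_or_clause: str
    condition: str      # what was observed
    criterion: str      # what was required
    cause: str          # why it happened
    consequence: str    # what it means for the AIMS
    classification: Classification
    owner: str
    due_date: date
    corrective_action: str = ""
    verification_of_effectiveness: str = ""
    closure_date: Optional[date] = None

    def can_close(self) -> bool:
        """Nonconformities need an action and verified effectiveness before closure.

        Assumption: observations may be closed without a corrective action.
        """
        if self.classification is Classification.OBSERVATION:
            return True
        return bool(self.corrective_action and self.verification_of_effectiveness)


# Invented example finding.
finding = Finding(
    finding_id="F-001",
    audit_reference="IA-2025-01",
    control_or_clause="A.2",
    condition="AI policy review is four months overdue",
    criterion="AI policy is reviewed at defined intervals",
    cause="No named owner for the review cycle",
    consequence="Policy may not reflect current third party AI tool usage",
    classification=Classification.MINOR,
    owner="Head of Risk",
    due_date=date(2025, 9, 30),
)

columns = [f.name for f in fields(Finding)]  # the template's column headers
print(len(columns), finding.can_close())
```

Once `corrective_action` and `verification_of_effectiveness` are filled in, `can_close()` returns `True` and the closure date can be set.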

How ISMS.online Simplifies AI Audits

ISMS.online gives you the audit checklist, evidence library, findings tracker, and corrective action workflow in one place, pre-mapped to ISO 42001.

- Pre-built audit programme. Internal audit plans aligned to Clause 9.2 with audit schedules, scope definition, and auditor assignment built in.

- Checklist templates per Annex A area. Every checklist item is already mapped to the relevant control, so auditors spend time assessing rather than writing templates.

- Linked evidence at control level. Evidence captured against Annex A controls is visible directly from the audit checklist, removing the scavenger hunt.

- Findings and corrective actions in one workflow. Nonconformities raised during the audit automatically create corrective actions with owners, due dates, verification steps, and closure tracking per Clause 10.

- Management review inputs generated automatically. Audit results, nonconformities, and actions flow straight into the Clause 9.3 management review pack.

- Multi-standard reuse. Evidence collected for ISO 27001 internal audits is reusable against the relevant ISO 42001 controls, because it all lives in the same platform.

The result is that an audit that would have taken two weeks of spreadsheet wrangling and email chasing runs as a managed workflow inside a single tool, with the evidence trail ready for your external auditor.

Why Choose ISMS.online for AI Audit Management?

ISMS.online is built from the ground up for ISO 42001, including the full internal audit cycle. Here is what you get when you run AI audits on the platform:

- A ready to use AI audit checklist. Pre-mapped to Clause 9.2 and every Annex A control area, so your first audit does not start with a blank template.

- Integrated evidence library. Policies, risks, impact assessments, and control evidence are linked to the controls they support, so auditors open an item and see the proof.

- Findings and actions in one place. Nonconformities raised during the audit create corrective actions with owners, due dates, and verification of effectiveness checks, aligned to Clause 10.

- Management review ready. Audit outputs, nonconformities, and corrective actions feed directly into the Clause 9.3 management review pack, removing the end of year data gathering exercise.

- Shared with ISO 27001. A single audit programme covering ISO 42001 and ISO 27001, using one set of evidence, one risk register, and one Statement of Applicability builder.

- Assured Results Method. Proven implementation and audit approach that has helped hundreds of organisations achieve certification first time, with live human support throughout.

Whether you are running your first internal audit, preparing for a Stage 2 certification audit, or managing annual surveillance, ISMS.online keeps the programme structured and the evidence defensible. For a broader readiness check, run a gap analysis first, or work through our full ISO 42001 compliance checklist.

Ready to see the platform in action? Book a demo to see how ISMS.online can power your AI audit programme.

FAQs

What is an AI audit checklist?

An AI audit checklist is a structured list of items that an internal auditor works through to assess whether an organisation’s AI Management System conforms to ISO 42001 and is effectively implemented. A good checklist covers Clause 9.2 audit requirements and all nine Annex A control areas (A.2 through A.10), with evidence expectations and test procedures defined for each item so findings are consistent and defensible.

How often should I run an AI internal audit?

ISO 42001 Clause 9.2 requires internal audits at planned intervals, without prescribing a specific frequency. In practice, most organisations run a full AIMS audit annually, with additional focused audits on higher risk AI systems or control areas at least every six months. Audit frequency should be risk based, so a high impact generative AI system in production warrants more frequent review than an internal productivity tool.

Who can conduct an ISO 42001 internal audit?

Internal auditors must be objective and impartial, which means they cannot audit work they are responsible for. Many organisations use a combination of trained internal staff from outside the AI function, a central assurance team, or an independent third party acting as a first party auditor on their behalf. What matters is that the auditor is competent to assess AI specific controls and is free from conflicts of interest relative to the areas being audited.
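
The conflict of interest test can be made mechanical when assigning auditors. The sketch below assumes you maintain a mapping from each candidate auditor to the Annex A areas they are responsible for; `eligible_auditors` and the names in the example are hypothetical. It checks impartiality only, so competence to assess AI specific controls still needs a separate judgement.

```python
def eligible_auditors(candidates: dict[str, set[str]],
                      audit_scope: set[str]) -> list[str]:
    """Return candidates who hold no responsibility for any area in scope.

    candidates maps an auditor's name to the control areas they own or
    operate (an assumed register; the source does not prescribe one).
    """
    return sorted(
        name for name, owned in candidates.items()
        if not (owned & audit_scope)  # any overlap is a conflict of interest
    )


# Invented example: three candidates, audit scoped to A.5 through A.7.
candidates = {
    "Asha": {"A.6", "A.7"},  # leads model development and data preparation
    "Ben": {"A.10"},         # manages supplier contracts
    "Chen": set(),           # central assurance team, owns no AI controls
}
scope = {"A.5", "A.6", "A.7"}
print(eligible_auditors(candidates, scope))  # ['Ben', 'Chen']
```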

What is the difference between an AI audit and an AI impact assessment?

An AI system impact assessment (Annex A.5) evaluates the potential impacts of an AI system on individuals, groups, and society before deployment and on change. An AI audit (Clause 9.2) evaluates whether the management system that governs AI, including the impact assessment process itself, conforms to requirements and works in practice. An audit will typically sample impact assessments as evidence, but it also covers policies, life cycle controls, data governance, suppliers, and every other area of the AIMS.

Does the internal audit need to cover every AI system every time?

No. The audit programme should ensure that over a defined cycle (typically one to three years) every part of the AIMS and every in scope AI system is audited, but individual audits can be scoped to a subset. For most organisations, the annual audit samples AI systems based on risk and materiality, so the highest impact systems are audited most often and lower risk ones are covered on rotation. The audit programme itself is the documented evidence of that plan.
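
One way to express that rotation is a simple plan in which high risk systems are audited every year and the rest are spread across the cycle. This is a sketch of one possible scheme, not a requirement of the standard: `build_rotation`, the risk labels, the three year cycle, and the system names are all assumptions for illustration.

```python
def build_rotation(systems: dict[str, str],
                   cycle_years: int = 3) -> dict[int, list[str]]:
    """Plan which AI systems each annual audit samples.

    systems maps a system name to a risk label. Systems labelled "high"
    are audited every year; all others are spread round-robin so each
    is covered once within the cycle.
    """
    plan: dict[int, list[str]] = {year: [] for year in range(1, cycle_years + 1)}
    high = sorted(name for name, risk in systems.items() if risk == "high")
    rotating = sorted(name for name, risk in systems.items() if risk != "high")

    for year in plan:
        plan[year].extend(high)
    for index, name in enumerate(rotating):
        plan[index % cycle_years + 1].append(name)
    return plan


# Invented inventory of in scope systems.
systems = {
    "credit-scoring-model": "high",
    "support-chatbot": "medium",
    "meeting-summariser": "low",
    "doc-search": "low",
}
plan = build_rotation(systems)
for year, names in plan.items():
    print(f"Year {year}: {', '.join(names)}")
```

A real programme would also reschedule a system after a material change or incident; the sketch covers only the baseline rotation.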

How do AI audit findings link to corrective actions?

Every nonconformity raised during an audit should trigger a Clause 10 corrective action. That means correcting the issue, determining the root cause, considering whether the same issue exists elsewhere, implementing the action, verifying effectiveness, and retaining documented information. In ISMS.online, findings raised during the audit automatically create corrective actions with owners and due dates, and verification of effectiveness is tracked through to closure so nothing drifts.

Can the same audit cover ISO 42001 and ISO 27001?

Yes. Both standards follow the Annex SL high level structure and share clauses around leadership, planning, support, operation, evaluation, and improvement. Annex D of ISO 42001 discusses integrating the AIMS with other management system standards, including ISO 27001. A combined audit programme is more efficient, avoids duplicated evidence gathering, and is supported by multi standard platforms such as ISMS.online, which use a single risk register, evidence library, and audit workflow across both standards.