What is AI Governance?

AI governance is the system of policies, people, processes and controls that an organisation uses to make sure its artificial intelligence is developed, deployed and used in a responsible, safe and accountable way. Think of it as the operating system for responsible AI: the structure that sits around every model, dataset, pipeline and use case, so that AI outcomes align with the organisation’s values, legal obligations and risk appetite.

At a practical level, AI governance answers questions like:

- Who is accountable for each AI system in production, and who signs off material changes?

- What policies and standards apply when we build, buy or fine tune an AI model?

- How do we assess and treat risks that are specific to AI, such as bias, hallucination, model drift and unsafe behaviour?

- How do we document the intended use, limitations and impact of each AI system?

- How do we prove to regulators, auditors and customers that our AI is trustworthy?

Information security governance answers the same questions for data. Privacy governance answers them for personal information. AI governance does the same job for AI systems, and because AI introduces new risks (opacity, autonomy, probabilistic outputs, rapid change), it needs its own dedicated structure. That is exactly what ISO 42001 provides as the first international AI management system standard.

Why Does AI Governance Matter Now?

AI governance was a niche topic five years ago. Today it is a board level concern. Four forces are driving the shift:

- Regulation has arrived. The EU AI Act is now in force, with tiered obligations based on system risk. The UK, US, Canada, Singapore and others are developing their own regimes. Sector regulators (financial services, healthcare, employment) are adding AI specific expectations on top.

- Customer procurement has caught up. Enterprise buyers ask for AI governance evidence in RFPs and security questionnaires. “Do you have an AI policy, AI risk assessment and an accountable owner?” is the new “Are you ISO 27001 certified?”.

- The risk profile has grown. Model failures now cause real commercial, legal and reputational damage. Bias in automated decisions, leakage through generative tools, and unsafe agent behaviour all sit on the board agenda.

- Trust is a competitive asset. The organisations that can explain how their AI works, where it is used and how it is controlled win more deals and face fewer objections. Those that cannot tend to stall.

AI governance turns these pressures into a structured programme rather than a reactive scramble. Done well, it is not a brake on AI adoption. It is the seatbelt that lets you go faster with confidence.

What Are the Core Principles of AI Governance?

Every credible framework converges on a similar set of principles. Many trace back to the OECD AI Principles (2019) and are reflected throughout ISO 42001, the NIST AI Risk Management Framework and the EU AI Act. Eight principles form the common core:

- Accountability. A named human is responsible for each AI system and for the outcomes it produces. Accountability cannot be delegated to the model itself.

- Transparency. People affected by AI outcomes should understand when AI is being used, what it is doing, and its limitations. This extends to model documentation, intended use, data sources and known risks.

- Fairness. AI systems should not produce unjustified discriminatory outcomes. Bias must be actively assessed, measured and mitigated across the life cycle.

- Safety. AI systems should operate reliably and not cause harm. This includes robustness to unexpected inputs, secure failure modes and continuous monitoring.

- Privacy. Personal data used by AI must be protected in line with data protection law. Training data, prompts and outputs are all in scope.

- Human oversight. Humans must be able to intervene, override or disable AI systems, especially where decisions materially affect individuals.

- Inclusivity. AI systems should serve a diverse user base and be designed with accessibility and representation in mind.

- Robustness. AI systems should be resilient to error, attack and drift, with ongoing validation and performance monitoring.

These are not aspirational bullet points. Each principle maps to concrete requirements in the major frameworks, and each becomes an auditable control once you operationalise it.

What Does AI Governance Actually Cover?

AI governance is broader than model risk management or MLOps. It covers the full life cycle of an AI system, from the decision to build or buy through to retirement. A mature programme addresses seven layers:

- Strategy and policy. An AI strategy, an AI policy, acceptable use guidelines, and supporting topic policies (data, security, privacy, ethics).

- Roles and accountability. Clear ownership at board, executive, product and engineering level, with a named AI governance lead and an AI ethics or review board for material decisions.

- Risk and impact assessment. A process for identifying AI specific risks (bias, hallucination, misuse, drift) and for assessing the impact of each AI system on individuals, groups and society.

- Life cycle controls. Requirements and guardrails at every stage: objective setting, data sourcing, design, development, validation, deployment, operation, monitoring, change management and retirement.

- Documentation and transparency. Model cards, system cards, data sheets, intended use statements, user notices and records of decisions.

- Third party management. Controls for AI vendors, foundation models and hosted services, including contract terms, due diligence and ongoing assurance.

- Assurance and audit. Internal and external review, monitoring, metrics, management review and continual improvement.

Most organisations already have fragments of this in their security, privacy or risk programmes. AI governance brings them together into a coherent, auditable system. That is exactly the job the AI Management System (AIMS) in ISO 42001 is designed to do.
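To make the risk and impact assessment layer more concrete, here is a minimal Python sketch of an AI risk register. It assumes a 1 to 5 likelihood and impact scale, a likelihood × impact score and a hypothetical risk appetite threshold of 9. ISO 42001 does not prescribe any particular scoring method, and the class, field and system names are illustrative only.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Treatment(Enum):
    ACCEPT = "accept"
    MITIGATE = "mitigate"
    TRANSFER = "transfer"
    AVOID = "avoid"


@dataclass
class AIRisk:
    """One row in a hypothetical AI risk register."""
    system: str        # AI system the risk belongs to
    description: str   # what could go wrong
    likelihood: int    # assumed scale: 1 (rare) to 5 (almost certain)
    impact: int        # assumed scale: 1 (negligible) to 5 (severe)
    owner: str         # named human accountable for treating the risk
    treatment: Optional[Treatment] = None

    def __post_init__(self) -> None:
        # Reject values outside the assumed 1 to 5 scale
        for name in ("likelihood", "impact"):
            value = getattr(self, name)
            if not 1 <= value <= 5:
                raise ValueError(f"{name} must be between 1 and 5, got {value}")

    @property
    def score(self) -> int:
        # Simple likelihood x impact product, ranging from 1 to 25
        return self.likelihood * self.impact


RISK_APPETITE = 9  # hypothetical threshold: scores above this need treatment


def needs_treatment(risk: AIRisk) -> bool:
    """Flag risks above appetite that have no treatment decision yet."""
    return risk.score > RISK_APPETITE and risk.treatment is None


register = [
    AIRisk("credit-scoring-model", "Unjustified bias against a protected group", 3, 5, "Head of Credit Risk"),
    AIRisk("support-chatbot", "Hallucinated refund policy in answers", 4, 2, "Customer Service Lead", Treatment.MITIGATE),
    AIRisk("support-chatbot", "Personal data leaked through prompts", 2, 4, "Data Protection Officer"),
]

# Print the register highest score first, marking untreated risks above appetite
for risk in sorted(register, key=lambda r: r.score, reverse=True):
    flag = "TREAT" if needs_treatment(risk) else "ok"
    print(f"{risk.score:>2}  {flag:<5}  {risk.system}: {risk.description}")
```

The useful part is the structure rather than the arithmetic: every risk is tied to a specific AI system and a named owner, and anything above appetite without a treatment decision is flagged for action.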


Which Frameworks Support AI Governance?

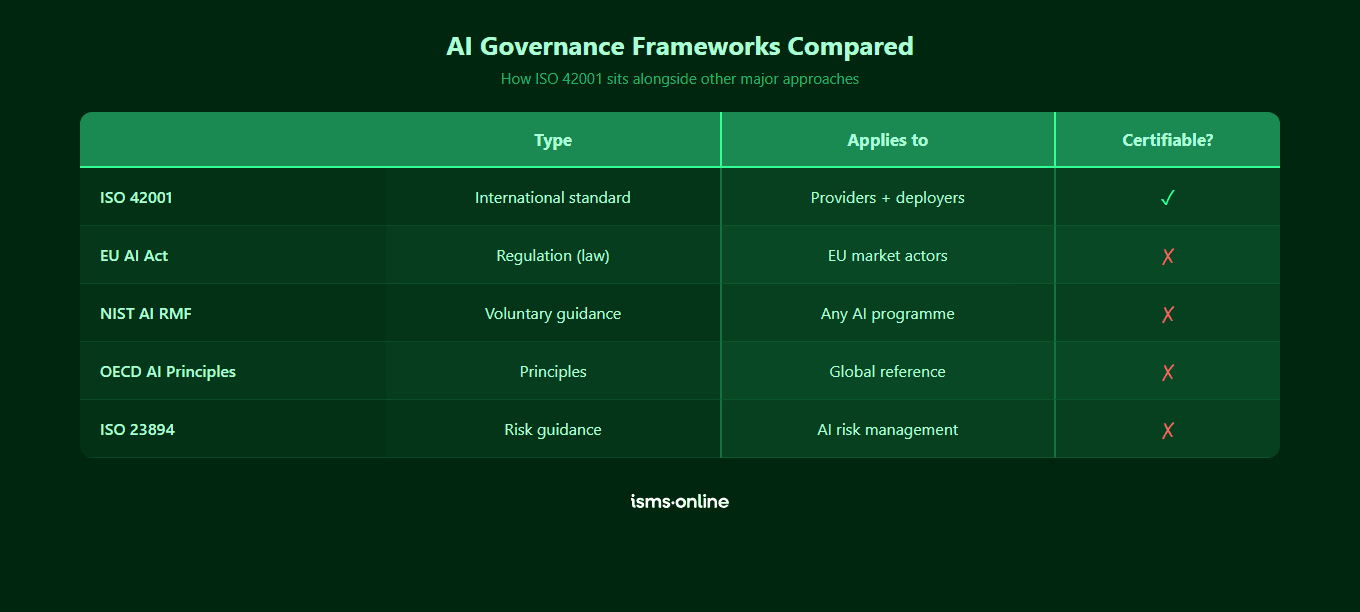

There is no single “AI governance standard” to rule them all. Most mature programmes use a small stack of complementary frameworks: one for the management system, one for risk, one for regulation, and international principles as the overlay. The five you will encounter most often are:

| Framework | Type | Applies to | Certifiable? | Primary use case |
|---|---|---|---|---|
| ISO/IEC 42001 | International management system standard | Any organisation that develops, provides or uses AI | Yes, by accredited certification bodies | The operating framework for an AI management system, certifiable and auditable |
| NIST AI RMF | Voluntary US risk management framework | Any organisation, widely used in the US | No | Structured approach to AI risk across the Govern, Map, Measure and Manage functions |
| EU AI Act | Regulation (legally binding in the EU) | Providers and deployers of AI systems placed on the market or used in the EU | Conformity assessment, not certification | Mandatory compliance obligations tiered by AI system risk level |
| OECD AI Principles | International policy principles | Governments and organisations worldwide | No | High level principles that underpin most national regimes and standards |
| ISO/IEC 23894 | International guidance standard | Any organisation running AI risk management | No (guidance, not requirements) | Detailed AI risk management guidance, often used alongside ISO 42001 |

The practical pattern most organisations adopt is straightforward. ISO 42001 is the backbone, because it provides a certifiable management system with Annex A controls, normative implementation guidance in Annex B and a structure designed to integrate with other management system standards. NIST AI RMF plugs in as a detailed risk taxonomy, especially for US operations. The EU AI Act sits on top as binding regulation for anything placed on or used in the EU market. The OECD principles are the ethical overlay, and ISO 23894 deepens the risk management layer.

For a side by side view of how the two highest profile frameworks interact, see ISO 42001 vs EU AI Act. For a practical roadmap, the implementation guide walks through every clause. And for organisations that have started to build AI governance informally, closing the AI governance gap shows how to consolidate it onto a recognised standard.

Who is Responsible for AI Governance in an Organisation?

AI governance is a team sport. It does not belong to one function, and concentrating it in just one (usually IT or compliance) is a common failure mode. A mature governance structure assigns clear roles at four levels:

- Board and executive leadership. Owns AI strategy, risk appetite and ultimate accountability. Approves the AI policy and receives regular reporting on AI risk, performance and incidents. In many organisations, this is now supported by a Chief AI Officer or a designated executive sponsor.

- AI governance lead or AI ethics committee. A dedicated person or cross-functional group (legal, security, privacy, risk, product, engineering, HR) that reviews material AI use cases, approves high risk systems and maintains the governance framework day to day.

- Risk, security, privacy and legal functions. Own the specialist components: AI risk assessment, model security, data protection impact assessments, contractual controls and regulatory interpretation. These roles typically run AI risk and impact assessments under Clause 6 of ISO 42001.

- Product and engineering teams. Build, deploy and operate AI systems within the approved guardrails. Responsible for model documentation, validation, monitoring and incident response at the system level.

The golden rule: every AI system in production should have a named human owner who can answer three questions without hesitation. What is this system used for? What are its known risks and limitations? Who authorised it to go live? If any answer is unclear, your governance structure has a gap.
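The golden rule lends itself to a simple automated check over an AI use case inventory. The sketch below is hypothetical: the record fields, the example systems and the choice to treat a blank answer as a gap are assumptions for illustration, not a format required by ISO 42001 or by any particular tool.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class AISystemRecord:
    """Hypothetical inventory entry covering the golden-rule questions."""
    name: str
    owner: Optional[str]         # named human owner
    intended_use: Optional[str]  # What is this system used for?
    known_risks: Optional[str]   # What are its known risks and limitations?
    approved_by: Optional[str]   # Who authorised it to go live?


def governance_gaps(record: AISystemRecord) -> List[str]:
    """Return the golden-rule questions this record cannot yet answer."""
    checks = {
        "no named owner": record.owner,
        "intended use undocumented": record.intended_use,
        "known risks and limitations undocumented": record.known_risks,
        "go-live approval not recorded": record.approved_by,
    }
    # Missing (None) or blank answers both count as gaps
    return [gap for gap, value in checks.items() if not (value and value.strip())]


inventory = [
    AISystemRecord("support-chatbot", "Customer Service Lead",
                   "Answer order-status questions",
                   "May hallucinate policy details", "AI review board"),
    AISystemRecord("cv-screening-pilot", "Head of Talent",
                   "Shortlist job applicants", "", None),
]

for record in inventory:
    gaps = governance_gaps(record)
    status = "OK" if not gaps else "GAP: " + "; ".join(gaps)
    print(f"{record.name}: {status}")
```

A check like this only proves that an answer has been recorded; whether the answer is adequate still needs human review.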

What Are the Maturity Levels of AI Governance?

AI governance does not appear fully formed. Most organisations pass through four levels:

- Ad hoc. No AI policy, no central inventory, individual teams using AI without oversight. Risk is invisible and unmeasured.

- Reactive. Draft AI policy, basic acceptable use guidance, some awareness of regulatory exposure. Governance kicks in after an incident rather than before one.

- Structured. Documented AI policy, AI use case inventory, risk and impact assessment process, named governance lead, initial controls in place. Often mapped loosely to a framework like NIST AI RMF.

- Managed and certifiable. Full AI Management System aligned to ISO 42001, with all 38 Annex A controls addressed via the Statement of Applicability, integrated with wider ISMS and privacy programmes, internal audit cycle running, management review in place. Ready for third party certification.

The gap between level 2 (Reactive) and level 4 (Managed and certifiable) is where most of the work lives. It is also where most of the commercial value sits, because level 4 is the level that reassures regulators, customers and insurers.
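As a rough self-assessment, the four level descriptions can be read as cumulative checklists. This sketch assumes each level requires every artefact from the levels below it. The artefact keys are hypothetical shorthand for the criteria listed above, and a real assessment would judge the quality of each artefact, not just its presence.

```python
from typing import List, Set, Tuple

# Hypothetical artefact keys paraphrasing the criteria for each level.
# Levels are cumulative: reaching one requires all artefacts of the levels below.
LEVELS: List[Tuple[str, Set[str]]] = [
    ("Ad hoc", set()),
    ("Reactive", {"ai_policy", "acceptable_use_guidance"}),
    ("Structured", {"use_case_inventory", "risk_and_impact_assessment_process",
                    "named_governance_lead"}),
    ("Managed and certifiable", {"statement_of_applicability",
                                 "internal_audit_cycle", "management_review"}),
]


def maturity_level(artefacts: Set[str]) -> str:
    """Return the highest level whose criteria, and all lower levels' criteria, are met."""
    achieved = LEVELS[0][0]
    for name, required in LEVELS[1:]:
        if not required <= artefacts:  # subset test: any missing artefact stops the climb
            break
        achieved = name
    return achieved


print(maturity_level({"ai_policy", "acceptable_use_guidance", "use_case_inventory"}))
```

The cumulative rule reflects the point made above: an organisation with audit and review in place but no inventory or risk process has not really reached level 4.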


How Does ISMS.online Operationalise AI Governance?

Principles and frameworks are the easy part. Operating AI governance week after week, with evidence that stands up to an auditor, is where most programmes break down. ISMS.online turns the ISO 42001 standard into a working management system so that AI governance is something you run, not something you talk about.

The platform operationalises AI governance across five axes:

- Structured AI Management System. A pre-built AIMS aligned to all 10 clauses of ISO 42001, so context, leadership, planning, support, operation, performance evaluation and improvement each have a dedicated home with working templates.

- AI specific risk and impact tooling. Dedicated registers for AI risk (Clause 6.1.2) and AI system impact (Clause 6.1.4), with scoring, treatment, owner assignment, review cycles and automatic links to the controls and evidence that address each finding.

- Policy library with attestations. Pre-drafted AI policies aligned to Clause 5.2 and Annex A.2, sitting in Policy Packs with version control, approval workflows and user attestations so your AI policy is active, not dormant.

- Control library mapped to Annex A. All 38 Annex A controls across 9 control areas are present out of the box, with implementation guidance and evidence attachment, feeding a live Statement of Applicability.

- Integrated assurance. Audit Management for internal audits (Clause 9.2), management review (Clause 9.3) and corrective actions (Clause 10), all linked to the risks, controls, policies and evidence they touch.

Because the platform is multi-standard, your AI governance programme sits alongside existing ISO 27001, GDPR and other work. Shared risks, shared evidence, shared audit programme. You build AI governance on top of what you already have, rather than standing up a second compliance function.

Why Choose ISMS.online for AI Governance?

ISMS.online is built specifically to operationalise AI governance through ISO 42001, not retrofitted onto an information security product. Here is what you get:

- A ready to run AIMS. Pre-configured management system covering all 10 clauses of ISO 42001 and all 38 Annex A controls, so your team tailors rather than designs from zero.

- AI native risk and impact assessments. Dedicated registers for AI risk and AI system impact, with scoring, treatment, review cycles and traceable links to every control and piece of evidence.

- Policy templates that reflect the principles. Pre-drafted AI policies covering accountability, transparency, fairness, safety, privacy, human oversight, inclusivity and robustness, with approval workflows and attestations.

- Live Statement of Applicability. Every Annex A control justified and linked to its risks, evidence and owners, always current rather than a static document.

- Audit ready by default. Internal audit programmes, management review inputs, corrective actions and evidence all linked and versioned, so certification audits are predictable rather than painful.

- Assured Results Method. A proven implementation approach, backed by onboarding, training and live human support, that has helped hundreds of organisations achieve certification first time across ISO 27001, ISO 42001 and other standards.

Whether you are writing your first AI policy, running a gap analysis, or preparing for third party certification, ISMS.online gives you the platform to turn AI governance from a slide deck into an operating system. For the full context on what the standard requires, read our implementation guide or the piece on closing the AI governance gap.

Ready to see the platform in action? Book a demo.

FAQs

What is AI governance in simple terms?

AI governance is the set of policies, people, processes and controls that make sure your organisation’s AI is developed, deployed and used in a safe, fair, accountable and legally compliant way. It is to AI what information security governance is to data, or what privacy governance is to personal information: a dedicated operating model for the specific risks and obligations AI introduces.

What are the main principles of AI governance?

Most credible frameworks agree on eight core principles: accountability, transparency, fairness, safety, privacy, human oversight, inclusivity and robustness. Many trace back to the OECD AI Principles and are reflected throughout ISO 42001, the NIST AI Risk Management Framework and the EU AI Act. Each principle translates into concrete, auditable controls when you operationalise it in an AI management system.

Is AI governance the same as AI ethics?

They are related but not the same. AI ethics is the set of values and principles that describe what responsible AI should look like. AI governance is the operating system that turns those principles into policies, processes, controls and evidence. Ethics answers “what should we do?”. Governance answers “how do we make sure we actually do it, and how do we prove it?”.

Which AI governance framework should we adopt?

For most organisations the sensible stack is: ISO 42001 as the certifiable management system backbone, NIST AI RMF as a detailed risk taxonomy, the EU AI Act as binding regulation where applicable, and OECD AI Principles as the ethical overlay. ISO 42001 is usually the primary choice because it is international, certifiable and built on the same management system structure as standards such as ISO 27001, which makes integration straightforward.

Who should own AI governance in the organisation?

Ultimate accountability sits with the board and executive leadership. Day to day ownership usually rests with a named AI governance lead, often supported by an AI ethics or review committee drawn from legal, risk, security, privacy, product and engineering. Each AI system in production should also have a named system owner who can explain its purpose, risks and approval status. Concentrating governance in a single function (such as IT) is a common failure mode.

Does AI governance apply if we only use AI rather than build it?

Yes. AI governance applies to organisations that develop, provide or use AI systems. If you deploy third party AI tools in business critical processes (for example, copilots that handle customer data, or AI agents that make automated decisions), you still need an AI policy, a use case inventory, risk and impact assessments, supplier due diligence and monitoring. ISO 42001 explicitly covers organisations that use AI, not just those that build it.

How does AI governance relate to ISO 27001 and GDPR?

AI governance sits alongside information security and privacy governance rather than replacing them. ISO 27001 protects information assets, GDPR protects personal data, and ISO 42001 governs AI systems. The three are complementary and overlap heavily: ISO 42001 follows the Annex SL management system structure shared by ISO 27001, and Annex D of ISO 42001 discusses integrating the AI management system with other management system standards such as ISO 27001. Running them on a single platform such as ISMS.online avoids duplicating risks, evidence and audits.