What is Responsible AI?

Responsible AI is the practice of designing, building, deploying, and using AI systems in a way that is safe, fair, transparent, accountable, and respectful of human rights. It is the bridge between high-level AI ethics statements and the day-to-day decisions engineers, data scientists, product owners, and executives make when they put an AI system into production.

The phrase covers two distinct things that are often blurred together. The first is a set of principles — what good looks like when an AI system interacts with people and data. The second is the operating model that makes those principles stick, including roles, policies, controls, risk assessments, and evidence. Without the operating model, responsible AI is an aspiration on a slide. With it, responsible AI becomes something you can audit.

Organisations that get this right treat responsible AI as a management discipline, not a communications exercise. That is exactly the shift that ISO 42001 was created to support.

What is Responsible AI Governance?

Responsible AI governance is the system of accountability, oversight, policies, processes, and controls your organisation uses to ensure AI is developed and used responsibly. It is the governance layer that turns principles into practice.

A good responsible AI governance programme answers a short list of hard questions:

- Who is accountable for each AI system in production, from data through to outcomes?

- How are risks assessed before an AI system is built and before it goes live?

- What controls are in place to address bias, safety, privacy, and misuse?

- How is human oversight designed into high-risk decisions?

- How is evidence captured so you can demonstrate responsible use to regulators, customers, and boards?

- How is the programme improved based on incidents, audits, and performance data?

In structural terms, responsible AI governance looks a lot like any other management system. It needs leadership commitment, risk-based planning, operational controls, performance evaluation, and continual improvement. That is why a formal AI Management System (AIMS) is the most effective vehicle for operationalising responsible AI.

What are the Principles of Responsible AI?

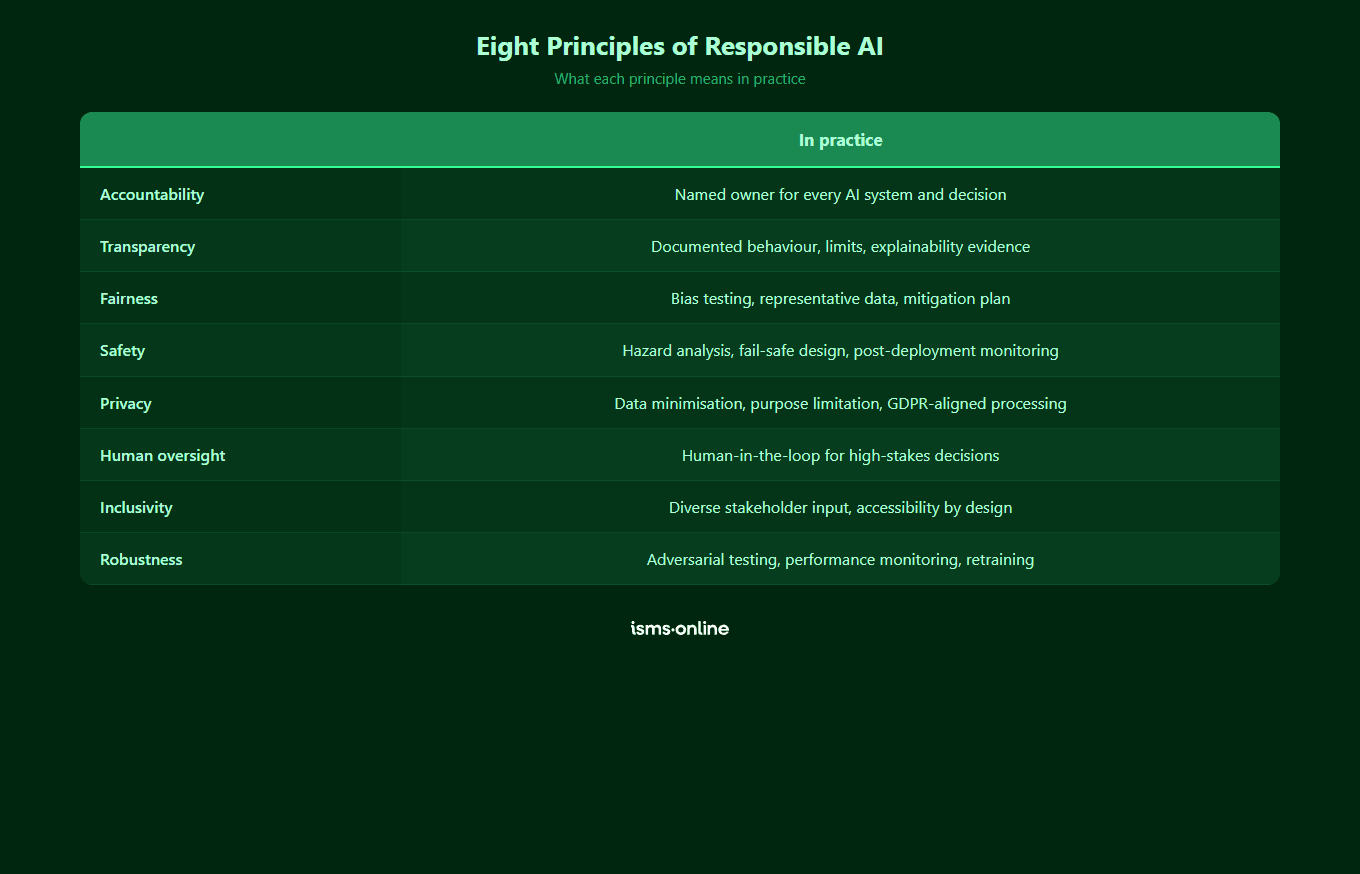

There is no single universal list, but the principles promoted by the OECD, the EU, NIST, UNESCO, and ISO converge on the same core ideas. The eight principles below cover what most regulators and customers expect you to demonstrate.

- Fairness. AI systems should not create or amplify unjust bias against individuals or groups. This means testing for disparate impact, documenting training data, and remediating issues before and after deployment.

- Accountability. Every AI system has a named owner, an accountable executive, and a clear chain of responsibility covering data, model, deployment, and outcomes.

- Transparency. Stakeholders understand how an AI system works, what data it uses, what decisions it makes, and what its limitations are. This is supported by documentation such as model cards, system cards, and user-facing disclosures.

- Safety. AI systems are designed to avoid foreseeable harm to people, property, and the environment, with safeguards proportionate to the risk level of the use case.

- Privacy. Personal data used to train and run AI systems is protected, minimised, and processed on a lawful basis, with appropriate technical and organisational measures.

- Human oversight. High-risk or consequential decisions have meaningful human review, with the authority and information to override the AI system.

- Inclusivity. AI systems are designed with, and tested against, the needs of the diverse populations they serve, including people with disabilities and under-represented groups.

- Robustness. AI systems perform reliably across the range of conditions they will encounter, resist adversarial manipulation, and degrade gracefully when inputs fall outside expected ranges.

These principles are not a menu to pick from. Responsible AI governance requires you to cover all of them, then prioritise investment based on the risk profile of each AI system in scope.
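To make the disparate impact testing mentioned under Fairness more concrete, here is a minimal sketch that computes the favourable-outcome rate per group and the ratio of the lowest rate to the highest. The function names, the sample data, and the 0.8 threshold (the "four-fifths" rule of thumb from US employment guidance) are assumptions for illustration. None of the frameworks named above prescribes this particular metric or threshold.

```python
from collections import defaultdict


def selection_rates(outcomes):
    """Return the share of favourable outcomes per group.

    `outcomes` is an iterable of (group, favourable) pairs, where
    `favourable` is True when the AI system produced the positive decision.
    """
    totals = defaultdict(int)
    favourable = defaultdict(int)
    for group, is_favourable in outcomes:
        totals[group] += 1
        if is_favourable:
            favourable[group] += 1
    return {group: favourable[group] / totals[group] for group in totals}


def disparate_impact_ratio(outcomes):
    """Ratio of the lowest group selection rate to the highest."""
    rates = selection_rates(outcomes)
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # no group received a favourable outcome
    return min(rates.values()) / highest


# Hypothetical sample: group A approved 8 of 10, group B approved 5 of 10.
sample = (
    [("A", True)] * 8 + [("A", False)] * 2
    + [("B", True)] * 5 + [("B", False)] * 5
)
ratio = disparate_impact_ratio(sample)
needs_review = ratio < 0.8  # assumed threshold; set it in your own policy
print(f"ratio={ratio:.3f} needs_review={needs_review}")
```

A single ratio is only a starting point. The remediation step the Fairness principle calls for still needs an owner and a documented decision.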

How Do Responsible AI Principles Translate Into Real Controls?

Principles only matter if they are implemented as controls that can be tested and evidenced. The table below maps each principle to what it means in practice, the relevant ISO 42001 clause or Annex A controls, and an example artefact auditors will expect to see.

| Principle | What it means in practice | ISO 42001 clause or Annex A control | Example artefact |
|---|---|---|---|
| Fairness | Test for and remediate bias across training data and model outputs | A.7 Data for AI systems, A.6.2 AI system life cycle | Bias assessment report with remediation actions and sign-off |
| Accountability | Named owners, executive sponsor, and documented responsibilities per AI system | Clause 5 Leadership, A.3 Internal organisation | AI system register with owners, RACI matrix, board-level reporting |
| Transparency | System documentation, model cards, external disclosures, explanation of outputs | A.8 Information for interested parties, A.6 AI system life cycle | Published model card, user-facing disclosure, documented intended use |
| Safety | Risk-proportionate safeguards, red teaming, pre-deployment testing | Clause 6.1.2 AI risk, A.6.2 AI system life cycle | AI risk register entry with treatments, test results, sign-off |
| Privacy | Lawful basis, data minimisation, access controls, data protection measures | A.7 Data for AI systems, A.4 Resources for AI systems | Data protection impact assessment, data processing records |
| Human oversight | Defined human-in-the-loop, escalation, and override for consequential decisions | A.9 Use of AI systems, A.6.2 AI system life cycle | Documented oversight model, escalation procedure, audit log of overrides |
| Inclusivity | Diverse design input, accessibility testing, under-represented group evaluation | A.5 Assessing impacts of AI systems, A.6 AI system life cycle | Impact assessment covering affected groups, accessibility test results |
| Robustness | Performance testing across conditions, adversarial resilience, graceful failure | A.6.2 AI system life cycle, Clause 9.1 Monitoring | Validation and verification report, monitoring dashboard, incident log |

Every row is an audit conversation waiting to happen. If you cannot produce the example artefact on demand, the control is not operating, regardless of what your policy says.
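The "no artefact, no control" rule can be checked mechanically. The sketch below is one possible shape for that check, not a feature of ISO 42001 or of any tool: the `Control` class, the artefact names, and the evidence paths are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Control:
    principle: str
    reference: str                  # clause or Annex A reference
    required_artefacts: list[str]   # what an auditor would ask to see
    evidence: dict[str, str] = field(default_factory=dict)  # artefact -> location

    def missing_artefacts(self) -> list[str]:
        """Artefacts that are required but have no evidence location recorded."""
        return [a for a in self.required_artefacts if not self.evidence.get(a)]

    def is_operating(self) -> bool:
        """Treat a control as operating only when every artefact can be produced."""
        return not self.missing_artefacts()


# Hypothetical controls and evidence paths.
fairness = Control(
    principle="Fairness",
    reference="A.7, A.6.2",
    required_artefacts=["bias assessment report"],
    evidence={"bias assessment report": "evidence/fairness/bias-report-v3.pdf"},
)
oversight = Control(
    principle="Human oversight",
    reference="A.9, A.6.2",
    required_artefacts=["documented oversight model", "audit log of overrides"],
    evidence={"documented oversight model": "evidence/oversight/model.md"},
)

for control in (fairness, oversight):
    status = "operating" if control.is_operating() else "NOT operating"
    print(f"{control.principle}: {status}; missing={control.missing_artefacts()}")
```

In this example the oversight control is reported as not operating because the override log has no evidence behind it, even though the oversight model itself is documented.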

Which Frameworks Support Responsible AI?

You do not need to invent responsible AI governance from first principles. A small number of frameworks already encode the consensus view, and most organisations will touch several of them at once.

ISO/IEC 42001

ISO 42001 is the first certifiable international standard for AI management systems. It covers 10 clauses, 38 Annex A controls across 9 control areas, and normative implementation guidance in Annex B. It is designed to be integrated with other management system standards like ISO 27001 and ISO 9001, and it provides the auditable backbone most responsible AI programmes need. Our closing the AI governance gap guide shows how the standard addresses the practical shortfalls teams run into.

NIST AI Risk Management Framework

The NIST AI RMF is a voluntary framework from the US National Institute of Standards and Technology. It defines four core functions (Govern, Map, Measure, Manage) and a set of characteristics of trustworthy AI. It is particularly useful as an organising model for AI risk and pairs well with ISO 42001, which gives you the management system and controls to implement the RMF functions.

OECD AI Principles

The OECD AI Principles were adopted in 2019 and updated in 2024. They are the policy reference most governments use, covering inclusive growth, human rights, transparency, robustness, and accountability. They are principles rather than a framework, so you use them to benchmark intent rather than to build an operating model.

EU AI Act

The EU AI Act is the first comprehensive AI regulation. It is risk-based, classifying AI systems as unacceptable, high, limited, or minimal risk, with the strictest obligations falling on high-risk systems. The EU AI Act is not a framework for responsible AI governance in itself, but it creates legal obligations that a well-designed responsible AI programme, built around ISO 42001, is well placed to meet.

In practice, most organisations converge on a stack: ISO 42001 as the management system, NIST AI RMF as an organising model for risk, OECD principles as a statement of intent, and regulations like the EU AI Act as binding constraints.

How Do You Implement Responsible AI Governance Step By Step?

Implementation is not a single project. It is a programme with a predictable sequence. The following steps follow the structure of ISO 42001 and the stages most mature organisations move through.

Step 1: Define Scope and Context

Identify the AI systems in use or planned, the business units involved, the external parties affected, and the legal and regulatory requirements in play. This is Clause 4 of ISO 42001 and the foundation of every later decision.

Step 2: Set Leadership Direction and Policy

Appoint an executive sponsor, establish a cross-functional AI governance forum, and publish an AI policy that commits to responsible use and sets the principles the organisation will operate by. This is Clause 5.

Step 3: Assess AI Risk and System Impact

Run an AI risk assessment (Clause 6.1.2) covering risks to the organisation, and an AI system impact assessment (Clause 6.1.4) covering impacts on individuals and society. These two assessments are different and both are required. See our guide on AI impact assessments for the practical detail.

Step 4: Implement Controls

Select and implement controls from Annex A (and beyond) to treat the risks and impacts identified. Cover all nine Annex A areas: policies, internal organisation, resources, impact assessments, life cycle, data, interested parties, use, and third parties.

Step 5: Document Everything

Every AI system needs a structured record: intended use, data sources, model information, performance metrics, limitations, risk treatments, and human oversight arrangements. Clause 7.5 requires this to be version controlled, approved, and accessible.
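One way to keep that record structured is to model it as a typed object. The sketch below does this with the fields listed above plus version and approver. The class name, field names, and example system are hypothetical, and Clause 7.5 does not mandate any particular schema.

```python
import json
from dataclasses import asdict, dataclass, fields


@dataclass
class AISystemRecord:
    name: str
    version: str                         # record version, for change control
    approved_by: str
    intended_use: str
    data_sources: list[str]
    model_information: str
    performance_metrics: dict[str, float]
    limitations: list[str]
    risk_treatments: list[str]
    human_oversight: str

    def incomplete_fields(self) -> list[str]:
        """Names of fields that are still empty (empty string, list, or dict)."""
        return [f.name for f in fields(self) if not getattr(self, f.name)]


# Hypothetical record with the limitations section not yet written.
record = AISystemRecord(
    name="loan-triage-model",
    version="1.2.0",
    approved_by="Head of Credit Risk",
    intended_use="Prioritise loan applications for manual review",
    data_sources=["application form data", "internal repayment history"],
    model_information="Gradient-boosted classifier, retrained quarterly",
    performance_metrics={"auc": 0.87},
    limitations=[],
    risk_treatments=["quarterly bias assessment", "manual review of declines"],
    human_oversight="Underwriter reviews every decline before it is issued",
)

print(record.incomplete_fields())        # fields to complete before approval
serialised = json.dumps(asdict(record), indent=2)  # store under version control
```

Serialising the record to JSON makes it easy to keep under version control and to diff when the model, data, or use case changes.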

Step 6: Operate and Monitor

Run the AI systems under the controls you defined. Monitor performance, fairness, drift, and incidents. Capture evidence of control operation. This is Clause 8 (operations) and Clause 9.1 (monitoring).
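Drift monitoring can be made measurable in several ways. As one illustration, the sketch below computes a population stability index (PSI) between a baseline sample and a production sample of a single numeric feature. PSI is only one commonly used drift metric, chosen here as an assumption. The bin count, the floor for empty bins, and the alert threshold of 0.25 are conventions to tune rather than requirements of ISO 42001.

```python
import math


def population_stability_index(expected, actual, bins=10):
    """PSI between a baseline (`expected`) and a current (`actual`) sample.

    Bins are equal-width over the baseline range; values outside that
    range are clamped into the first or last bin.
    """
    lo = min(expected)
    hi = max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the baseline is constant

    def proportions(values):
        counts = [0] * bins
        for value in values:
            index = int((value - lo) / width)
            index = min(max(index, 0), bins - 1)
            counts[index] += 1
        # A small floor avoids log(0) when a bin is empty.
        return [max(count / len(values), 1e-6) for count in counts]

    expected_share = proportions(expected)
    actual_share = proportions(actual)
    return sum(
        (a - e) * math.log(a / e)
        for e, a in zip(expected_share, actual_share)
    )


# Hypothetical data: production scores shifted upwards relative to baseline.
baseline = [i / 100 for i in range(100)]
production = [value + 0.3 for value in baseline]

psi = population_stability_index(baseline, production)
drift_alert = psi > 0.25  # assumed alert threshold
print(f"psi={psi:.3f} drift_alert={drift_alert}")
```

Whatever metric you choose, log the result alongside the date and the model version so that the monitoring itself produces evidence of control operation.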

Step 7: Audit and Review

Conduct internal audits against ISO 42001 (Clause 9.2) and review the programme at management level (Clause 9.3). Findings feed corrective actions and improvement (Clause 10).

Step 8: Improve Continually

Use incidents, audit findings, management review, stakeholder feedback, and changes in the external environment (new regulation, new AI capabilities, new threats) to evolve the programme. For a detailed walkthrough see our implementation guide.

What are Common Pitfalls in Responsible AI?

The same failure modes show up across industries. Recognising them early is the fastest way to avoid them.

- Policy without enforcement. A beautifully written AI policy that nobody operates against. If there are no attestations, approvals, or audit evidence, the policy exists on paper only.

- Model cards without updates. Documentation is produced at launch and never refreshed when the model, data, or use case changes. Auditors and regulators spot stale documentation quickly.

- Bias assessment without remediation. Teams run bias tests, log the findings, and ship anyway because there is no defined remediation path. Fairness becomes a box-ticking exercise, not a control.

- Unauditable LLMs in production. Third-party large language models are integrated into customer-facing workflows with no logging, no prompt governance, and no evaluation framework. When something goes wrong, there is nothing to investigate with.

- Missing incident process. No defined AI incident definition, no escalation path, no link between AI incidents and the wider incident management programme. Lessons learned never flow back into controls.

- No human in the loop for high-risk decisions. Systems making consequential decisions (hiring, credit, clinical triage) with rubber-stamp human review that has neither the information nor the authority to intervene.

- Responsible AI as a one-off project. A responsible AI programme is launched, certified, then left to decay. Without continual improvement, the programme drifts out of date within a year.

Each of these is a failure of governance, not technology. That is why future-proofing with responsible AI depends on the quality of the management system you build around it.
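The "unauditable LLMs" pitfall is often the cheapest to fix, because the missing piece is a logging layer rather than a new model. The sketch below wraps any model call so that each prompt and response is recorded with a timestamp, caller, and content digest. The `audited_call` function, the `fake_llm` stand-in, and the in-memory log are hypothetical. A real deployment would call your provider's client and write to durable, access-controlled storage.

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import Callable


def audited_call(llm: Callable[[str], str], prompt: str, *,
                 model_id: str, user_id: str, log: list) -> str:
    """Call `llm` and append a JSON audit entry describing the exchange."""
    response = llm(prompt)
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "model_id": model_id,
        "user_id": user_id,
        "prompt": prompt,
        "response": response,
    }
    # The digest lets a reviewer detect later edits to the stored entry.
    payload = json.dumps(entry, sort_keys=True).encode("utf-8")
    entry["digest"] = hashlib.sha256(payload).hexdigest()
    log.append(json.dumps(entry, sort_keys=True))
    return response


def fake_llm(prompt: str) -> str:
    """Stand-in for a third-party model client (assumption for this sketch)."""
    return f"echo: {prompt}"


audit_log: list[str] = []
answer = audited_call(
    fake_llm,
    "Summarise the refund policy",
    model_id="vendor-model-x",   # hypothetical identifier
    user_id="agent-42",          # hypothetical identifier
    log=audit_log,
)
print(answer)
print(len(audit_log), "entry recorded")
```

Prompts and responses can contain personal data, so the retention and access rules for this log belong in the same governance programme as the model itself.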

How ISMS.online Supports Responsible AI Governance

ISMS.online gives you the operating platform to run responsible AI governance as a managed programme, not a set of good intentions. Everything a responsible AI framework requires — policies, risk assessments, impact assessments, controls, evidence, audits — lives in one connected workspace, mapped to the clauses and controls of ISO 42001.

Here is how the platform maps to the eight principles and the implementation steps above:

- Pre-built AI Management System. A working AIMS aligned to the 10 clauses of ISO 42001, so your programme starts with structure rather than a blank page.

- Policy Packs for responsible AI. Pre-drafted AI policies covering fairness, transparency, accountability, and human oversight, with version control, approval workflows, and user attestations.

- AI risk and impact assessment registers. Separate, connected registers for Clause 6.1.2 AI risk and Clause 6.1.4 AI system impact, with scoring, treatment plans, owners, and review cycles.

- Annex A control library. All 38 controls across the 9 Annex A areas, ready to tailor, with evidence linking so fairness, transparency, safety, and oversight controls produce auditable artefacts.

- Documentation hub. Central home for model cards, intended use statements, validation reports, and stakeholder disclosures, all version controlled and access managed.

- Audit and management review workflows. Internal audits (Clause 9.2), management review (Clause 9.3), and corrective actions (Clause 10) as first-class features, so responsible AI governance is continually improved rather than frozen at launch.

Why Choose ISMS.online for Responsible AI?

ISMS.online is purpose-built for AI governance, not retrofitted onto an information security product. That matters when responsible AI is the outcome you need to evidence.

- Operationalises the eight principles. Every principle — fairness, accountability, transparency, safety, privacy, human oversight, inclusivity, robustness — has a home in the platform, linked to the clauses and controls that implement it.

- Purpose-built AIMS. Pre-configured AI Management System covering all 10 clauses and 38 Annex A controls, so your team tailors rather than designs.

- Dual assessment tooling. Native support for both AI risk (Clause 6.1.2) and AI system impact (Clause 6.1.4), with scoring, treatment, and linkage to controls and evidence.

- Evidence you can audit. Controlled policies, model documentation, test results, and incident records in one auditable library, mapped to the relevant controls.

- Multi-framework alignment. Build once and align to ISO 42001, NIST AI RMF, OECD principles, and EU AI Act requirements in a single platform.

- Assured Results Method. Proven implementation approach backed by human expertise, used by hundreds of organisations to become audit-ready and stay there.

Ready to see the platform in action? Book a demo to see how ISMS.online operationalises responsible AI governance across your organisation.

FAQs

What is responsible AI in simple terms?

Responsible AI is the practice of building, deploying, and using AI systems in a way that is safe, fair, transparent, accountable, and respectful of human rights. It combines a set of principles with the governance, policies, and controls needed to make those principles operate in practice — not just appear in a mission statement.

What is the difference between responsible AI and AI governance?

Responsible AI is the outcome — AI systems that meet agreed principles. AI governance is the system of accountability, oversight, policies, and controls that delivers that outcome. Responsible AI governance is the combined phrase: governing AI in a way that produces responsible outcomes, evidenced through documentation, controls, and audit.

What are the core principles of responsible AI?

Most frameworks converge on eight principles: fairness, accountability, transparency, safety, privacy, human oversight, inclusivity, and robustness. The exact wording varies between OECD, NIST, UNESCO, and ISO, but the intent is the same. Responsible AI governance requires all of them to be addressed, prioritised by the risk profile of each AI system.

Is ISO 42001 the right framework for responsible AI governance?

For most organisations, yes. ISO 42001 is the first certifiable international standard for AI management systems, and it encodes the consensus principles into a structured, auditable framework of clauses and controls. It integrates with ISO 27001 and other management system standards, and it provides the operating backbone that lets you demonstrate responsible AI to customers, regulators, and boards.

How does responsible AI governance relate to the EU AI Act?

The EU AI Act creates legally binding obligations for providers and deployers of AI systems operating in the EU, especially for high-risk systems. A well-designed responsible AI governance programme, built around ISO 42001, gives you most of the controls the EU AI Act expects (risk management, data governance, transparency, human oversight, accuracy, robustness, and cybersecurity) and the evidence trail to demonstrate compliance.

How long does it take to implement responsible AI governance?

For organisations with a mature management system (ISO 27001, ISO 9001) already in place, a baseline responsible AI programme aligned to ISO 42001 can be stood up in weeks rather than months, because much of the governance infrastructure is reusable. Organisations starting from scratch typically take 3 to 6 months to become audit-ready, depending on scope, number of AI systems, and internal resource. Maturity then increases over subsequent cycles as incidents, audits, and management review drive continual improvement.

Do we need responsible AI governance if we only use third-party AI tools?

Yes. Responsible AI governance applies to organisations that develop, provide, or use AI systems. If your teams rely on third-party large language models, copilots, or AI-enabled SaaS, you still need intended use documentation, supplier due diligence, human oversight arrangements, and an incident process. Annex A.9 (use of AI systems) and Annex A.10 (third-party and customer relationships) of ISO 42001 are designed for exactly this scenario.