What is AI governance software?

AI governance software is a category of tooling that helps organisations design, run, and evidence the controls, policies, risks, and assessments that sit around their use of artificial intelligence. It is the operational backbone for anyone who has to prove, to a board, regulator, auditor, or enterprise customer, that AI is being built or used responsibly.

In practical terms, AI governance software should give you a single place to:

- Maintain a live inventory of AI systems, models, and use cases

- Document and approve AI policies, standards, and acceptable use rules

- Run AI risk assessments and AI system impact assessments

- Manage a control library mapped to recognised frameworks such as ISO 42001, the EU AI Act, and the NIST AI Risk Management Framework

- Capture audit evidence against each control with owners, review cycles, and version history

- Plan, run, and report on internal audits and management reviews

- Track third-party AI suppliers and the models they expose you to
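The "single place" idea is easier to picture as a data model. Below is a minimal Python sketch of how one AI system inventory entry might link to the other records in the list above. The class names, fields, owners, and IDs are hypothetical assumptions for illustration only. They are not any vendor's schema.

```python
from dataclasses import dataclass, field

# Hypothetical data model: names and fields are illustrative assumptions only.


@dataclass
class Supplier:
    name: str
    models_provided: list[str] = field(default_factory=list)


@dataclass
class AISystem:
    name: str
    owner: str
    use_case: str
    risk_ids: list[str] = field(default_factory=list)               # AI risk register entries
    impact_assessment_ids: list[str] = field(default_factory=list)  # AI system impact assessments
    control_ids: list[str] = field(default_factory=list)            # controls applied to this system
    suppliers: list[Supplier] = field(default_factory=list)         # third parties behind the system

    def governance_gaps(self) -> list[str]:
        """List the governance records this system does not yet link to."""
        gaps = []
        if not self.risk_ids:
            gaps.append("risk assessment")
        if not self.impact_assessment_ids:
            gaps.append("impact assessment")
        if not self.control_ids:
            gaps.append("mapped controls")
        return gaps


inventory = [
    AISystem(
        name="Support chatbot",
        owner="Head of Customer Service",
        use_case="Answer customer queries",
        risk_ids=["AIR-001"],
        control_ids=["CTRL-014"],
        suppliers=[Supplier("Example model provider", ["example-model"])],
    ),
]

for system in inventory:
    print(system.name, "->", system.governance_gaps())
# prints: Support chatbot -> ['impact assessment']
```

A real platform would keep these links live and add owners, review dates, and version history. The point of the sketch is that gaps show up as missing links rather than missing spreadsheet rows.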

You will see the category described under several closely related labels, including AI compliance software, AI compliance tools, AI governance tools, and AI governance platform. They all point at the same problem: giving AI governance a structured home so it can be operated at pace without sliding back into spreadsheets.

Do you need AI governance software?

Not every organisation needs a dedicated platform on day one. If you have one AI use case, one policy, and a single owner, a good document structure and a risk register tab can get you surprisingly far. The question is how long that holds.

You likely need AI governance software if any of the following are true:

- You are pursuing or planning ISO 42001 compliance or another formal AI governance certification

- You operate in a regulated sector (financial services, healthcare, legal, public sector) where AI use needs demonstrable oversight

- Enterprise customers are asking AI-specific questions in procurement and due diligence

- You fall within the scope of the EU AI Act for a high-risk or general-purpose AI system

- You have more than a handful of AI use cases across multiple teams

- You already run an ISO 27001 programme and want to layer AI governance on top without duplicating effort

- You are building an AI Management System (AIMS) and need clause-to-control traceability

If any two of those apply, spreadsheets and shared drives will start to cost you more in manual effort than a platform costs outright. The right time to shortlist AI governance software is usually before the first external audit, not during it.
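The "any two" rule of thumb is a simple count against a threshold. The Python sketch below shows it. The trigger keys paraphrase the checklist above, and the True/False answers are a hypothetical example, not a recommendation.

```python
# Rule of thumb from the text: two or more triggers suggest spreadsheets will no longer cope.
# Trigger keys paraphrase the checklist; the True/False answers are a hypothetical example.
triggers = {
    "pursuing_iso_42001_or_similar": False,
    "regulated_sector": True,
    "customer_ai_due_diligence": True,
    "eu_ai_act_high_risk_or_gpai": False,
    "many_use_cases_across_teams": False,
    "existing_iso_27001_programme": False,
    "building_an_aims": False,
}


def should_shortlist_platform(answers: dict[str, bool], threshold: int = 2) -> bool:
    """Return True when at least `threshold` of the triggers apply."""
    # True counts as 1 and False as 0, so the sum is the number of triggers that apply.
    return sum(answers.values()) >= threshold


print(should_shortlist_platform(triggers))  # prints: True (two triggers apply)
```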

What should AI governance software do?

Every vendor in this space will claim they cover AI governance end to end. The category is new enough that there is genuine variation in what that actually means. Use the checklist below as a baseline. If a platform cannot credibly answer the vendor questions on the right, treat that as a gap.

| Capability | Why it matters | Questions to ask a vendor |
|---|---|---|
| AI policy management | AI policies must be documented, approved, communicated, reviewed, and attested to by users. Free-floating Word documents fail audits. | Do you ship pre-drafted AI policy templates? How do approvals, version control, and user attestations work? |
| AI risk register | AI risk assessment is a distinct discipline under ISO 42001 Clause 6.1.2 and similar frameworks. It needs its own scoring model and treatment workflow. | Can I run an AI-specific risk register separate from my information security risk register, with shared data where useful? |
| AI system impact assessments | Clause 6.1.4 of ISO 42001 requires a structured AI system impact assessment. Article 27 of the EU AI Act requires certain deployers of high-risk AI systems to carry out a fundamental rights impact assessment. | Is there a dedicated impact assessment module, or is this a custom field on a risk form? |
| Control library | A pre-mapped control library saves months of manual work and makes gaps easier to spot. For ISO 42001 that means all 38 Annex A controls across 9 areas (A.2 to A.10). | Which frameworks are covered out of the box? Are the controls mapped to each other? |
| Evidence management | Audit evidence needs to be version controlled, access controlled, and tied directly to the control it supports. | How is evidence linked to controls and policies? Who can see what? What is the version history model? |
| Audit management | ISO 42001 requires internal audits under Clause 9.2 and corrective action under Clause 10.2, and adjacent standards have equivalent clauses. Findings need tracking through to closure. | Can I plan, execute, and close internal audits in the platform? Does it track corrective actions to completion? |
| Statement of Applicability | The Statement of Applicability is the auditor-facing summary of which controls you apply and why. It should be live, not a Word document. | Is the SoA generated from live control data? Can I export it in a format auditors expect? |
| Supplier and third-party management | AI supply chains are deep and fast-moving. Annex A.10 and many regulatory regimes require documented supplier oversight. | Is there a supplier register with AI-specific due diligence fields? Can I track model providers separately from general vendors? |
| Multi-standard support | Most AI governance programmes sit alongside ISO 27001, SOC 2, GDPR, and sector-specific rules. Running each in a separate tool is expensive. | What other standards can I run on the same platform? How is data shared across them? |
| Integrations | Evidence often lives in other tools (Jira, GitHub, cloud providers, HR systems). Manual copy and paste is a maintenance tax. | What integrations exist today? Is there an API for custom evidence capture? |
| User roles and access | AI governance spans legal, risk, engineering, product, and compliance. Each audience needs the right view and the right edit rights. | What role-based access controls are available? Can I limit visibility on sensitive impact assessments? |
| Reporting | Boards and auditors need structured reporting on control status, risk exposure, open actions, and certification readiness. | What reports ship out of the box? Can I build custom dashboards? |

This is the floor, not the ceiling. Platforms that clear all twelve capabilities comfortably will give you genuine operating leverage. Platforms that clear fewer than eight should probably not make your shortlist.
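The shortlist rule above can be written as a small Python check. The capability names come from the table. The text does not say what to do with a vendor that clears eight to eleven capabilities, so the middle verdict here is an assumption.

```python
# The twelve capabilities from the checklist table.
CAPABILITIES = (
    "AI policy management",
    "AI risk register",
    "AI system impact assessments",
    "Control library",
    "Evidence management",
    "Audit management",
    "Statement of Applicability",
    "Supplier and third-party management",
    "Multi-standard support",
    "Integrations",
    "User roles and access",
    "Reporting",
)


def shortlist_verdict(cleared: set[str]) -> str:
    """Classify a vendor by how many of the twelve capabilities it clears comfortably."""
    unknown = cleared - set(CAPABILITIES)
    if unknown:
        raise ValueError(f"Unknown capabilities: {sorted(unknown)}")
    if len(cleared) == len(CAPABILITIES):
        return "strong candidate"
    if len(cleared) < 8:
        return "drop from shortlist"
    # Assumption: the text is silent on 8 to 11, so treat the missing capabilities as demo questions.
    return "shortlist, probe the gaps"


print(shortlist_verdict(set(CAPABILITIES[:9])))  # prints: shortlist, probe the gaps
```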

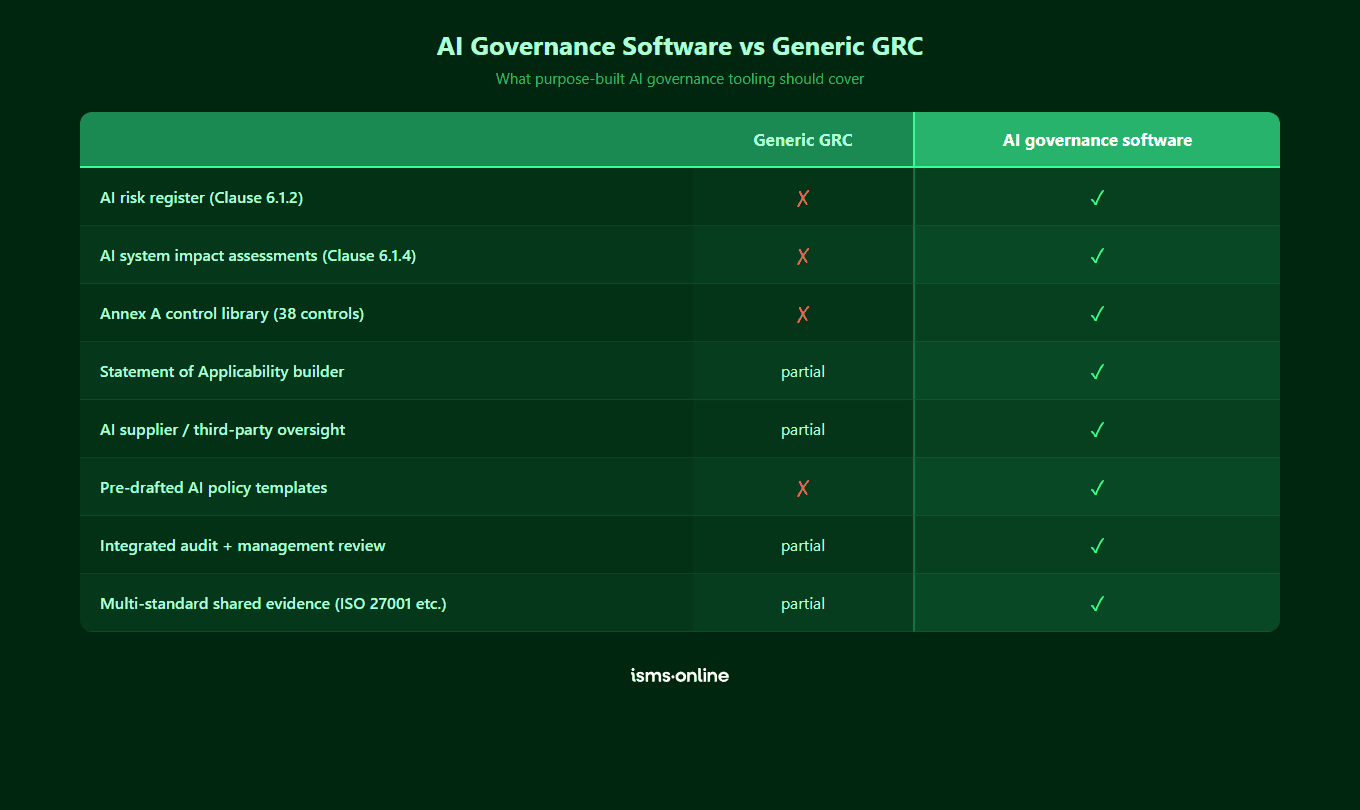

How is AI governance software different from generic GRC tools?

Plenty of established governance, risk, and compliance platforms will tell you they do AI governance. Some now genuinely do. Many do not, and the difference matters.

A generic GRC tool built around ISO 27001, SOC 2, and enterprise risk management typically lacks four things that AI governance requires:

- A native AI asset model. AI systems, models, training data, and use cases are not the same as information assets. Shoehorning them into an information security asset register loses the nuance auditors and regulators care about.

- AI-specific risk and impact structures. ISO 42001 deliberately separates AI risk assessment (Clause 6.1.2) from AI system impact assessment (Clause 6.1.4). Generic GRC tools usually offer one risk register and a custom field labelled impact, which is not the same thing.

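- A life cycle view of AI systems. Annex A.6 covers objectives for responsible development and the AI system life cycle itself: requirements, design and development, verification and validation, deployment, and operation and monitoring. That is a workflow, not a checklist. Tools without a life cycle model make this awkward.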

- Coverage of the fast-moving AI regulatory landscape. The EU AI Act, US executive orders, NIST AI RMF, and sector rules are all moving quickly. AI-native platforms tend to update faster because AI is their focus.
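The separation described in the second point is easiest to see as two different record types. Below is a hypothetical Python sketch. The 1 to 5 likelihood and consequence scales, the multiplicative score, and the completeness rule are all assumptions, since ISO 42001 leaves the risk criteria and method to the organisation.

```python
from dataclasses import dataclass, field


@dataclass
class AIRisk:
    """AI risk register entry (Clause 6.1.2): a scored risk and its treatment."""
    risk_id: str
    description: str
    likelihood: int   # assumed scale: 1 (rare) to 5 (almost certain)
    consequence: int  # assumed scale: 1 (minor) to 5 (severe)
    treatment: str = "not yet decided"

    @property
    def score(self) -> int:
        # Assumed scoring model: likelihood multiplied by consequence (1 to 25).
        return self.likelihood * self.consequence


@dataclass
class ImpactAssessment:
    """AI system impact assessment (Clause 6.1.4): consequences for individuals, groups, and societies."""
    assessment_id: str
    system_name: str
    affected_groups: list[str] = field(default_factory=list)
    potential_harms: list[str] = field(default_factory=list)
    mitigations: list[str] = field(default_factory=list)

    def is_complete(self) -> bool:
        # Assumed rule: complete once groups, harms, and mitigations are all recorded.
        return bool(self.affected_groups and self.potential_harms and self.mitigations)


risk = AIRisk("AIR-001", "Chatbot gives outdated policy answers", likelihood=3, consequence=4)
assessment = ImpactAssessment(
    "AIA-001",
    "Support chatbot",
    affected_groups=["customers"],
    potential_harms=["misleading advice"],
)
print(risk.score, assessment.is_complete())  # prints: 12 False
```

A single register with an "impact" field flattens these two shapes into one. That is why the distinction tends to surface at audit.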

This does not mean you should pick a single-purpose AI governance tool at the expense of your wider compliance stack. The strongest option is usually a multi-standard platform that has genuinely invested in ISO 42001 and the wider AI governance space, so you get AI-specific depth on top of the breadth of a mature GRC platform.

How should you evaluate AI governance platforms?

Most AI governance software buying decisions go wrong in one of three ways. Teams buy on brand and discover the tool was built for something else. They buy on price and discover the implementation services cost more than the licence. Or they buy on a single feature demo and discover the rest of the platform is hollow.

The criteria below give you a more structured way to compare. Score each vendor from 1 (poor) to 5 (excellent) on every criterion, and pay close attention to the Red flag column.

| Criterion | What good looks like | Red flag |
|---|---|---|
| Framework coverage | ISO 42001 is a first-class framework with all 38 Annex A controls pre-mapped. EU AI Act and NIST AI RMF mappings are available. | ISO 42001 is a custom framework you build yourself, or a thin wrapper around ISO 27001. |
| Pre-built content | Ready-to-adopt AI policies, risk libraries, impact assessment templates, and control guidance. | An empty template that asks you to write your own policies and scoring models from scratch. |
| Integration with ISO 27001 | A single platform runs both standards with shared risks, evidence, suppliers, and audits. | Separate instances, separate data, or manual export and import between the two. |
| Time to value | You can demonstrate a working AIMS within weeks, not quarters. | The vendor insists on a six-figure implementation programme before anything runs. |
| Implementation methodology | A documented approach with playbooks, templates, and human onboarding. | You are on your own, or handed to a partner you did not choose. |
| Audit readiness | Auditor-friendly exports, a live Statement of Applicability, and evidence linked at control level. | Evidence lives in folders that are not linked to the controls they support. |
| Pricing transparency | Clear pricing tiers tied to users, entities, and modules, quoted quickly. | Custom quotes that take weeks, aggressive multi-year lock-in, and hidden per-seat fees. |
| Product roadmap | Frequent releases, a public changelog, and AI-specific features shipped in the last 90 days. | Generic roadmap slides and no evidence of AI investment in the last year. |
| Support model | Real humans, fast response, proactive check-ins, and customer success coverage. | Ticket-only support with a 48-hour SLA and no named contact. |
| Customer proof | Named reference customers in your sector and case studies that reference ISO 42001 specifically. | Generic logos and no customers who have achieved AI governance certification with the tool. |

Set thresholds before you start demos. For example, a minimum of 4 out of 5 on framework coverage, pre-built content, and audit readiness, and no 1s anywhere. That makes the final decision much less emotional.
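Agreeing the thresholds in advance works best when they are written down as an explicit rule. The Python sketch below encodes the example thresholds from the text: at least 4 on framework coverage, pre-built content, and audit readiness, and no 1s anywhere. The vendor scores are invented for illustration.

```python
CRITERIA = (
    "Framework coverage",
    "Pre-built content",
    "Integration with ISO 27001",
    "Time to value",
    "Implementation methodology",
    "Audit readiness",
    "Pricing transparency",
    "Product roadmap",
    "Support model",
    "Customer proof",
)
# Example thresholds from the text: at least 4 on these three, and no 1s anywhere.
MUST_SCORE_AT_LEAST_4 = {"Framework coverage", "Pre-built content", "Audit readiness"}


def check_vendor(scores: dict[str, int]) -> tuple[bool, list[str]]:
    """Return (passes, reasons) for one vendor's 1-5 scores."""
    missing = [c for c in CRITERIA if c not in scores]
    if missing:
        raise ValueError(f"Missing scores for: {missing}")
    reasons = []
    for criterion in CRITERIA:
        score = scores[criterion]
        if not 1 <= score <= 5:
            raise ValueError(f"{criterion}: {score} is outside the 1-5 scale")
        if score == 1:
            reasons.append(f"{criterion} scored 1")
        elif criterion in MUST_SCORE_AT_LEAST_4 and score < 4:
            reasons.append(f"{criterion} scored {score}, below 4")
    return (not reasons, reasons)


# Hypothetical scores for two vendors.
vendor_a = {**{c: 4 for c in CRITERIA}, "Pricing transparency": 2}
vendor_b = {**vendor_a, "Audit readiness": 3}
print(check_vendor(vendor_a))  # prints: (True, [])
print(check_vendor(vendor_b))  # prints: (False, ['Audit readiness scored 3, below 4'])
```

Returning the reasons alongside the verdict keeps the decision explainable when stakeholders ask why a vendor was dropped.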

What does an AI governance software buying process look like?

A well-run AI governance software procurement usually takes six to ten weeks. Shorter than that and you are probably missing red flags. Much longer and you are probably over-engineering the decision for a category that is moving fast.

A sensible sequence looks like this:

- Define scope and use cases. Document the AI systems in scope, the frameworks you are targeting (ISO 42001, EU AI Act, NIST AI RMF, sector rules), the audiences who need access, and the integration points with your existing compliance stack.

- Build the capability checklist. Use the 12 capabilities above as a baseline and add anything specific to your environment, such as sector regulators or existing tooling you must integrate with.

- Build a shortlist of 3 to 5 vendors. Include at least one AI-native platform, at least one multi-standard GRC platform with a genuine AI story, and a reference point such as building it yourself or extending a current tool.

- Scripted demos. Send each vendor the same scenario (a specific AI use case you run today) and ask them to demonstrate how the platform would handle policy, risk, impact assessment, controls, evidence, and audit for it. Avoid generic product tours.

- Reference calls. Speak to at least one customer per shortlisted vendor who is running ISO 42001 or a comparable AI governance programme. Ask about time to first audit, implementation pain, and ongoing effort.

- Commercial negotiation. Insist on pricing clarity, implementation scope, support response times, and a clear exit clause.

- Pilot or phased rollout. Start with the highest-risk AI use case or the first framework on your target list. Prove the platform in that narrow scope before rolling it out more widely.

Teams that treat AI governance software as another checkbox procurement tend to regret it. Teams that treat it as the operating model for a multi-year AI compliance programme make better decisions and move faster after signature.

How ISMS.online fits into the AI governance software landscape

ISMS.online is a multi-standard governance, risk, and compliance platform with a purpose built AI Management System designed around ISO 42001. That combination matters because most organisations adopting ISO 42001 are already running ISO 27001, and increasingly SOC 2, GDPR, and NIS 2 on the side. Using the same platform means shared risks, shared evidence, shared audits, and one management review.

Within the AI governance category specifically, the platform ships with:

- A pre-configured AIMS framework mapped to all 10 clauses of ISO 42001

- The full Annex A control library (38 controls across 9 areas, A.2 through A.10)

- Dedicated registers for AI risk (Clause 6.1.2) and AI system impact assessment (Clause 6.1.4)

- Policy Packs with pre-drafted AI policies, approval workflows, and user attestations

- A live Statement of Applicability builder

- Integrated internal ISO 42001 audit management, findings, and corrective actions

- A supplier register covering the third-party obligations in Annex A.10

- Shared data between ISO 42001 and ISO 27001 implementations, so integrated management systems are the default

For a product focused deep dive into how the platform implements ISO 42001 specifically, see the dedicated ISO 42001 software page. This page is deliberately a category guide, not a product brochure. Use the checklist above to test any vendor you speak to, including us.

Why choose ISMS.online for AI governance software?

When buyers put ISMS.online next to the capability checklist and buying criteria in this guide, a handful of themes come up consistently:

- Breadth plus depth. A multi-standard platform that covers ISO 27001, SOC 2, GDPR, NIS 2, and others, with genuine AI governance depth built around ISO 42001 rather than bolted on.

- Pre-built content. Policies, risk libraries, impact assessment templates, and control guidance are all ready to adopt, so teams start tailoring on day one instead of drafting from scratch.

- Every Annex A control mapped. All 38 ISO 42001 Annex A controls, across areas A.2 to A.10, are present with evidence slots, owners, and review cycles in place.

- Integrated audit experience. A live Statement of Applicability, linked evidence at control level, and internal audit workflows that auditors find easy to follow.

- Fast time to value. Most customers move from contract to working AIMS in weeks rather than quarters, supported by the Assured Results Method and hands-on onboarding.

- Human support. Real humans on chat and calls, not just a ticket queue, which matters when you are preparing for an external audit and need an answer inside the hour.

- Positioned as your platform, not your certifier. ISMS.online helps you achieve certification with accredited bodies. We are the operating platform behind your programme, not the people who sign the certificate.

Ready to see the platform in action? Book a demo.

FAQs

What is AI governance software?

AI governance software is a category of platform that helps organisations manage the policies, risks, controls, assessments, and audit evidence associated with their use of artificial intelligence. It gives AI governance a single operational home rather than leaving it scattered across spreadsheets, shared drives, and email threads. Common synonyms include AI compliance software, AI compliance tools, AI governance tools, and AI governance platform.

How is AI governance software different from AI compliance tools?

In practice the terms are used interchangeably. AI governance software tends to emphasise the full management system (policies, roles, culture, life cycle) while AI compliance tools can imply a narrower focus on specific regulations such as the EU AI Act. The best platforms cover both. When comparing vendors, focus on capabilities rather than labels.

Can a generic GRC platform cover AI governance?

Some can, many cannot yet. Look for AI-native asset models, dedicated AI risk and AI system impact assessment registers, a control library that includes all 38 ISO 42001 Annex A controls, and recent product investment in AI-specific features. If the platform treats AI as a custom field on an information security asset, it will not hold up at audit.

Do I need AI governance software for the EU AI Act?

Not strictly, but it makes compliance materially easier. The EU AI Act requires documented risk management, data governance, transparency, human oversight, and post-market monitoring for high-risk AI systems. AI governance software gives you structured registers and workflows for each of those obligations, with the audit trail regulators will expect.

How much does AI governance software cost?

Pricing varies widely. Entry-level platforms start in the low five figures per year for a small organisation, while enterprise platforms can run well into six figures for large multi-entity programmes. Watch for implementation services, per-user fees, and module-based pricing, all of which can inflate the headline figure. Total cost of ownership over three years is a fairer comparison than the first-year licence.
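To see why three-year total cost of ownership is the fairer comparison, here is a simple Python calculation. The cost components follow the text: implementation services, per-user fees, and module pricing. All figures are hypothetical, and the model assumes flat annual prices with no uplifts or discounts.

```python
def three_year_tco(
    annual_licence: int,
    annual_module_fees: int,
    per_user_per_year: int,
    users: int,
    implementation: int,
    years: int = 3,
) -> int:
    """One-off implementation plus recurring licence, module, and per-user costs."""
    # Assumption: prices stay flat each year (no uplifts, discounts, or currency effects).
    annual_run_cost = annual_licence + annual_module_fees + per_user_per_year * users
    return implementation + years * annual_run_cost


# Hypothetical quotes for a 50-user programme (figures invented for illustration).
vendor_a = three_year_tco(annual_licence=30_000, annual_module_fees=0,
                          per_user_per_year=0, users=50, implementation=0)
vendor_b = three_year_tco(annual_licence=18_000, annual_module_fees=4_000,
                          per_user_per_year=100, users=50, implementation=35_000)
print(vendor_a, vendor_b)  # prints: 90000 116000
```

In this example, vendor B has the lower headline licence. Its three-year cost is higher once implementation, modules, and per-user fees are counted.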

How long does implementation take?

For organisations that already run an ISO 27001 management system, a platform with pre-built ISO 42001 content can be usable within two to four weeks and audit ready within three to six months, depending on scope and AI use cases. Organisations starting from zero usually take six to nine months to reach audit ready. The biggest variable is internal resource, not the tool itself.

Can one platform run AI governance and ISO 27001?

Yes, and it is usually the right choice. Both ISO 42001 and ISO 27001 follow the Annex SL high-level structure, and Annex D of ISO 42001 addresses integrating an AI management system with other management system standards, including ISO 27001. A multi-standard platform lets you run a single risk register, a single evidence library, a single audit programme, and a combined management review, rather than duplicating the effort across two tools.