What Is AI Risk Management?

AI risk management is the structured, repeatable process an organisation uses to identify, assess, treat, and monitor risks that arise from the development, provision, or use of AI systems. It covers the full life cycle of an AI system, from problem framing and data sourcing through model design, validation, deployment, and eventual retirement.

Unlike traditional information security risk, which focuses primarily on confidentiality, integrity, and availability of information, AI risk management has to account for a wider set of concerns. Model bias, lack of explainability, data drift, misuse of outputs, societal impact, and the behaviour of third-party foundation models all sit squarely within scope. That is why ISO 42001 requires a dedicated AI risk assessment process rather than folding AI risk into an existing infosec register.

Practically, AI risk management answers four questions for every AI use case in the business:

- What could go wrong with this AI system, for whom, and how badly?

- How likely is each of those outcomes given the controls we already have?

- What are we going to do about the risks that exceed our tolerance?

- How will we know, after deployment, whether our assessment is still valid?

Done well, it gives the board, the AI governance committee, and the engineering teams a shared view of which AI initiatives are safe to proceed, which need additional controls, and which should be paused or rescoped.

How Does ISO 42001 Handle AI Risk (Clause 6.1.2 vs Clause 6.1.4)?

ISO 42001 separates AI risk management into two related but distinct activities, and conflating them is one of the most common implementation mistakes.

Clause 6.1.2: AI risk assessment. This is the traditional risk lens focused on the organisation itself. You identify AI-related risks, analyse their likelihood and consequence against your organisational risk criteria, and decide how to treat them. It is the process that populates your AI risk register.

Clause 6.1.4: AI system impact assessment. This is the outward-looking lens. It assesses the potential impact of an AI system on individuals, groups of individuals, and society, covering fairness, safety, human oversight, and fundamental rights. Annex A.5 provides the control set that operationalises this. Detailed guidance is covered in our dedicated AI impact assessments page.

Both are normative. Both must be documented. Both feed into your Statement of Applicability and your AI policy. But they answer different questions, have different inputs, and typically involve different stakeholders. Running them as one combined exercise tends to drop the societal impact lens, and that lens is exactly what auditors and regulators look for.

Normative implementation guidance for both activities sits in Annex B. Annex C provides informative guidance on AI-related risk sources, which is a useful starting taxonomy when you are first populating your register.

How Does It Relate to Information Security Risk (ISO 27005)?

AI risk management does not replace information security risk management. Many AI systems store, process, or transmit personal data and are hosted on the same infrastructure as other regulated workloads. ISO 27005 remains the right reference for the infosec risk process. The practical model is:

- Information security risks relating to the AI system (confidentiality, integrity, availability of training data, model artefacts, APIs) sit in the ISO 27001 risk register

- AI specific risks (bias, explainability, drift, unintended use, societal harm) sit in the AI risk register under Clause 6.1.2

- Cross-references link the two so a single risk event can be tracked across both lenses without duplication

The gap between information security and AI governance is precisely what ISO 42001 Clause 6.1.2 is designed to close.

Where Does NIST AI RMF Fit?

The NIST AI Risk Management Framework is a voluntary US framework organised around four functions: Govern, Map, Measure, and Manage. It is complementary to ISO 42001 rather than competing. Organisations already using NIST AI RMF can map its functions directly onto the ISO 42001 clauses (Govern aligns with Clauses 4 and 5, Map with Clause 6.1, Measure with Clause 9, Manage with Clauses 8 and 10). If you are building for an international audience, ISO 42001 gives you the certifiable management system. NIST AI RMF gives you a widely recognised vocabulary and useful playbooks for the underlying activities.
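The mapping described above can be kept as a simple lookup so that teams already working in NIST AI RMF terms can find the matching ISO 42001 clauses. This is a minimal sketch: the dictionary name `NIST_TO_ISO42001` and the helper `iso_clauses_for` are hypothetical, and the clause assignments are only those stated in the paragraph above.

```python
# Illustrative crosswalk based on the mapping described in the text.
# The names below are hypothetical and not part of either framework.
NIST_TO_ISO42001 = {
    "Govern": ["Clause 4", "Clause 5"],
    "Map": ["Clause 6.1"],
    "Measure": ["Clause 9"],
    "Manage": ["Clause 8", "Clause 10"],
}


def iso_clauses_for(nist_function: str) -> list[str]:
    """Return the ISO 42001 clauses associated with a NIST AI RMF function."""
    key = nist_function.strip().capitalize()
    if key not in NIST_TO_ISO42001:
        raise ValueError(f"Unknown NIST AI RMF function: {nist_function!r}")
    return NIST_TO_ISO42001[key]


print(iso_clauses_for("manage"))
```

A crosswalk like this is a starting point for a gap analysis, not a substitute for reading the clauses themselves.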

What Are the Main Categories of AI Risk?

A good AI risk taxonomy prevents you from missing entire classes of risk. Annex C of ISO 42001 provides an informative list of AI-related risk sources that most organisations tailor to their own context. In practice, eight categories cover the vast majority of AI risks, and most entries in your register will map to one or more of them.

| Category | Example | ISO 42001 control(s) | Typical treatment |
|---|---|---|---|
| Bias and fairness | Credit scoring model systematically under-scores a protected group | A.6.2.2, A.6.2.4, A.7.4 | Mitigate via balanced training data, fairness testing, human review |
| Explainability and transparency | Medical triage model cannot justify its recommendations to clinicians | A.6.2.4, A.8.2, A.8.3 | Mitigate via interpretable models, documentation, model cards |
| Security | Prompt injection attack exfiltrates confidential data from an LLM | A.6.2.3, A.7.3, A.8.4 | Mitigate via input validation, guardrails, threat modelling |
| Privacy | Training data contains personal data used without a lawful basis | A.7.2, A.7.4, A.8.2 | Mitigate via data minimisation, anonymisation, DPIA alignment |
| Safety | Autonomous system causes physical harm through unexpected behaviour | A.6.2.4, A.9.3, A.9.4 | Mitigate via validation, staged rollout, kill switches, human oversight |
| Societal | Content moderation model amplifies misinformation or suppresses legitimate speech | A.5.2, A.5.3, A.5.4 | Mitigate via impact assessment, stakeholder engagement, ongoing review |
| Operational | Model drift causes forecast accuracy to degrade after six months in production | A.6.2.6, A.6.2.7, A.6.2.8 | Mitigate via monitoring, retraining triggers, performance thresholds |
| Supply chain | Foundation model provider changes behaviour without warning, breaking a downstream use case | A.10.2, A.10.3, A.10.4 | Mitigate via supplier due diligence, contracts, fallback providers |

Most organisations adopt this taxonomy as the backbone of their AI risk register, then add sector specific categories (for example, financial services adds model risk management under SR 11-7, healthcare adds clinical safety). The key is that every AI use case is screened against every category, even briefly, so risks are not missed by accident.
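The rule that every use case is screened against every category can be checked mechanically. The sketch below assumes the eight categories from the table; the `RiskCategory` enum and the `unscreened_categories` helper are hypothetical names introduced for illustration.

```python
from enum import Enum


class RiskCategory(Enum):
    """The eight categories from the table above."""
    BIAS_FAIRNESS = "Bias and fairness"
    EXPLAINABILITY = "Explainability and transparency"
    SECURITY = "Security"
    PRIVACY = "Privacy"
    SAFETY = "Safety"
    SOCIETAL = "Societal"
    OPERATIONAL = "Operational"
    SUPPLY_CHAIN = "Supply chain"


def unscreened_categories(screened: set[RiskCategory]) -> list[RiskCategory]:
    """Return the categories a use case has not yet been screened against."""
    return [category for category in RiskCategory if category not in screened]


# Hypothetical use case that has only been screened against three categories.
done = {RiskCategory.SECURITY, RiskCategory.PRIVACY, RiskCategory.BIAS_FAIRNESS}
for gap in unscreened_categories(done):
    print("Not yet screened:", gap.value)
```

Sector-specific categories could be added as further enum members without changing the check.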

How Do You Conduct an AI Risk Assessment?

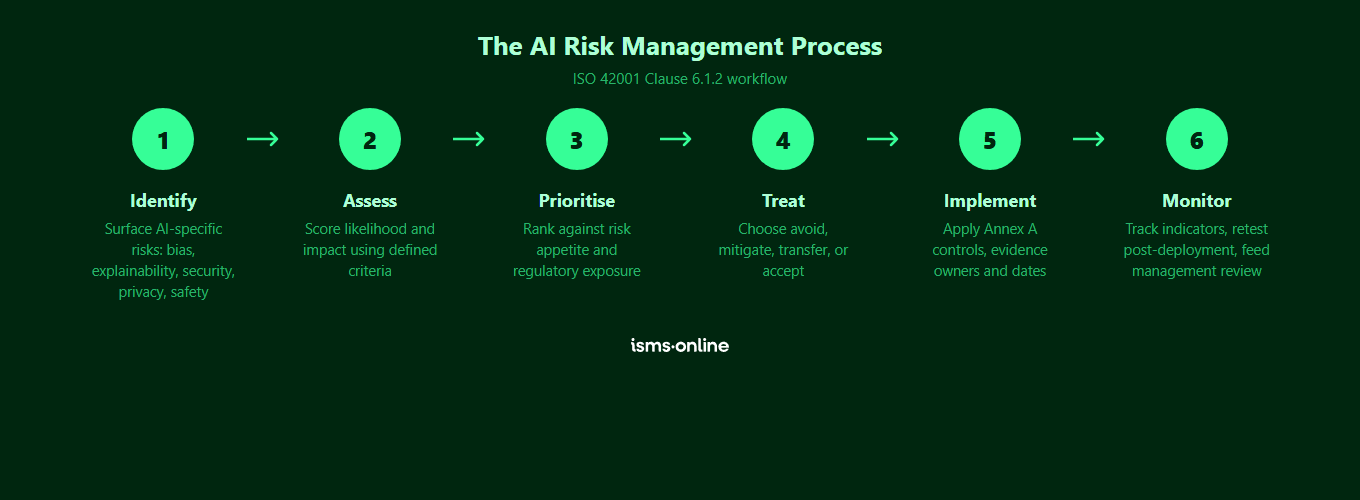

The assessment process under Clause 6.1.2 follows the familiar Plan-Do-Check-Act rhythm of ISO management systems, but with AI specific inputs at each stage. A practical, repeatable method has six steps.

Step 1: Define Scope and Risk Criteria

Before you assess anything, define what counts as an AI system in your organisation (this should already be in your AI Management System (AIMS) scope statement) and what risk criteria you will use. Risk criteria include your likelihood and consequence scales, your risk acceptance threshold, and the categories of consequence you care about (financial, operational, regulatory, reputational, safety, societal).

Step 2: Identify AI Use Cases and Assets

Inventory every AI system in scope. For each one, capture intended use, data inputs, model type (proprietary, fine-tuned, third party foundation model), users, affected parties, deployment environment, and criticality. This inventory is the foundation of the rest of the process.
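One way to keep inventory entries consistent is a fixed record with the attributes listed above. This is a sketch only: the class name `AISystemRecord`, the `missing_fields` helper, the criticality scale, and the example system are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class AISystemRecord:
    """One inventory entry, with the attributes listed in Step 2."""
    name: str
    intended_use: str
    data_inputs: list[str]
    model_type: str  # e.g. "proprietary", "fine-tuned", "third-party foundation model"
    users: list[str]
    affected_parties: list[str]
    deployment_environment: str
    criticality: str  # assumed scale: "low", "medium", "high"


def missing_fields(record: AISystemRecord) -> list[str]:
    """List attributes left empty, so incomplete entries can be followed up."""
    return [name for name, value in vars(record).items() if not value]


# Hypothetical entry with the affected parties not yet identified.
record = AISystemRecord(
    name="Invoice classifier",
    intended_use="Route supplier invoices to the right approval queue",
    data_inputs=["invoice PDFs", "supplier master data"],
    model_type="fine-tuned",
    users=["accounts payable team"],
    affected_parties=[],
    deployment_environment="internal cloud tenant",
    criticality="medium",
)
print(missing_fields(record))
```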

Step 3: Identify Risks Using the Taxonomy

Walk each AI system through the eight risk categories above. Use Annex C risk sources, threat modelling techniques, and structured brainstorming with a cross-functional group (data science, engineering, security, legal, product, and where relevant the business unit that will use the output). Capture each risk as a specific event with a cause and consequence, not a generic label.

Step 4: Analyse Likelihood and Consequence

Score each risk against your criteria. Likelihood should consider the current control environment, not the uncontrolled state. Consequence should consider all affected parties, not just the organisation — this is where the bridge to Clause 6.1.4 impact assessment matters. Document your reasoning; an auditor will ask how you arrived at a high or low score.

Step 5: Evaluate Against Risk Criteria

Plot each risk on your matrix and compare against your risk acceptance threshold. Any risk above the threshold needs a treatment decision. Any risk at or below it can be accepted with an explicit rationale recorded in the register.
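Steps 4 and 5 can be sketched as a small scoring routine. The 1 to 5 scales, the multiplication of likelihood by consequence, and the threshold value of 8 are assumptions chosen for illustration; your own risk criteria from Step 1 define the real scales and threshold. The names `Risk`, `evaluate`, and `ACCEPTANCE_THRESHOLD` are hypothetical.

```python
from dataclasses import dataclass

# Assumed threshold: scores above this value need a treatment decision.
ACCEPTANCE_THRESHOLD = 8


@dataclass
class Risk:
    cause: str
    event: str
    consequence_description: str
    likelihood: int   # assumed scale 1 (rare) to 5 (almost certain), with current controls
    consequence: int  # assumed scale 1 (negligible) to 5 (severe), across all affected parties

    def __post_init__(self) -> None:
        for label, value in (("likelihood", self.likelihood),
                             ("consequence", self.consequence)):
            if not 1 <= value <= 5:
                raise ValueError(f"{label} must be between 1 and 5, got {value}")

    @property
    def score(self) -> int:
        """Simple likelihood x consequence score on a 5x5 matrix."""
        return self.likelihood * self.consequence


def evaluate(risk: Risk, threshold: int = ACCEPTANCE_THRESHOLD) -> str:
    """Above the threshold needs treatment; at or below can be accepted with rationale."""
    return "treat" if risk.score > threshold else "accept with rationale"


# Hypothetical risk, written as a specific event with a cause and a consequence.
drift = Risk(
    cause="Seasonal change in customer behaviour",
    event="Forecast model accuracy degrades in production",
    consequence_description="Stock shortages and lost revenue",
    likelihood=3,
    consequence=4,
)
print(drift.score, evaluate(drift))
```

Multiplying the two scales is only one common convention; a lookup matrix works equally well if your criteria weight consequence more heavily than likelihood.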

Step 6: Document and Review

The AI risk register is documented information under Clause 7.5. It needs owners, review cycles, version history, and approval. It feeds directly into the Statement of Applicability — controls from Annex A are selected and justified based on the risks in this register.

How Do You Treat AI Risks?

For every risk above your acceptance threshold, Clause 6.1.3 requires a treatment decision. The four classic options apply, with AI specific nuances:

- Avoid. Do not build, deploy, or use the AI system. Appropriate where the residual risk is unacceptable even with strong controls (for example, a use case that automates a decision with significant legal impact on individuals without a human in the loop).

- Mitigate. Apply controls to reduce likelihood, consequence, or both. This is the most common treatment. Controls are drawn from Annex A, supplemented with sector-specific or use-case-specific measures. Typical mitigations include balanced training data, fairness testing, input and output guardrails, human oversight, staged rollout, and ongoing monitoring.

- Transfer. Shift risk through contract, insurance, or supplier responsibility. Useful for supply chain risks (for example, contractual SLAs with a foundation model provider) but be careful: you can transfer financial liability but rarely transfer accountability, especially to regulators.

- Accept. Retain the risk with explicit, documented justification and an owner. Only appropriate for risks at or below the acceptance threshold, or where treatment is demonstrably not cost effective and consequences are bounded.

Each treatment decision needs a named owner, a target date, and evidence of completion linked back to the register. A treatment plan without evidence of execution is one of the most common nonconformities in ISO 42001 certification audits.
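The requirement for an owner, a target date, and evidence can be checked before an audit does it for you. The sketch below is illustrative: the `Treatment` record, its field names, and the `treatment_gaps` helper are hypothetical and not taken from the standard.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional

VALID_OPTIONS = {"avoid", "mitigate", "transfer", "accept"}


@dataclass
class Treatment:
    risk_id: str
    option: str
    owner: Optional[str] = None
    target_date: Optional[date] = None
    evidence_ref: Optional[str] = None  # hypothetical link to evidence of completion


def treatment_gaps(treatment: Treatment, today: date) -> list[str]:
    """Return the reasons a treatment record would not stand up to review."""
    gaps = []
    if treatment.option not in VALID_OPTIONS:
        gaps.append(f"unknown treatment option {treatment.option!r}")
    if not treatment.owner:
        gaps.append("no named owner")
    if treatment.target_date is None:
        gaps.append("no target date")
    elif treatment.target_date < today and not treatment.evidence_ref:
        gaps.append("target date passed without evidence of completion")
    return gaps


# Hypothetical overdue mitigation with no evidence attached.
plan = Treatment(
    risk_id="R-001",
    option="mitigate",
    owner="Head of Data Science",
    target_date=date(2024, 3, 31),
)
print(treatment_gaps(plan, today=date(2024, 6, 1)))
```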

How Do You Monitor AI Risks Post-Deployment?

AI risk is not static. A model that was safe at launch can become unsafe six months later because data drifts, user behaviour shifts, the regulatory environment changes, or an upstream foundation model is updated. Clause 9 performance evaluation and Annex A.6.2.6 to A.6.2.8 operational monitoring controls require you to keep watching.

A pragmatic monitoring programme has five signals:

- Model performance. Accuracy, precision, recall, calibration, and fairness metrics tracked against thresholds set at deployment. Breaches trigger retraining or rollback.

- Data drift. Statistical comparison of production input distributions against training distributions. Significant drift triggers an assessment of whether the model is still fit for purpose.

- Incidents and near misses. A formal channel for users, affected parties, and internal staff to report AI related concerns, with triage, root cause analysis, and corrective action logged under Clause 10.

- Control effectiveness. Periodic checks that the mitigations in the treatment plan are actually working. Annex A.6.2.6 explicitly requires verification of operational controls.

- External context. New regulation, new threat patterns, changes to third party models, and emerging best practice. This feeds back into the risk assessment at each scheduled review.

The outputs feed into management review (Clause 9.3) and drive updates to the risk register, the Statement of Applicability, and the AI policy. The cycle is continuous, not annual.
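For the data drift signal, one widely used statistic is the Population Stability Index (PSI), which compares the binned distribution of a feature in production against its distribution at training time. ISO 42001 does not prescribe PSI or any other metric, so treat this as one possible sketch. The function name `psi`, the ten equal-width bins, and the alert threshold of 0.2 (a common rule of thumb) are assumptions.

```python
import math


def psi(expected: list[float], actual: list[float], bins: int = 10) -> float:
    """Population Stability Index between a training sample and a production sample."""
    if not expected or not actual:
        raise ValueError("both samples must be non-empty")
    lo, hi = min(expected), max(expected)
    if lo == hi:
        raise ValueError("expected sample has no spread to bin")
    width = (hi - lo) / bins

    def proportions(values: list[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            # Values outside the training range are clamped into the edge bins.
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        # A small floor avoids log(0) when a bin is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


DRIFT_ALERT_THRESHOLD = 0.2  # assumed rule-of-thumb value, tune to your own criteria

# Hypothetical samples: production inputs have shifted towards higher values.
training = [i / 100 for i in range(100)]
production = [0.5 + i / 200 for i in range(100)]

value = psi(training, production)
if value > DRIFT_ALERT_THRESHOLD:
    print(f"PSI {value:.2f}: significant drift, reassess fitness for purpose")
```

A breach of the threshold is a trigger for human assessment rather than an automatic verdict that the model is unfit.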

How Does ISMS.online Structure AI Risk Management?

ISMS.online provides a purpose built home for the full AI risk management process, aligned to Clause 6.1.2 and the normative guidance in Annex B. You get an AI risk register that is integrated with the rest of your AIMS rather than living in a separate spreadsheet.

The platform gives you:

- A dedicated AI risk register distinct from your information security risk register, with fields for AI specific attributes (use case, data sources, model type, affected parties, risk category) alongside standard likelihood, consequence, treatment, and owner fields

- A pre-loaded AI risk taxonomy covering the eight categories above plus Annex C risk sources, so teams start with a comprehensive list rather than a blank page

- Linked AI system impact assessments for Clause 6.1.4, so the outward facing impact view stays connected to the organisational risk view without duplication

- Direct control linking from every risk to the relevant Annex A controls, to the evidence that demonstrates the treatment, and through to the Statement of Applicability

- Review workflows and automated reminders so risks are reviewed on their defined cycle, not when someone remembers

- Cross-reference with ISO 27001 risks where an event has both information security and AI dimensions, preventing double-counting and contradictory treatment plans

- Reporting views for the board, the AI governance committee, and certification auditors, produced from the same underlying data

The result is an AI risk process that an auditor can walk end to end in under ten minutes, and that the organisation can actually operate between audits.

Why Choose ISMS.online for AI Risk Management?

Most GRC tools treat AI risk as an afterthought bolted onto an infosec model. ISMS.online was designed with AI governance as a first class capability. Here is what that means in practice:

- Separate but connected registers. AI risk (Clause 6.1.2), AI system impact assessment (Clause 6.1.4), and information security risk (ISO 27005) sit in distinct but cross-referenced registers, so each lens stays sharp while data is shared where it should be.

- Pre-built AI risk taxonomy. Annex C risk sources and the eight category model are pre-loaded, so your team identifies risks against a structured framework rather than inventing one.

- Live mapping to Annex A controls. Every risk links directly to the controls that treat it, the evidence that proves the treatment works, and the Statement of Applicability entry that justifies it.

- Built for Annex B guidance. The workflow follows the normative implementation guidance in Annex B, so your process is standards aligned by default rather than requiring bespoke configuration.

- Integrated with the full AIMS. Risks connect to your AI policy, your impact assessments, your audit programme (Clause 9.2), and your corrective actions (Clause 10), so nothing falls through the gap.

- Assured Results Method. A proven implementation path and human support that has helped hundreds of organisations reach certification first time, with AI risk management embedded from the start rather than bolted on late.

Whether you are building your first AI risk register or maturing an existing one, ISMS.online gives you the structure and the tooling to operate Clause 6.1.2 as a living process. For wider context, see our implementation guide.

Ready to see the platform in action? Book a demo.

FAQs

What is the difference between AI risk assessment (Clause 6.1.2) and AI system impact assessment (Clause 6.1.4)?

Clause 6.1.2 is the inward facing organisational risk lens — what could go wrong for the organisation, its objectives, and its operations, and how will we treat it. Clause 6.1.4 is the outward facing lens — what could the AI system do to individuals, groups, and society, covering fairness, safety, and fundamental rights. Both are normative, both must be documented, and they feed each other. Running them as one combined activity usually means the societal impact lens is lost, which is a common audit finding.

Can I reuse my ISO 27001 risk register for AI risk management?

No, not as a single combined register. Information security risk (ISO 27005) and AI risk (ISO 42001 Clause 6.1.2) have overlapping but distinct scopes. Infosec covers confidentiality, integrity, and availability of information. AI risk additionally covers bias, explainability, drift, safety, and societal impact — concerns that do not fit neatly into a CIA model. The right pattern is two connected registers with cross-references where a single event has both dimensions. ISMS.online supports exactly this arrangement.

Is NIST AI RMF an alternative to ISO 42001 for risk management?

They are complementary, not alternatives. NIST AI RMF is a voluntary US framework organised around Govern, Map, Measure, and Manage functions, with excellent practitioner guidance. ISO 42001 is an internationally certifiable management system standard. Organisations often use NIST AI RMF vocabulary and playbooks to execute the activities that ISO 42001 requires. The two map cleanly onto each other and many organisations implement both in parallel.

What categories of AI risk should I cover in my register?

At minimum: bias and fairness, explainability and transparency, security, privacy, safety, societal impact, operational (drift, performance, availability), and supply chain (third party models, data providers). Annex C of ISO 42001 provides an informative list of AI-related risk sources that aligns with this taxonomy. Sector specific categories such as model risk management for financial services or clinical safety for healthcare should be added where relevant.

How often should I review the AI risk register?

At least annually as a full review, feeding into management review under Clause 9.3. Individual risks should have their own review cycles based on criticality — typically quarterly for high risks and six monthly for medium risks. Any material change should trigger an ad-hoc review: new AI use case, significant model update, new regulation, a reported incident, or a change to a key third party provider. The register is a living document, not an annual compliance artefact.
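The review cadence above can be turned into due dates so that nothing relies on memory. This is a minimal sketch: the quarterly and six-monthly intervals come from the answer above, while the twelve-month interval for low risks, the `REVIEW_INTERVAL_MONTHS` table, and the helper names are assumptions.

```python
import calendar
from datetime import date

# Intervals in months. "high" and "medium" follow the cadence described above;
# "low" is an assumption aligned with the annual full review.
REVIEW_INTERVAL_MONTHS = {"high": 3, "medium": 6, "low": 12}


def add_months(start: date, months: int) -> date:
    """Add calendar months, clamping to the last day of shorter months."""
    total = start.month - 1 + months
    year = start.year + total // 12
    month = total % 12 + 1
    last_day = calendar.monthrange(year, month)[1]
    return date(year, month, min(start.day, last_day))


def next_review(last_review: date, criticality: str) -> date:
    """Return the date by which a risk should next be reviewed."""
    if criticality not in REVIEW_INTERVAL_MONTHS:
        raise ValueError(f"Unknown criticality: {criticality!r}")
    return add_months(last_review, REVIEW_INTERVAL_MONTHS[criticality])


print(next_review(date(2024, 1, 15), "medium"))
```

Ad-hoc triggers such as incidents or model updates still need their own path, since they do not follow a calendar.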

Does ISO 42001 require automated AI risk monitoring?

The standard does not prescribe automation, but it does require ongoing monitoring of AI system performance and control effectiveness (Clause 9.1 and Annex A.6.2.6 to A.6.2.8). For anything beyond a handful of low-risk AI systems, automated monitoring of model performance, data drift, and control effectiveness is usually the only practical way to meet the requirement. Manual monitoring at scale tends to produce stale data and missed incidents, which surface as nonconformities at surveillance audit.

Who should own AI risk management in the organisation?

Accountability should sit with a named executive, usually the CISO, Chief Risk Officer, or a dedicated Head of AI Governance, depending on organisational size and AI maturity. Day-to-day ownership sits with an AI governance committee or equivalent cross-functional group drawn from data science, engineering, security, legal, privacy, and the business units using AI. Individual risks each have a named owner responsible for treatment and monitoring. Clause 5.3 requires these roles, responsibilities, and authorities to be formally assigned and communicated.