Does the EU AI Act Apply to UK Businesses?

Yes, the EU AI Act applies to UK businesses if they place AI systems on the EU market, or if the output of their AI system is used in the EU — regardless of where the business is established. This extraterritorial reach mirrors the GDPR. UK businesses serving EU customers must comply with the EU AI Act obligations in addition to any UK sector-specific AI rules.

In short, Brexit does not exempt UK organisations from the EU AI Act. If your AI product is used by, marketed to, or generates outputs consumed in any of the 27 EU member states, you are in scope. The practical question is not whether you are in scope, but which obligations apply and how you evidence compliance.

For a full walkthrough of the Act itself, see our EU AI Act compliance hub. This page focuses specifically on UK applicability, obligations, and how ISO 42001 gives UK organisations a structured route to compliance.

When Does the EU AI Act Reach Beyond the EU?

Article 2 of the EU AI Act sets out its scope, and the extraterritorial triggers are broad. A UK business is in scope of the Act if any of the following are true:

- You are a provider placing an AI system on the EU market, irrespective of whether you are established in the EU. If your AI product or general-purpose AI (GPAI) model is put into service or made available to users in the EU, you are caught.

- You are a deployer established in the EU, or a deployer outside the EU whose AI system output is used in the EU.

- You are an importer or distributor placing a third-party AI system on the EU market from the UK.

- You are a product manufacturer placing an AI system on the EU market together with your product under your own name or trademark.

- You are an authorised representative of a non-EU provider, established in the EU.

The key phrase is “output used in the Union”. A UK SaaS company whose AI feature is accessed by an EU customer, or whose model produces recommendations consumed by an EU business, is in scope even without any EU entity, server, or employee.
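As a rough illustration of how these triggers combine, the following sketch records how one AI system touches the EU and lists which triggers it meets. The `AISystemExposure` record and its field names are hypothetical, chosen for this example only; they simplify Article 2 and are not a legal test.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AISystemExposure:
    """Hypothetical record of how one AI system touches the EU (illustrative only)."""
    placed_on_eu_market: bool     # sold or otherwise made available to EU users
    put_into_service_in_eu: bool  # supplied for first use in the EU
    output_used_in_eu: bool       # scores, recommendations, or content consumed in the EU


def in_scope_triggers(exposure: AISystemExposure) -> list[str]:
    """Return the scope triggers described above that this exposure record meets."""
    triggers = []
    if exposure.placed_on_eu_market:
        triggers.append("placed on the EU market")
    if exposure.put_into_service_in_eu:
        triggers.append("put into service in the EU")
    if exposure.output_used_in_eu:
        triggers.append("output used in the EU")
    return triggers


# A UK SaaS company with no EU entity, server, or employee whose model
# output is consumed by an EU business.
uk_saas = AISystemExposure(
    placed_on_eu_market=False,
    put_into_service_in_eu=False,
    output_used_in_eu=True,
)
print(in_scope_triggers(uk_saas))
```

An empty list only means none of these simplified triggers was recorded. It does not replace a role-by-role reading of Article 2.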

How the Extraterritorial Reach Compares to GDPR

UK teams already familiar with the GDPR’s extraterritorial scope will find the pattern familiar. Where GDPR reaches non-EU controllers that offer goods or services to EU data subjects or monitor their behaviour, the EU AI Act reaches non-EU providers and deployers whose AI systems or outputs touch the EU market. The practical lesson is the same: geography of incorporation does not define scope. Where your users and outputs go does.

What Are the UK’s Own AI Rules?

The UK has deliberately taken a different path. Rather than a single horizontal AI law, the UK Government’s 2023 White Paper “A pro-innovation approach to AI regulation” set out a sector-led, principles-based framework. The approach rests on five cross-sectoral principles:

- Safety, security, and robustness

- Appropriate transparency and explainability

- Fairness

- Accountability and governance

- Contestability and redress

These principles are implemented by existing UK regulators (ICO, FCA, Ofcom, CMA, MHRA and others) within their existing remits, rather than by a new statutory AI authority. The UK also established the AI Safety Institute (now the AI Security Institute) to evaluate frontier models, and has signalled targeted legislation for the most capable models while keeping the broader regime principles-based.

For UK organisations, this creates a twin-track reality:

- UK-only activity: sector regulator expectations, existing law (data protection, equalities, consumer, product safety, financial services), and the cross-sectoral principles.

- EU market activity: the full EU AI Act risk-tier regime, on top of the UK obligations.

An AI governance programme that only addresses UK expectations is insufficient for any business with EU exposure. Equally, an EU AI Act project that ignores UK regulator expectations misses a large part of the picture for a UK-headquartered organisation. Our ISO 42001 vs EU AI Act comparison explains how a single management system can satisfy both.

Which UK Businesses Need to Comply with the EU AI Act?

Not every UK business is automatically in scope. The Act applies to specific roles across the AI value chain. UK organisations should work through the following test.

| Role under the EU AI Act | Typical UK business example | In scope if… |
|---|---|---|
| Provider | UK SaaS or AI product company selling to EU customers | You develop an AI system or GPAI model and place it on the EU market, or put it into service in the EU, under your own name or trademark |
| Deployer | UK enterprise using an AI system where outputs affect EU users | You use an AI system under your authority and the output is used in the EU (for example, screening EU job candidates or processing EU customer queries) |
| Importer | UK reseller bringing a non-EU AI product into the EU | You place an AI system from a non-EU provider on the EU market |
| Distributor | UK partner making an AI system available in the EU supply chain | You make an AI system available on the EU market without changing its properties |
| Product manufacturer | UK manufacturer embedding AI into a regulated product (medical device, vehicle component) | You place the product with the AI system on the EU market under your name |
| Authorised representative | EU-established entity acting for a UK provider | You are formally appointed by a UK provider of a high-risk AI system or GPAI model |

A UK business can hold more than one role. A UK scale-up that builds its own model, embeds it in a product, and also resells third-party AI features can be a provider and a distributor at the same time, and a deployer as well wherever it uses AI systems in its own operations. Each role carries its own obligations, which is why a clear scoping exercise is the first practical step.
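One way to record the outcome of that scoping exercise is a simple register that allows several roles per AI system. The sketch below is a minimal illustration; the `Role` enum, the `AISystemEntry` record, and the example system names are all hypothetical.

```python
from dataclasses import dataclass, field
from enum import Enum


class Role(Enum):
    """Roles from the table above."""
    PROVIDER = "provider"
    DEPLOYER = "deployer"
    IMPORTER = "importer"
    DISTRIBUTOR = "distributor"
    PRODUCT_MANUFACTURER = "product manufacturer"
    AUTHORISED_REPRESENTATIVE = "authorised representative"


@dataclass
class AISystemEntry:
    """Hypothetical register entry: one AI system and every role held for it."""
    name: str
    eu_exposure: bool
    roles: set[Role] = field(default_factory=set)


def roles_to_scope(register: list[AISystemEntry]) -> dict[str, list[str]]:
    """Map each EU-exposed system to its roles, listed alphabetically."""
    return {
        entry.name: sorted(role.value for role in entry.roles)
        for entry in register
        if entry.eu_exposure
    }


# Illustrative entries only; the system names are made up.
register = [
    AISystemEntry("cv-screening-model", True, {Role.PROVIDER, Role.DEPLOYER}),
    AISystemEntry("resold-chat-widget", True, {Role.DISTRIBUTOR}),
    AISystemEntry("internal-uk-forecasting", False, {Role.DEPLOYER}),
]
print(roles_to_scope(register))
```

Keeping roles as a set per system, rather than one role per organisation, reflects the point that obligations attach to each role held for each system.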


What Are the Key EU AI Act Obligations for UK Providers and Deployers?

The EU AI Act classifies AI systems by risk: prohibited, high-risk, limited-risk, and minimal-risk, with a separate regime for general-purpose AI (GPAI) models. Obligations scale with risk. The heaviest obligations fall on providers of high-risk AI systems and providers of GPAI models with systemic risk.

Obligations on Providers

- Prohibited practices (Article 5): no UK provider may place on the EU market or put into service AI systems using prohibited techniques (for example, untargeted scraping of facial images to build recognition databases, social scoring, certain emotion recognition in workplaces and schools).

- High-risk AI systems (Chapter III): establish and maintain a risk management system across the lifecycle, ensure data governance for training, validation, and test datasets, produce technical documentation, keep automatic logs, ensure human oversight, achieve appropriate accuracy, robustness, and cybersecurity, register the system in the EU database, conduct conformity assessment, and affix CE marking.

- GPAI models: draw up and maintain technical documentation (available to the AI Office and national authorities on request, with corresponding information for downstream providers), publish a sufficiently detailed summary of training content, implement a copyright policy, and, for models with systemic risk, conduct model evaluations, assess and mitigate systemic risks, track and report serious incidents, and ensure cybersecurity protection.

- Transparency (Article 50): ensure users are informed when they are interacting with an AI system, and that synthetic content is marked as AI-generated. The related duties to label deep fakes and to disclose AI-generated text published on matters of public interest fall on deployers.

Obligations on Deployers

- Use high-risk AI systems in accordance with the instructions of use

- Assign human oversight to competent, trained individuals

- Ensure input data is relevant and sufficiently representative for the intended purpose

- Monitor the operation of the AI system and inform the provider and market surveillance authority of serious incidents and malfunctions

- Retain automatically generated logs for at least six months

- Conduct a fundamental rights impact assessment where required (certain public sector and essential service deployers)

- Inform workers’ representatives and affected workers before putting a high-risk AI system into service in the workplace

These obligations map cleanly onto an ISO-style management system. The controls under Annex A of ISO 42001 address data governance, lifecycle controls, impact assessment, human oversight, third-party relationships, and information to interested parties, which is why many UK organisations use ISO 42001 as the spine of their EU AI Act response. See our full Annex A controls reference for detail.

When Does the EU AI Act Come Into Force?

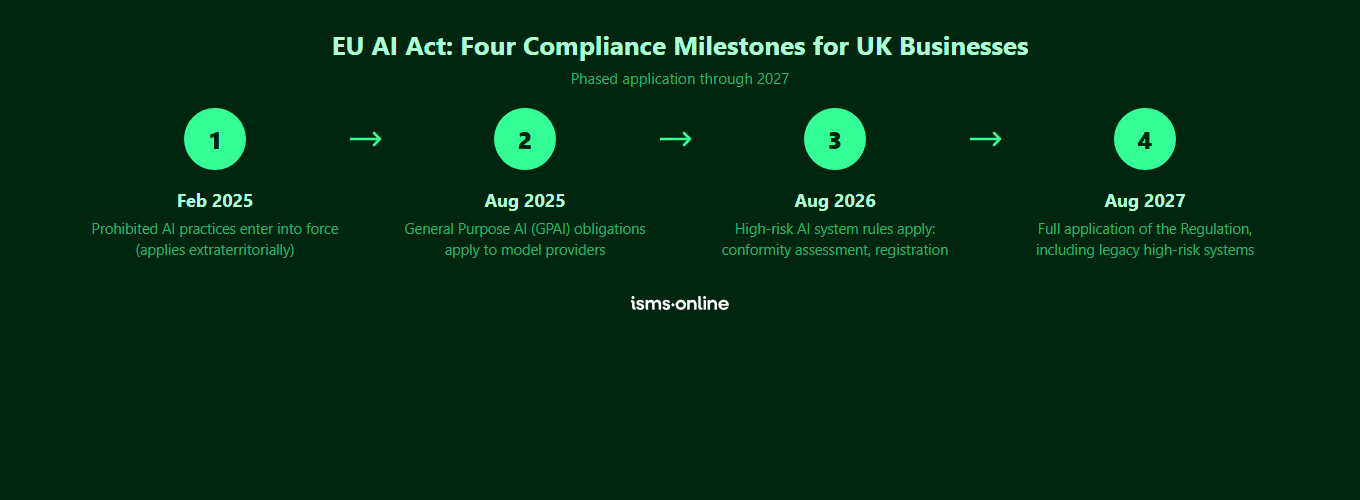

The EU AI Act entered into force on 1 August 2024, but its obligations apply in phases. UK organisations should plan against the following milestones.

| EU AI Act obligation | Effective date | Who it applies to | UK implication |
|---|---|---|---|
| Prohibited practices (Article 5) and AI literacy obligations (Article 4) | 2 February 2025 | All providers and deployers with EU exposure | UK providers must immediately remove prohibited use cases from EU-facing products and ensure staff have appropriate AI literacy |
| General-purpose AI (GPAI) model obligations, governance rules, notified body provisions, and penalty provisions (except fines for GPAI model providers) | 2 August 2025 | Providers of GPAI models placed on the EU market | UK AI labs and foundation model providers must maintain technical documentation, publish training content summaries, and put copyright policies in place for models available in the EU |
| High-risk AI system obligations (Annex III systems) | 2 August 2026 | Providers and deployers of high-risk AI systems | UK providers of high-risk systems (recruitment, credit scoring, essential services, law enforcement support, and similar) must complete conformity assessment before placing systems on the EU market |
| Full application, including high-risk AI embedded in regulated products (Annex I) | 2 August 2027 | Providers of AI embedded in products covered by EU harmonisation legislation | UK manufacturers of regulated products (medical devices, machinery, toys, vehicles) with AI components must meet EU AI Act requirements alongside existing CE marking |

The phasing matters for UK planning. Prohibited practices and AI literacy are already live. GPAI obligations are live for foundation-model providers. High-risk obligations arrive in August 2026, which is the practical deadline for most commercial AI products targeting EU customers. Waiting until 2027 is not an option. For a deeper read on each phase, see our guide on everything you need to know about the EU AI Act.
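For planning purposes, the milestones can be captured as data and queried by date. This is a minimal sketch: the `MILESTONES` list and the `obligations_live_on` helper are hypothetical names, and the labels are shortened from the table above.

```python
from datetime import date

# Application dates taken from the milestones listed above; labels are shortened.
MILESTONES = [
    (date(2025, 2, 2), "Prohibited practices (Article 5) and AI literacy (Article 4)"),
    (date(2025, 8, 2), "GPAI model obligations and governance rules"),
    (date(2026, 8, 2), "High-risk AI system obligations (Annex III systems)"),
    (date(2027, 8, 2), "High-risk AI embedded in regulated products (Annex I)"),
]


def obligations_live_on(day: date) -> list[str]:
    """Return every milestone whose application date is on or before `day`."""
    return [label for start, label in MILESTONES if day >= start]


# Which phases would already apply at a planned EU launch date?
for label in obligations_live_on(date(2026, 9, 1)):
    print(label)
```

A check like this is only as current as the dates it encodes, so the list should be reviewed against the official text before being relied on.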

How Does ISO 42001 Help UK Businesses Comply with the EU AI Act?

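ISO/IEC 42001:2023 is the first international standard for AI management systems. It is jurisdiction-neutral and built around 10 clauses (with the management-system requirements in Clauses 4 to 10), 38 Annex A controls across 9 control areas, normative implementation guidance in Annex B, and informative guidance in Annex C (AI-related objectives and risk sources) and Annex D (use of the management system across domains and sectors). The standard does not itself contain a mapping to the EU AI Act; organisations build that mapping on top of it.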

For UK businesses facing both UK sector regulator expectations and the EU AI Act, ISO 42001 offers a single control framework that satisfies both. The overlap is substantial:

- AI policy and governance: ISO 42001 Clauses 5.2 and 5.3, with Annex A.2 and A.3, require a documented AI policy and defined roles and responsibilities. This evidences UK accountability expectations and EU AI Act governance requirements.

- Risk management: Clause 6.1.2 requires an AI risk management process. This directly maps to the risk management system required for high-risk systems under Article 9 of the EU AI Act.

- AI system impact assessment: Clause 6.1.4 and Annex A.5 require impact assessments. This provides the structure for fundamental rights impact assessments required of certain deployers.

- Data governance: Annex A.7 addresses data acquisition, quality, provenance, and preparation. This aligns with the data governance requirements under Article 10 of the EU AI Act.

- AI lifecycle controls: Annex A.6 covers objectives, design, development, deployment, operation, and validation. This underpins the technical documentation, logging, accuracy, and robustness obligations in Articles 11 to 15.

- Transparency and information: Annex A.8 covers information for interested parties. This supports the Article 13 and Article 50 transparency requirements.

- Third-party and supplier management: Annex A.10 addresses supplier relationships. This is essential for deployers integrating third-party AI systems into EU-facing services.

- Human oversight and responsible use: Annex A.9 on use of AI systems supports the Article 14 human oversight obligations.

A certified ISO 42001 AI Management System (AIMS) does not, by itself, produce an EU AI Act conformity assessment. It does, however, provide the underlying management system, controls, and evidence that make a conformity assessment demonstrable rather than aspirational. A robust ISO 42001 AIMS is likely to be read by EU notified bodies and market surveillance authorities as useful evidence of organisational AI governance maturity, although ISO 42001 is not a harmonised standard under the Act and carries no presumption of conformity.

How ISMS.online Maps UK and EU AI Compliance in One Platform

ISMS.online gives UK organisations a single workspace to run ISO 42001, align to EU AI Act obligations, and evidence UK regulator expectations, without maintaining three parallel programmes. Key capabilities for the twin-track UK and EU reality include:

- Pre-configured AIMS. A working AI Management System aligned to the 10 clauses of ISO 42001, ready to tailor to your UK and EU scope on day one.

- Role-based scoping. Capture your EU AI Act roles (provider, deployer, importer, distributor) per AI system, so obligations are assigned against the right entity.

- AI risk register and impact assessment tooling. Dedicated registers for Clause 6.1.2 AI risk and Clause 6.1.4 AI system impact assessment, structured to evidence Article 9 EU AI Act risk management and fundamental rights impact assessments where required.

- Policy Packs aligned to Annex A.2. Pre-drafted AI policy templates with version control, approval workflows, and user attestations so policies are demonstrably live.

- Statement of Applicability builder. Maintain a live Statement of Applicability across all 38 Annex A controls, with cross-references to EU AI Act articles and UK regulator expectations.

- Audit management. Plan and run internal audits (Clause 9.2) tagged against ISO 42001, EU AI Act, and UK principles, with findings linked to corrective actions.

- Multi-standard integration. Shared risk, evidence, and policy data with ISO 27001 and other standards you already run, so AI governance lands as an extension of your existing management system, not a separate project.

The result is that when an EU customer asks how you address Article 9, 10, or 14, or when a UK regulator asks how you evidence fairness, accountability, and human oversight, the answer is a single drill-down in one platform, not an evidence-gathering exercise across five tools. See our implementation guide for the end-to-end adoption path.

Why Choose ISMS.online for EU AI Act Compliance?

ISMS.online is built to give UK organisations a practical route through the EU AI Act, anchored in ISO 42001, without stitching together spreadsheets and generic GRC tools. Here is what you get:

- A working AIMS on day one. Pre-configured framework covering all 10 clauses and 38 Annex A controls, so your team starts tailoring rather than designing from scratch.

- Clear EU role scoping. Capture provider, deployer, importer, and distributor status per AI system, so EU AI Act obligations are assigned correctly from the start.

- AI-specific risk and impact tooling. Dedicated registers for AI risk and AI system impact assessment, with scoring, treatment plans, and review cycles aligned to both ISO 42001 and EU AI Act expectations.

- Policy library with adoption tracking. Pre-drafted AI policies with approval workflows, user attestations, and real-time adoption reporting, so policy is demonstrably live for UK regulators and EU market surveillance authorities alike.

- Multi-standard shared data. One platform for ISO 42001, ISO 27001, and more, with shared risks, controls, and evidence, so organisations running multiple management systems avoid duplicated effort.

- Assured Results Method. Proven implementation approach that has helped hundreds of organisations achieve certification first time, backed by onboarding, adoption support, and live human help.

- UK-based support. A UK team that understands both UK sector regulator expectations and EU market obligations, with live help when scoping questions get hard.

Ready to see the platform in action? Book a demo.

FAQs

Does the EU AI Act apply to UK businesses that have no EU office?

Yes, if the AI system is placed on the EU market, put into service in the EU, or the output is used in the EU. A UK SaaS business with no EU entity, no EU staff, and no EU servers is still in scope if EU customers use the AI feature or consume its outputs. The test is where the AI system or its output lands, not where the business is registered.

Is there a UK equivalent of the EU AI Act?

Not as a single horizontal statute. The UK has adopted a pro-innovation, principles-based, sector-led approach, with cross-sectoral principles (safety, transparency, fairness, accountability, contestability) implemented by existing regulators. The Government has signalled targeted legislation for the most capable models. For now, UK AI obligations sit across existing law (data protection, equalities, product safety, financial services) and regulator guidance, rather than in a dedicated AI Act.

When do UK businesses need to comply with the EU AI Act?

Obligations apply in phases. Prohibited practices and AI literacy requirements have applied since 2 February 2025. General-purpose AI model obligations apply from 2 August 2025. High-risk AI system obligations apply from 2 August 2026. Full application, including AI embedded in regulated products, applies from 2 August 2027. UK businesses should treat August 2026 as the working deadline for most commercial AI products targeting EU customers.

Does ISO 42001 certification mean we are EU AI Act compliant?

Not automatically. ISO 42001 certification demonstrates that you have a mature AI management system that addresses governance, risk, lifecycle, data, transparency, and oversight. It provides strong evidence of organisational AI governance and maps closely to the EU AI Act, but the Act requires specific product-level conformity assessments and registrations for high-risk systems that sit on top of the management system. A certified ISO 42001 AIMS makes those conformity assessments demonstrable rather than aspirational.

Are UK deployers of third-party AI tools in scope of the EU AI Act?

Yes, if the output of the AI system is used in the EU. A UK business using a third-party AI tool to screen EU job candidates, recommend products to EU consumers, or process EU customer queries is a deployer under the Act. Deployer obligations are lighter than provider obligations but still include following instructions of use, ensuring human oversight, monitoring operation, retaining logs, and (in some cases) conducting a fundamental rights impact assessment.

What are the penalties for UK businesses that breach the EU AI Act?

Penalties are tiered and significant. Breaches involving prohibited AI practices can attract fines of up to EUR 35 million or 7 percent of worldwide annual turnover, whichever is higher. Non-compliance with most other obligations (high-risk system requirements, transparency, etc.) can attract fines of up to EUR 15 million or 3 percent of worldwide annual turnover. Supplying incorrect or misleading information to authorities can attract fines of up to EUR 7.5 million or 1 percent of worldwide annual turnover. SMEs and start-ups face the lower of the two figures. UK incorporation does not shield a business from these penalties where the Act applies.
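The "whichever is higher" rule, and the "lower of the two" rule for SMEs and start-ups, can be shown with simple arithmetic. The sketch below uses the figures quoted above; the `PENALTY_TIERS` table and the `max_fine_eur` function are hypothetical names, and the result is a statutory ceiling, not a prediction of the fine an authority would actually set.

```python
# Fixed cap in EUR and percentage of worldwide annual turnover, per tier,
# using the figures quoted in the answer above.
PENALTY_TIERS = {
    "prohibited_practice": (35_000_000, 7),
    "other_obligation": (15_000_000, 3),
    "incorrect_information": (7_500_000, 1),
}


def max_fine_eur(tier: str, worldwide_turnover_eur: int, is_sme: bool = False) -> int:
    """Return the maximum fine for a tier, in whole euros.

    Larger organisations face the higher of the fixed cap and the turnover-based
    cap; SMEs and start-ups face the lower of the two.
    """
    fixed_cap, percent = PENALTY_TIERS[tier]
    turnover_cap = worldwide_turnover_eur * percent // 100
    pick = min if is_sme else max
    return pick(fixed_cap, turnover_cap)


# EUR 1 billion turnover: 7 percent exceeds the EUR 35 million fixed cap.
print(max_fine_eur("prohibited_practice", 1_000_000_000))
# SME with EUR 10 million turnover: the lower figure applies.
print(max_fine_eur("prohibited_practice", 10_000_000, is_sme=True))
```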

How should a UK business start preparing for EU AI Act compliance?

Start with a scoping exercise: list every AI system you develop, deploy, import, or distribute, and identify which have EU exposure. For each, determine your role (provider, deployer, importer, distributor) and the risk tier (prohibited, high-risk, limited, minimal, or GPAI). Then adopt an ISO 42001 AI Management System as the backbone, so governance, risk, data, lifecycle, transparency, and oversight are managed in one framework. ISMS.online gives you a pre-built AIMS, the registers and policies you need, and a path to ISO 42001 certification that doubles as your EU AI Act readiness evidence.