What Is an AI Governance Framework?

An AI governance framework is the structured set of policies, controls, roles, processes, and records that an organisation uses to design, develop, deploy, and operate AI systems in a safe, lawful, ethical, and accountable way. It answers four fundamental questions: who is responsible for AI in this organisation, what rules do we follow, how do we assess and treat AI risk, and how do we prove any of it to a regulator, auditor, customer, or board.

A framework is not a single document. It is the operating system for AI inside your business. Done well, it turns ad hoc AI decisions into repeatable, evidence backed practice. Done badly (or not at all), it leaves AI governance to whichever team happens to be closest to the model at the time.

Most organisations arrive at this point through one of three routes: enterprise procurement is asking for AI assurance, a regulation such as the EU AI Act is closing in, or the board wants a single credible story on AI risk. All three point in the same direction: you need a framework, and you need it documented.

Framework vs Policy vs Standard

The three terms get used interchangeably, which is unhelpful. To keep this page precise:

- Standard — an externally published, auditable specification. ISO 42001 is a standard.

- Framework — the tailored set of policies, controls, roles, and processes your organisation adopts, often based on a standard.

- Policy — a specific document within the framework, such as your AI policy, data governance policy, or acceptable use policy.

ISO 42001 is the standard. The AI Management System you build from it is the framework. The AI policy is one document inside that framework.

What Are the Components of an AI Governance Framework?

Every credible AI governance framework contains the same ten components. The labels vary, the emphasis shifts, but the substance does not. Below is the definitive list, mapped to the artefact you should produce and the corresponding ISO 42001 clause or Annex A control.

| Component | Purpose | Artefact | ISO 42001 clause / control |
|---|---|---|---|
| AI policy | Set direction, scope, principles, and accountability for AI across the organisation | Approved AI policy document | Clause 5.2, Annex A.2 |
| Risk management | Identify, assess, treat, and review AI specific risks on an ongoing basis | AI risk register with treatment plans | Clause 6.1.2, Annex B guidance |
| Impact assessment | Assess the impact of AI systems on individuals, groups, and society | AI system impact assessment register | Clause 6.1.4, Annex A.5 |
| Data governance | Control the acquisition, quality, provenance, and preparation of data used by AI | Data inventory, data quality records | Annex A.7 |
| Model lifecycle | Govern design, development, verification, deployment, and decommissioning of AI systems | Lifecycle records, model cards, validation reports | Annex A.6 |
| Third party oversight | Manage AI related suppliers, vendors, and customer relationships | Supplier register with AI due diligence | Annex A.10 |
| Human oversight | Ensure appropriate human review, intervention, and override of AI decisions | Human oversight procedures, role assignments | Annex A.9, Annex A.3 |
| Incident response | Detect, respond to, and learn from AI related incidents and near misses | AI incident procedure and log | Clause 10, Annex A.3 |
| Audit & review | Check the framework is working through internal audit and management review | Audit programme, management review minutes | Clauses 9.2, 9.3 |
| Training & culture | Build awareness, competence, and responsible behaviour around AI | Training records, awareness campaigns | Clause 7.2, Clause 7.3 |

If any of those ten components is missing, the framework has a hole. Procurement teams, auditors, and regulators will find the hole before you do.
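As a rough illustration of that gap check, the Python sketch below lists the components that have no artefact recorded against them. The component names come from the table above. The `find_gaps` function, the evidence dictionary, and the file names are hypothetical and stand in for whatever document store you actually use.

```python
# Component names are taken from the table above. Everything else here
# (function name, evidence structure, file names) is a hypothetical example.
REQUIRED_COMPONENTS = [
    "AI policy",
    "Risk management",
    "Impact assessment",
    "Data governance",
    "Model lifecycle",
    "Third party oversight",
    "Human oversight",
    "Incident response",
    "Audit & review",
    "Training & culture",
]


def find_gaps(evidence: dict[str, list[str]]) -> list[str]:
    """Return the components that have no artefact recorded against them."""
    return [name for name in REQUIRED_COMPONENTS if not evidence.get(name)]


# Hypothetical state: three components have evidence, one is planned but empty.
example_evidence = {
    "AI policy": ["ai-policy-v1.pdf"],
    "Risk management": ["ai-risk-register.xlsx"],
    "Incident response": [],  # procedure planned, nothing written yet
    "Training & culture": ["awareness-session-attendance.csv"],
}

gaps = find_gaps(example_evidence)
print(f"{len(gaps)} of {len(REQUIRED_COMPONENTS)} components have no evidence:")
for name in gaps:
    print(f"  - {name}")
```

An empty list counts as a gap here, on the assumption that a planned artefact is not evidence until it exists.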

How Do You Build an AI Governance Framework From Scratch?

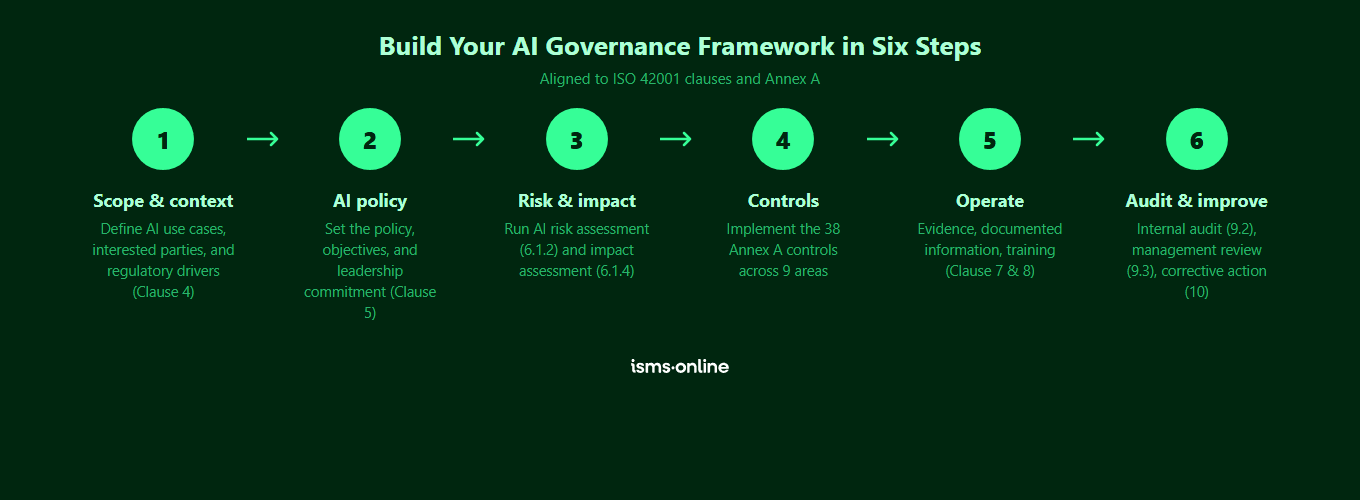

Building an AI governance framework from a blank page is possible, but most organisations underestimate the effort. Expect 3 to 6 months of focused work even with experienced people. The sequence below is the one we see work consistently.

Step 1: Define Scope, Context, and Leadership

Decide what the framework covers: which business units, which AI use cases, which geographies, and which regulatory regimes. Document internal and external issues, interested parties, and their requirements. This is ISO 42001 Clause 4 in plain English, and skipping it is the most common cause of bloated, unusable frameworks.

A framework without a named, accountable owner at executive level is a wishlist. Appoint an AI governance lead, define the reporting line to the board or executive committee, and clarify how AI decisions escalate. Draft an AI policy that states the organisation’s position, principles, and risk appetite, and have leadership approve it.

Step 2: Build the AI Risk and Impact Registers

Inventory every AI system in scope, including third party AI embedded in SaaS tools. For each system, run an AI risk assessment (focused on risk to the organisation) and an AI system impact assessment (focused on impact on individuals and society). Treat them as living registers, not one off exercises.
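One way to keep the two assessments visible per system is a simple inventory record. The sketch below is illustrative only: the `AISystem` fields, the `missing_assessments` helper, and the system names are assumptions made for the example, not a structure prescribed by ISO 42001.

```python
from dataclasses import dataclass


@dataclass
class AISystem:
    """One row of the AI system inventory (all field names are illustrative)."""
    name: str
    third_party: bool = False      # e.g. AI embedded in a SaaS tool
    risk_assessed: bool = False    # risk to the organisation
    impact_assessed: bool = False  # impact on individuals and society


def missing_assessments(inventory: list[AISystem]) -> dict[str, list[str]]:
    """Map each system name to the assessments it still needs."""
    todo: dict[str, list[str]] = {}
    for system in inventory:
        needed = []
        if not system.risk_assessed:
            needed.append("risk assessment")
        if not system.impact_assessed:
            needed.append("impact assessment")
        if needed:
            todo[system.name] = needed
    return todo


# Hypothetical inventory, including third party AI.
inventory = [
    AISystem("support-chatbot", third_party=True, risk_assessed=True),
    AISystem("credit-scoring-model", risk_assessed=True, impact_assessed=True),
    AISystem("cv-screening-tool", third_party=True),
]

todo = missing_assessments(inventory)
for system_name, needed in todo.items():
    print(f"{system_name}: still needs {', '.join(needed)}")
```

Running a check like this on a schedule is one way to treat the registers as living records rather than one off exercises.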

Step 3: Design the Control Set

Map your risks and impacts to controls. If you are anchoring to ISO 42001, this is where you work through the 38 Annex A controls across the 9 control areas (A.2 to A.10) and produce a Statement of Applicability that explains which controls apply, which do not, and why.
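A Statement of Applicability can be thought of as a list of control decisions, each with a reason. The sketch below shows one possible shape and a basic completeness check. The `SoAEntry` fields and `validate_soa` function are hypothetical, and the control IDs are placeholders for illustration rather than the full Annex A control list.

```python
from dataclasses import dataclass

# Control areas A.2 to A.10, as described in the step above.
CONTROL_AREAS = {f"A.{n}" for n in range(2, 11)}


@dataclass
class SoAEntry:
    control_id: str     # placeholder ID, e.g. "A.7.4"
    applicable: bool    # does the control apply to this organisation?
    justification: str  # why it applies, or why it is excluded


def validate_soa(entries: list[SoAEntry]) -> list[str]:
    """Return a list of problems; an empty list means the checks passed."""
    problems = []
    for entry in entries:
        # "A.7.4" -> "A.7": the first two dotted parts identify the area.
        area = ".".join(entry.control_id.split(".")[:2])
        if area not in CONTROL_AREAS:
            problems.append(f"{entry.control_id}: outside control areas A.2 to A.10")
        if not entry.justification.strip():
            problems.append(f"{entry.control_id}: no justification recorded")
    return problems


# Hypothetical entries: one complete, one missing a reason, one mistyped.
entries = [
    SoAEntry("A.2.2", True, "AI policy approved by the executive committee"),
    SoAEntry("A.7.4", False, ""),
    SoAEntry("A.11.1", True, "Control ID entered incorrectly"),
]

problems = validate_soa(entries)
for problem in problems:
    print(problem)
```

Excluded controls need a justification just as much as included ones, which is why the check does not look at the `applicable` flag.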

Step 4: Document the Processes

Write the policies, procedures, and records the framework depends on: an AI policy, a data governance policy, a model development procedure, an incident response procedure, a supplier management procedure, and a human oversight procedure. Keep each one short, owned, versioned, and approved. Resist the urge to write 40 page documents nobody reads.

Step 5: Operationalise Through Training and Awareness

A framework only works if the people who build and use AI systems know what it requires of them. Build role specific awareness and competence training, attestation workflows, and a feedback loop for questions and concerns.

Step 6: Audit, Review, Improve

Run internal audits to check the framework operates as designed. Hold management reviews to steer it. Track findings and corrective actions to closure. Update the framework as AI use cases, regulation, and risk evolve.
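Tracking findings to closure mostly comes down to knowing which ones are still open past their due date. The sketch below is a minimal illustration under assumed names: the `Finding` fields, the `overdue` helper, the references, and the dates are all hypothetical.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class Finding:
    """An internal audit finding with its corrective action deadline."""
    ref: str
    description: str
    due: date
    closed_on: Optional[date] = None  # None means the finding is still open


def overdue(findings: list[Finding], today: date) -> list[Finding]:
    """Return findings that are still open and past their due date."""
    return [f for f in findings if f.closed_on is None and f.due < today]


# Hypothetical findings from an internal audit.
findings = [
    Finding("F-01", "AI policy not attested by new starters",
            due=date(2025, 3, 1), closed_on=date(2025, 2, 20)),
    Finding("F-02", "Impact assessment missing for one system",
            due=date(2025, 3, 15)),
    Finding("F-03", "Supplier due diligence record incomplete",
            due=date(2025, 6, 30)),
]

late = overdue(findings, today=date(2025, 4, 1))
for finding in late:
    print(f"{finding.ref} is overdue (due {finding.due.isoformat()})")
```

The review date is passed in as `today` rather than read from the system clock, which keeps the check repeatable when it is rerun for a management review.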

For a more detailed walkthrough of this sequence, see our full implementation guide and our piece on closing the AI governance gap.

Why Is ISO 42001 the Most Widely Adopted AI Governance Framework?

You do not have to base your AI governance framework on ISO 42001. You can combine the NIST AI Risk Management Framework, the OECD AI Principles, the EU AI Act’s requirements, and internal engineering standards, then hand stitch them together. Many organisations have tried. Most end up reinventing structure that already exists and is independently auditable.

ISO 42001 is the first international management system standard specifically for AI. That matters for three reasons:

- It is certifiable. An accredited certification body can audit your framework and issue a certificate. That is a credible signal to customers, investors, and regulators in a way that self attestation is not. ISMS.online does not issue certification itself; we help you build the framework that a certification body will audit.

- It is comprehensive. The 10 clauses cover context, leadership, planning, support, operation, performance evaluation, and improvement. The 38 Annex A controls cover every component in the table above. Annex B provides normative implementation guidance. Annexes C and D are informative: Annex C covers potential AI related organisational objectives and risk sources, and Annex D covers use of the AI management system across domains and sectors. You get structure for the whole framework, not just the interesting bits.

- It aligns with what you already run. ISO 42001 follows the Annex SL high level structure shared by ISO 27001, ISO 9001, and ISO 14001. If you already run an ISO 27001 Information Security Management System, you can extend rather than rebuild. Annex D of ISO 42001 discusses how to integrate the AI management system with other management system standards, including ISO 27001.

In practical terms, ISO 42001 gives you a ready made AI governance framework template. Your job is to tailor it, not to invent it. That saves months of design time and sharply reduces the risk of ending up with a framework that looks good on paper and fails in an audit.

What About Other AI Frameworks?

The main alternatives are:

- NIST AI Risk Management Framework (AI RMF). Excellent thinking on AI risk, voluntary, not certifiable, US origin. Maps well to ISO 42001 and works as a complement rather than a replacement.

- OECD AI Principles. High level principles on trustworthy AI. Useful as a values layer, not a framework.

- Sector specific frameworks. Finance, healthcare, and public sector regulators increasingly publish their own AI governance expectations. Layer them on top of ISO 42001 rather than treating them as substitutes.

- Proprietary big tech frameworks. Internally useful, externally unaudited, and not portable across your vendor landscape.

For most organisations pursuing enterprise credibility, ISO 42001 is the anchor. Everything else slots into it.

How Does an AI Governance Framework Differ From the EU AI Act?

This is the question enterprise buyers and boards ask most often, and the two things get conflated constantly. They are not the same.

- The EU AI Act is a regulation. It imposes legal obligations on providers and deployers of AI systems placed on the EU market, with tiered requirements based on risk category (unacceptable, high, limited, minimal) and hefty fines for non compliance. You either comply with it or you do not.

- An AI governance framework is how you comply. It is the internal operating model that helps you meet regulatory obligations, including the EU AI Act, along with customer requirements and ethical commitments.

- ISO 42001 is a management system standard. Implementing it does not automatically make you EU AI Act compliant, but it gives you the governance, risk management, impact assessment, and documentation machinery that EU AI Act conformity assessment requires.

Think of it this way: the EU AI Act tells you what to achieve, an AI governance framework tells you how to organise yourselves to achieve it, and ISO 42001 is the template for that framework. For a side by side breakdown, see our detailed comparison of ISO 42001 vs EU AI Act.

How Does ISMS.online Operationalise Your AI Governance Framework?

A framework that lives in a collection of Word documents and SharePoint folders is a framework in name only. To actually govern AI, every component needs a live home, an owner, a workflow, and a link to evidence. That is what ISMS.online provides.

The platform takes each of the ten components in the table above and turns it into operational infrastructure:

- AI policy lives in a Policy Pack with version control, approval workflows, user attestations, and adoption reporting. You can prove the policy is not just written, it is active.

- Risk management runs in an AI risk register with scoring, treatment plans, owners, and review cycles, aligned to Clause 6.1.2 and the normative guidance in Annex B.

- Impact assessments are a first class register, separate from risk, aligned to Clause 6.1.4 and Annex A.5. Each impact assessment links to the AI systems, data, and controls involved.

- Data governance flows through the asset register, with AI specific fields for provenance, quality, and preparation, aligned to Annex A.7.

- Model lifecycle is tracked through workflows covering design, development, validation, deployment, and decommissioning, with evidence captured at each stage against Annex A.6.

- Third party oversight sits in a supplier register with AI due diligence fields, aligned to Annex A.10.

- Human oversight, incident response, audit and review, and training each have dedicated modules tied to the relevant clauses and controls.

The platform ships with a pre-built AI Management System (AIMS) mapped to all 10 clauses and 38 Annex A controls, so you are tailoring rather than designing from scratch. The Statement of Applicability is live, not a dusty document. Internal audits, corrective actions, and management reviews are integrated workflows.

If you want a scoping view before you commit, start with a structured gap analysis against the standard.

Why Choose ISMS.online for AI Governance?

ISMS.online is built for organisations that want an AI governance framework they can actually operate, not one that lives in a binder. Here is what you get:

- A ready made framework on day one. Pre-configured AIMS mapped to all 10 ISO 42001 clauses and 38 Annex A controls, so every component in this page has a working home in the platform from the moment you log in.

- AI specific risk and impact tooling. Dedicated registers for AI risk (Clause 6.1.2) and AI system impact (Clause 6.1.4), with scoring, treatment, owners, and review cycles that satisfy the normative guidance in Annex B.

- Policy library with proof of adoption. Pre-drafted AI policy templates with approval workflows, attestations, and real time adoption reporting, so you can demonstrate your framework is active across the organisation.

- Live Statement of Applicability. Every Annex A control justified, linked to evidence, and always current. No more chasing the authoritative version.

- Integrated audit, review, and incident workflows. Internal audits (Clause 9.2), management reviews (Clause 9.3), corrective actions (Clause 10), and AI incidents all tracked in the platform with findings linked to controls and closure.

- Multi standard by design. If you already run ISO 27001, your risks, evidence, audits, and suppliers are reusable against ISO 42001, in line with the integration guidance in Annex D of the standard. One platform, one framework, no duplication.

- Assured Results Method. Proven implementation approach with adoption support and live human help, used by hundreds of organisations to achieve certification first time.

Ready to see the platform in action? Book a demo to see how ISMS.online operationalises your AI governance framework end to end.

FAQs

What is an AI governance framework in simple terms?

An AI governance framework is the structured set of policies, controls, roles, processes, and records your organisation uses to make sure AI systems are safe, lawful, ethical, and accountable. It covers who is responsible for AI, what rules apply, how AI risk is assessed and treated, and how you prove all of it to auditors, regulators, customers, and the board. ISO 42001 provides a ready made, certifiable template for exactly this.

Is there an AI governance framework template I can use?

Yes. ISO 42001 is, effectively, the international template for an AI governance framework. Its 10 clauses and 38 Annex A controls give you the structure, and Annex B provides normative implementation guidance. ISMS.online takes that template further by providing a pre-configured AI Management System with policies, risk and impact registers, a Statement of Applicability builder, and audit workflows, all mapped to the standard. You tailor the template rather than designing from scratch.

Can you give an example of an AI governance framework?

A typical ISO 42001 aligned AI governance framework contains: an approved AI policy; a governance committee with defined roles; an AI risk register; an AI system impact assessment register; a set of operational controls covering policies, data, lifecycle, third parties, human oversight, and incidents; a Statement of Applicability; an internal audit programme; a management review cycle; and training records. Each component is documented, owned, and linked to evidence. That is the practical shape of the framework, not just the theory.

How long does it take to build an AI governance framework?

For organisations starting from scratch, expect 3 to 6 months to reach a mature, audit ready state, depending on scope, number of AI use cases, and internal resource. Organisations that already run an ISO 27001 Information Security Management System can extend into an ISO 42001 based AI governance framework in weeks rather than months, because much of the governance infrastructure (risk management, document control, internal audit, management review) is already in place.

Does an AI governance framework replace the EU AI Act?

No. The EU AI Act is a regulation with legal obligations. An AI governance framework is the internal operating model you use to meet those obligations, alongside customer requirements and ethical commitments. ISO 42001 gives you the governance, risk, impact assessment, and documentation machinery that EU AI Act conformity assessment requires, but it does not substitute for the Act itself. Run both in parallel, with your framework as the operational engine.

Is ISO 42001 the only framework worth adopting?

It is the only international, certifiable AI management system standard currently available, which is why most enterprise buyers and boards anchor on it. The NIST AI Risk Management Framework, OECD AI Principles, and sector specific guidance are all valuable, but they work best as complements layered on top of ISO 42001 rather than as standalone substitutes. For a credible, audit ready framework, ISO 42001 is the practical default.

Does ISMS.online issue ISO 42001 certification?

No. ISMS.online is a compliance platform, not a certification body. We give you the AI Management System, controls, registers, policies, Statement of Applicability, and audit workflows that an accredited certification body will assess. The certificate itself is issued by the certification body, not by us. This is the correct separation of duties under ISO/IEC 17021 and is an important assurance point for customers and regulators.